🔥 My React Handbook - Part I

I am a developer creating open-source projects and writing about web development, side projects, and productivity.

In this comprehensive guide, we delve into the art of writing "clean code" in React.js. Having embarked on my React journey five years ago and continuing to utilize it in my role as a Software Engineer, I've encountered various challenges along the way. Reflecting on past experiences, I strive to approach them in a more refined manner moving forward.

React stands out as one of the most prominent technologies in the realm of JavaScript, often hailed as the preferred choice by many developers. Unlike some frameworks, React grants developers the freedom to structure projects as they see fit. While this fosters creativity, it can also lead to disorder if not managed properly, particularly when collaborating within a team setting. Hence, establishing a clear and comprehensible structure becomes imperative.

React Advanced Concepts

How React’s Render, Effects, and Refs work under the hood

Let’s start with this snippet to get the thoughts rolling:

function ExploringReactRefs() {

// Why does this ref start as null?

// When does it get its actual value?

const divRef = useRef<HTMLDivElement>(null);

// This feels like it should work... but does it?

// When exactly does this effect run?

useEffect(() => {

console.log("Effect:", divRef.current?.getBoundingClientRect());

}, []);

// What's different about this effect?

// Why might we need this instead of useEffect?

useLayoutEffect(() => {

console.log("Layout Effect:", divRef.current?.getBoundingClientRect());

}, []);

// What's special about this callback ref approach?

// When does this function actually get called?

// See the second div below where handleRef is used.

const handleRef = (node: HTMLDivElement | null) => {

if (node) {

console.log("Callback ref:", node.getBoundingClientRect());

}

};

return (

<div className="flex gap-4">

{/* When can we actually access this element via divRef? */}

<div ref={divRef}>Using useRef</div>

{/* How is this different from useRef? */}

<div ref={handleRef}>Using callback ref</div>

</div>

);

}

State updates and renders

Whenever your component’s state is updated, React will re-render it. Re-rendering a component renders all of its children(yes, you can optimize this, but it’s not the point here).

And just to be clear, effects only run if their dependencies change. If an array is empty, it will only run once when the component is mounted (created).

Let’s just go over the snippet to be brutally clear about this:

function Component() {

// 1. No dependency array - runs on EVERY render

useEffect(() => {

// Effect runs

return () => {

/* Cleanup runs before next effect */

};

}); // Missing dependency array

// 2. Empty array - runs only on mount/unmount

useEffect(() => {

// Effect runs once

return () => {

/* Cleanup runs on unmount */

};

}, []);

// 3. With dependencies - runs when deps change

useEffect(() => {

// Effect runs if count changed

return () => {

/* Cleanup runs before next effect if count changed */

};

}, [count]);

// Same rules apply for useLayoutEffect

}

Mount means the component gets created.

Unmount means the component gets destroyed, or in simpler words, removed from the DOM. I used to believe in my younger days that this meant navigating away from the page. But this can also be the case if you are conditionally rendering a component.

When a component is rendered, it goes through two main phases:

Render phase

Commit phase

We are gonna break those down into simpler terms.

For now, understand that every single time a render happens, two phases are executed: Render and commit.

Virtual DOM

Before we dive into the Render phase, let’s talk about the Virtual DOM.

A lot of people who lack understanding instantly rush towards “Virtual DOM is to make React faster“. It’s a bit funny because it’s not really the case. You have UI libraries today, such as Solid.js, that don’t have a Virtual DOM and are faster than React. That statement is very confusing and incorrect.

In actuality, React uses Fiber architecture instead of a simple Virtual DOM. This lets React split into chunks and prioritize it. This is still good for us to understand the basis.

Virtual DOM is just Javascript objects. It’s the representation of the actual DOM.

So here, we noticed the first “cost“ already. We are storing a representation of the DOM in memory. Not that this isn’t a big deal. Millions, if not billions of websites, are using React.

By having a Virtual DOM, React isn’t tied to the browser’s DOM.

This means React can render to different platforms.

That’s why React Native exists and works. Mobile apps do not use the browser’s DOM.

Just pseudo code for our enlightenment:

// React can render to different targets

function render(virtualElement) {

switch (environment) {

case "web":

return renderToDOM(virtualElement);

case "mobile":

return renderToNative(virtualElement);

case "server":

return renderToString(virtualElement);

}

}

Batching updates

As we discussed before, React re-renders the entire component (including its children) whenever a state update happens.

This means that when state updates happen, it could result in a lot of DOM changes in the end.

With Virtual DOM, React can batch these updates. It can figure out all the changes that it needs to do and apply them in a single pass when the commit phase is executed.

// Without Virtual DOM

state.change1(); // DOM update

state.change2(); // DOM update

state.change3(); // DOM update

// With Virtual DOM

state.change1(); // Update virtual tree

state.change2(); // Update virtual tree

state.change3(); // Update virtual tree

// One single DOM update at the end!

Render phase

Let’s finally talk about the render phase.

This is the first phase of the render cycle.

One thing that annoys me sometimes when learning is all the terminology people try to use.

We can also call this the first step of going from state change to DOM change.

Let’s look at some pseudo code:

// RENDER PHASE

function renderPhase(newState) {

// 1. React creates/updates Virtual DOM by calling components

function handleStateUpdate() {

// Create new Virtual DOM tree

const newVirtualDOM = {

type: "div",

props: { className: "app" },

children: [

{

type: "span",

props: { children: newState },

},

],

};

// 2. Reconciliation (Diffing)

// React compares new Virtual DOM with previous one

// Figures out what needs to change in real DOM

const changes = diff(previousVirtualDOM, newVirtualDOM);

// Results in a list of required DOM operations

// [{type: 'UPDATE', path: 'span/textContent', value: newState}]

}

}

With the new state, React creates a new Virtual DOM tree.

React uses this new Virtual DOM tree to figure out what changes need to be made to the actual DOM.

It does so by comparing the new Virtual DOM tree with the previous one.

Now React knows exactly the changes that need to be made and we don’t need to update the full DOM every time a state update happens.

Commit phase

Now we know what changes we need to make.

The commit phase is often summarized as “React updates the DOM“. But it’s a bit deeper than that.

If you are not familiar with the event loop. I recommend reading up on it before continuing. Chapters 12, 13, and 14 are relevant if you wanna learn more about the event loop. MDN or YouTube are also good resources.

Let’s look at some pseudo code:

// 1. React's Commit Phase (Synchronous JavaScript)

// This runs on the main thread

function commitToDOM() {

// React calls DOM APIs

// Each call gets added to the call stack

mutateDOM() {

document.createElement()

element.setAttribute()

element.appendChild()

// ...

}

// remember useLayoutEffect?

// Now we'll run all the layout effects

// this is synchronous

// the code in here gets added to the call stack too

runLayoutEffects()

// Queue useEffect for later

queueMicrotask(() => {

runEffects()

})

}

// commitToDOM() is done - time for browser to work

// 2. Browser's Work

// - Calculate Layout

// - Paint

// - Composite

// 3. Microtask Queue

// Now useEffect runs

How browsers work is out of the scope of this post. But that is super interesting. It’s on my list of things to learn in 2025. I have done some research on it when I dug into hidden classes and stuff. Let’s go over those points quickly, then get back to the topic:

Calculating layout: The browser calculates exact positions and sizes.

Paint: The browser converts layout results into visual pixels.

Composite: The browser combines layers into a final screen image.

When we run the layout effects, we are running the synchronous Javascript code. The function call and the ones it contains get added to the call stack. Now, if you have been following along closely, you understand that every time the layout’s dependencies change, they will run again. This MEANS more synchronous code to go through before the browser can do its thing (which is why React recommends being careful with useLayoutEffect).

We then run the normal effects. These are queued up with queuedMicrotask() in our example. However, in actuality, React uses its own scheduling system. But I think it helps to review it as a microtask queue to sort of understand the basics.

When the browser does its things, it’s gonna first clear the entire call stack before it’s running anything from the microtask queue. Then, it runs the microtask queue.

Refs

Let’s focus on the refs from the original snippet.

const divRef = useRef<HTMLDivElement>(null);

This ref is created during the render phase. It starts as null because the DOM element doesn’t exist during the first render. It gets its actual value after React commits the changes to the DOM. But you can’t know exactly when this happens just by using useRef alone.

That’s why you always need to check if the ref is null before you use it.

if (divRef.current) {

console.log(divRef.current.getBoundingClientRect());

}

What happens when you use a callback ref?

const handleRef = (node: HTMLDivElement | null) => {

if (node) {

console.log("Callback ref:", node.getBoundingClientRect());

}

};

Called immediately when the element is attached to the DOM. You can be 100% sure that the callback ref will run at the right time. It’s null when the element is removed in case you need to clean up. It runs before useLayoutEffect. It’s best for immediate DOM measurements or setup.

function Tooltip({ text, targetRef }) {

const tooltipRef = useRef(null);

// Wrong: Might cause flicker

// Why?

// Because this happens after the DOM is painted

// You will tooltip in its original position

// Then it flickers when this runs

useEffect(() => {

const targetRect = targetRef.current.getBoundingClientRect();

tooltipRef.current.style.top = `${targetRect.bottom}px`;

}, []);

// Better: No flicker

// Why?

// Because this happens before the DOM is painted

// You will see the tooltip in its final position

useLayoutEffect(() => {

const targetRect = targetRef.current.getBoundingClientRect();

tooltipRef.current.style.top = `${targetRect.bottom}px`;

}, []);

// Best: Most direct

// Why?

// Because this happens immediately after the DOM is attached (layout effect happens AFTER the DOM is attached)

const handleRef = (node) => {

if (node) {

const targetRect = targetRef.current.getBoundingClientRect();

node.style.top = `${targetRect.bottom}px`;

}

};

return <div ref={handleRef}>{text}</div>;

}

When do cleanup functions run?

After a render, right BEFORE React runs the effect (useEffect or useLayoutEffect, only if dependencies change), it runs the cleanup functions with the previous values. Then, it runs the new effect with the new values. Or, of course, if the component unmounts.

How React’s Reconciliation Decides What to Re-Render

Ever wondered how React knows exactly which parts of your UI need updating when state changes? It’s not magic — a meticulously designed algorithm called reconciliation that makes thousands of split-second decisions about what to re-render.

After diving deep into the React source code and running countless performance test cases, I’ve discovered that most developers have a completely wrong mental model of how React actually works. Understanding reconciliation isn’t just academic curiosity — it’s a skill for writing React apps that feel blazing fast.

Let me show you how React exactly makes these decisions, and why some innocent-looking code can completely tank your apps’ performance.

The Problem React Hard To Solve

Before we dive into the solution, let’s understand the challenge. When your app’s state changes, React needs to update the UI. The native approach would be to destroy everything and build from scratch.

// The naive approach (thankfully React doesn't do this)

function updateUI() {

document.body.innerHTML = ''; // Destroy everything

renderEntireAppFromScratch(); // Rebuild everything

}

This would work, but it would be painfully slow. Rebuilding the entire DOM tree is expensive, and you will lose focus states, scroll positions, and any user input.

The smart approach is to figure out the minimum set of changes needed to update the UI. But here’s the catch: the state-of-the-art algorithms have a complexity in the order of O(n³) where n is the number of elements in the tree. If we used this in React, displaying 1000 elements would require in the order of one billion comparisons.

React’s solution? Build a heuristic O(n) algorithm based on two key assumptions that hold true for almost all real-world applications.

The Two Assumptions That Changed Everything

React’s reconciliation algorithm is built on two simple assumptions:

Assumption 1: Different Element Types = Different Trees

Two elements of different types will produce two different trees. This means if you change a <div> to a <span>, React assumes everything inside has changed and rebuilds the entire subtree.

// Before

<div>

<UserProfile />

<UserSettings />

</div>

// After

<span>

<UserProfile />

<UserSettings />

</span>

Even though UserProfile and UserSettings haven't changed, React will unmount and remount all components. This might seem wasteful, but in practice, it’s rare to change element types, and this assumption allows React to skip complex assumptions about React-diffing algorithms.

Assumption 2: Keys Provide Stability Hints

The developer can hint which child elements may be stable across different renders with a key prop. This is where keys become crucial for performance.

Here’s a mind-blowing example of how keys affect reconciliation:

// Without keys - performance disaster

<ul>

<li>Duke</li>

<li>Villanova</li>

</ul>

// Adding item at the beginning

<ul>

<li>Connecticut</li> // React thinks this is "Duke" that changed

<li>Duke</li> // React thinks this is "Villanova" that changed

<li>Villanova</li> // React thinks this is a new item

</ul>

// With keys - efficient updates

<ul>

<li key="duke">Duke</li>

<li key="villanova">Villanova</li>

</ul>

// Adding item at the beginning

<ul>

<li key="connecticut">Connecticut</li> // New item

<li key="duke">Duke</li> // Unchanged

<li key="villanova">Villanova</li> // Unchanged

</ul>

With proper keys, React knows exactly which items are new, moved, or unchanged.

The Three-Step Decision Process

When React needs to update your UI, it follows a precise three-step process:

- Step 1: Element Type Comparison

React first compares the types of elements. Here’s what happens in different scenarios:

// Scenario A: Same type, different props

// Before

<input type="text" value="hello" />

// After

<input type="text" value="world" />

// Decision: Update the value attribute only

// Scenario B: Different types

// Before

<input type="text" value="hello" />

// After

<textarea value="hello" />

// Decision: Unmount input, mount textarea (complete rebuild)

Here is a mind-bending example that shows how reconciliation can surprise you:

function App() {

const [showFirst, setShowFirst] = useState(true);

return (

<div>

{showFirst ? (

<input key="first" placeholder="First input" />

) : (

<input key="second" placeholder="Second input" />

)}

</div>

);

}

When you toggle showFirst, React sees different keys and completely unmounts the first input and mounts the second one. Any text you typed gets lost.

But without keys:

function App() {

const [showFirst, setShowFirst] = useState(true);

return (

<div>

{showFirst ? (

<input placeholder="First input" />

) : (

<input placeholder="Second input" />

)}

</div>

);

}

React sees the same element type (input) in the same position and just updates the placeholder. Your typed text is preserved! React doesn't treat the email input as an entirely new node, because the type of input element (<input />) is the same, even though it's conditionally rendered.

- Step 2: Props and State Diffing

If the element types match, React moves to comparing props and state:

function UserCard({ name, avatar, isOnline }) {

// React compares: name, avatar, isOnline

// Only re-renders if any of these changed

return (

<div className={isOnline ? 'online' : 'offline'}>

<img src={avatar} alt={name} />

<h3>{name}</h3>

</div>

);

}

Here’s where it gets interesting. React does shallow comparison, so:

// This will cause unnecessary re-renders

function App() {

const [count, setCount] = useState(0);

return (

<UserList

users={users}

settings={{ theme: 'dark', locale: 'en' }} // New object every render!

/>

);

}

// Better approach

function App() {

const [count, setCount] = useState(0);

const settings = useMemo(() => ({

theme: 'dark',

locale: 'en'

}), []);

return (

<UserList

users={users}

settings={settings} // Stable reference

/>

);

}

- Step 3: Children's Reconciliation

For children, React uses keys to match elements between renders:

// React's internal reconciliation logic (simplified)

function reconcileChildren(oldChildren, newChildren) {

const updates = [];

// If children have keys, use key-based matching

if (newChildren.some(child => child.key)) {

const oldChildrenByKey = mapByKey(oldChildren);

const newChildrenByKey = mapByKey(newChildren);

for (const key in newChildrenByKey) {

if (oldChildrenByKey[key]) {

// Child exists - check if it needs updates

updates.push(compareAndUpdate(oldChildrenByKey[key], newChildrenByKey[key]));

} else {

// New child - mount it

updates.push(mount(newChildrenByKey[key]));

}

}

} else {

// No keys - fall back to index-based matching

for (let i = 0; i < Math.max(oldChildren.length, newChildren.length); i++) {

// This is much less efficient for dynamic lists

}

}

return updates;

}

The Fiber Resolution: Making Reconciliation Interuptible

React 16 introduced a complete rewrite of the reconciliation algorithm called Fiber. The old “Stack Reconciler“ had a critical flaw: once it started reconciling, it couldn’t stop until it finished.

// Old Stack Reconciler (pseudo-code)

function reconcileRecursively(element) {

// This runs to completion - can't be interrupted

const children = element.children;

for (let child of children) {

reconcileRecursively(child); // Blocks the main thread

}

updateDOM(element);

}

This could block the main thread for hundreds of milliseconds on complex apps, making animations janky and interactions unresponsive.

Fiber solves this by breaking work into units:

// Fiber Reconciler (simplified)

function workLoop() {

while (nextUnitOfWork && shouldYieldToMainThread()) {

nextUnitOfWork = performUnitOfWork(nextUnitOfWork);

}

if (nextUnitOfWork) {

// Yield to main thread, resume later

scheduleCallback(workLoop);

} else {

// All work done, commit changes

commitRoot();

}

}

React Fiber divides the update into units of work. It can assign the priority to each unit of work, and has the ability to pause, reuse, or abort the unit of work if not needed.

This enables some incredible features:

Time slicing: React can pause work to handle user interactions

Priority-based updates: Animations get higher priority than data fetching

Concurrent rendering: Multiple updates can be processed simultaneously

Comprehensive Guide on React Re-renders

Original post: React re-render guide: everything, all at once.

When talking about React performance, there are two major stages that we need to care about:

initial render: happens when a component first appears on the screen

re-render: second and consecutive render of a component that is already on the screen

Re-render happens when React needs to update the app with some new data. Usually, this happens as a result of a user interacting with the app or some external data coming through via an asynchronous request or some subscription model.

Non-interactive apps that don’t have any asynchronous data updates will never re-render, and therefore don’t need to care about re-render performance optimization.

Watch “intro to re-renders” on Youtube.

Necessary re-renders: re-render of a component that is the source of the changes, or a component that directly uses the new information. For example, if a user types in an input field, the component that manages its state needs to update itself on every keystroke.

Unnecessary re-renders: re-render of a component that is propagated through the app via different re-render mechanisms due to either mistakes or inefficient app architecture. For example, if a user types in an input field and the entire page re-renders on every keystroke, the page has been re-rendered unnecessarily.

Unnecessary re-renders by themselves are not a problem. React is very fast and able to deal with them without users noticing anything.

However, if re-renders happen too often and/or on very heavy components, this could lead to user experience appearing “laggy”, visible delays on every interaction, or even the app becoming completely unresponsive.

When does a React component re-render itself?

There are four reasons why a component would re-render itself: state changes, parent (or children) re-renders, context changes, and hooks changes. There is also a bug myth: that re-renders happen when the component’s props change. By itself, it’s not true.

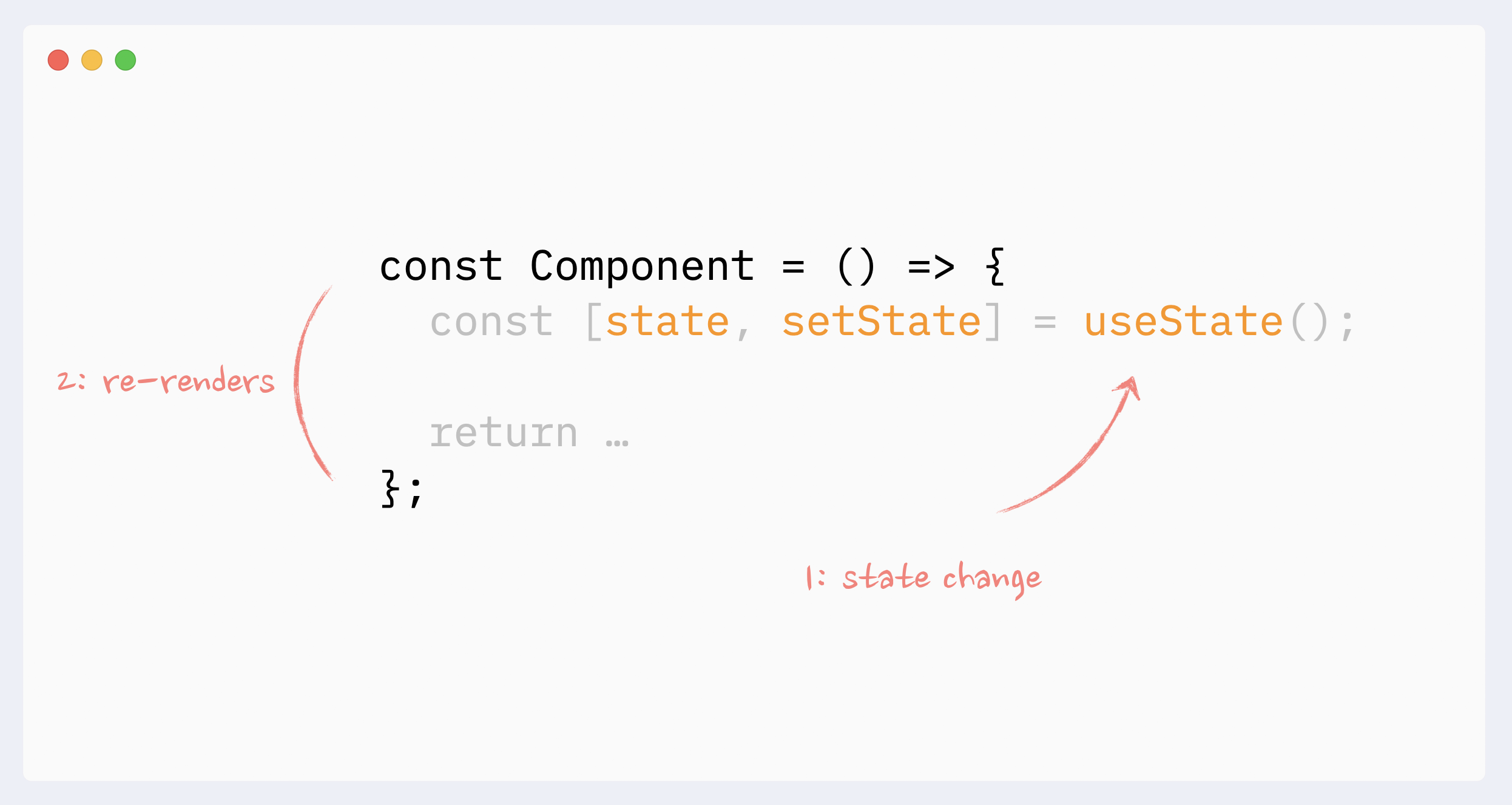

- Re-renders reason: state changes

When a component’s state changes, it will re-render itself. Usually, it happens either in a callback or in useEffect hook.

State changes are the “root” source of all re-renders.

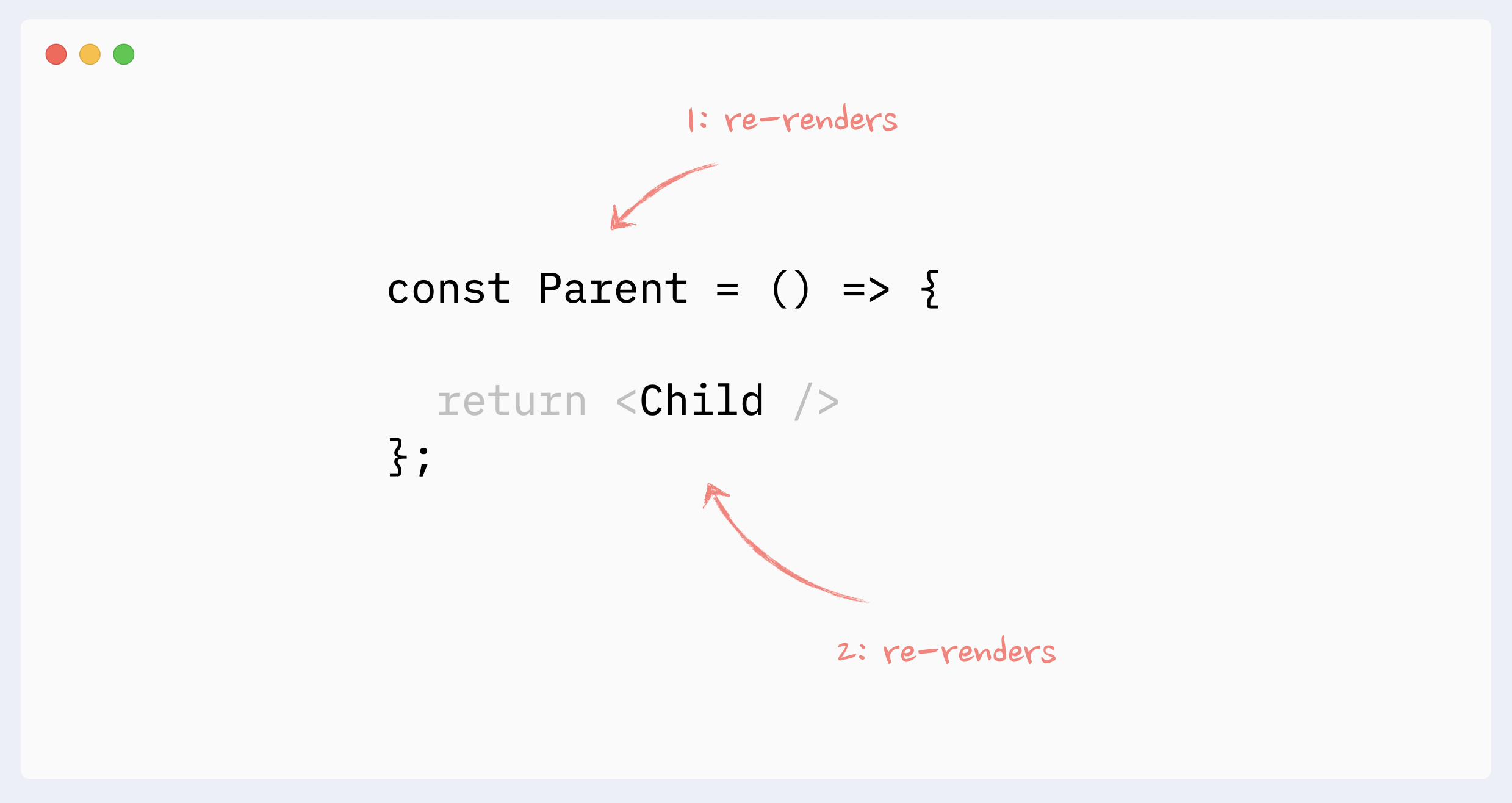

- Re-renders reason: parent re-renders

A component will re-render itself if its parent re-renders. Or, if we look at this from the opposite direction, when a component re-renders, it also re-renders all its children.

It always goes “down” the tree: the re-render of a child doesn’t trigger the re-render of a parent. (There are a few caveats and edge cases here, see the full guide for more details: The mystery of React Element, children, parents, and re-renders)

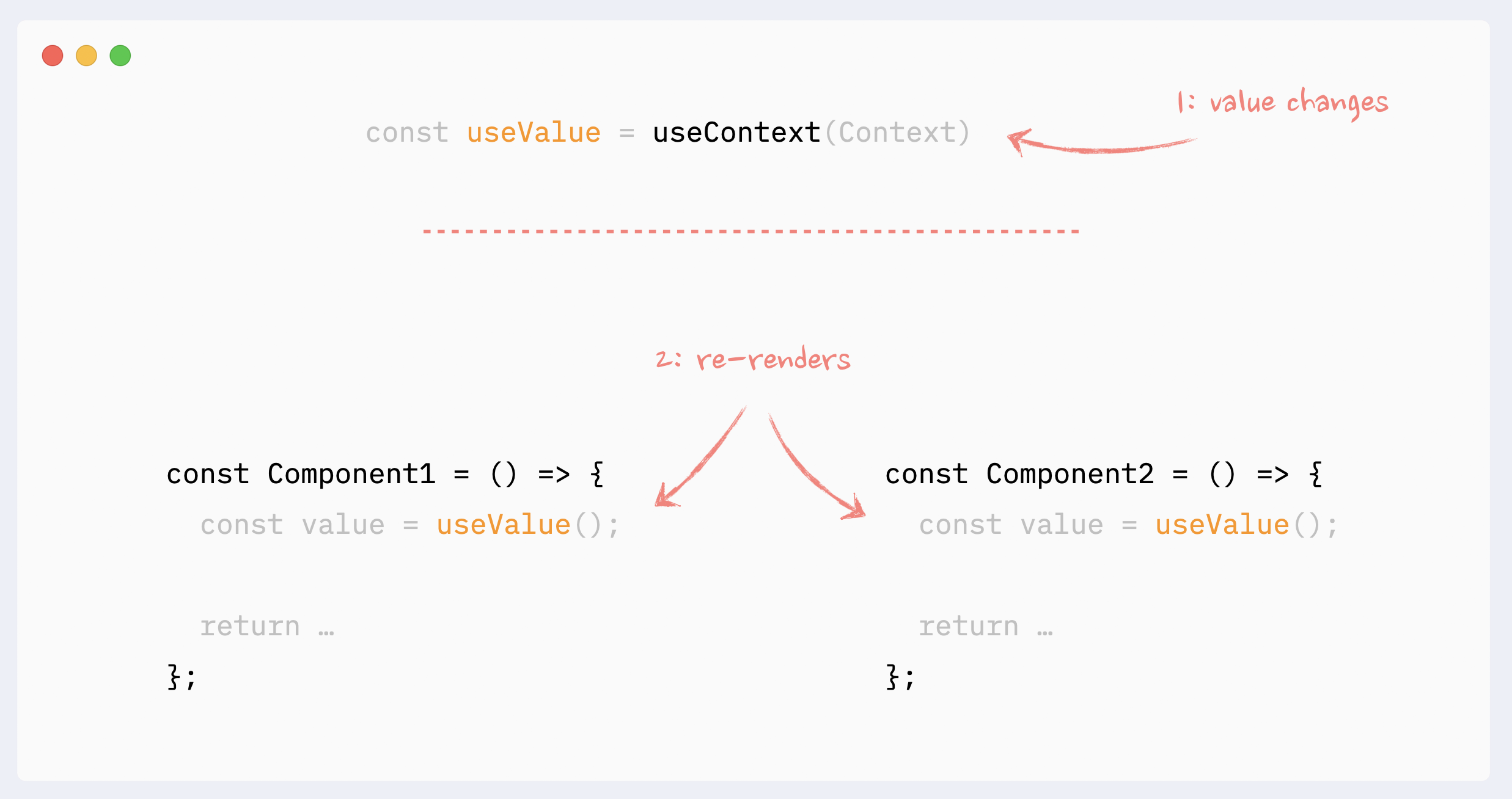

- Re-renders reason: context changes

When a value of the Context Provider changes, all components that use this context will re-render, even if they don’t use the changed portion of the data directly. Those re-renders can not be prevented with memoization directly, but there are a few workarounds that can simulate it

- Re-renders reason: hooks changes

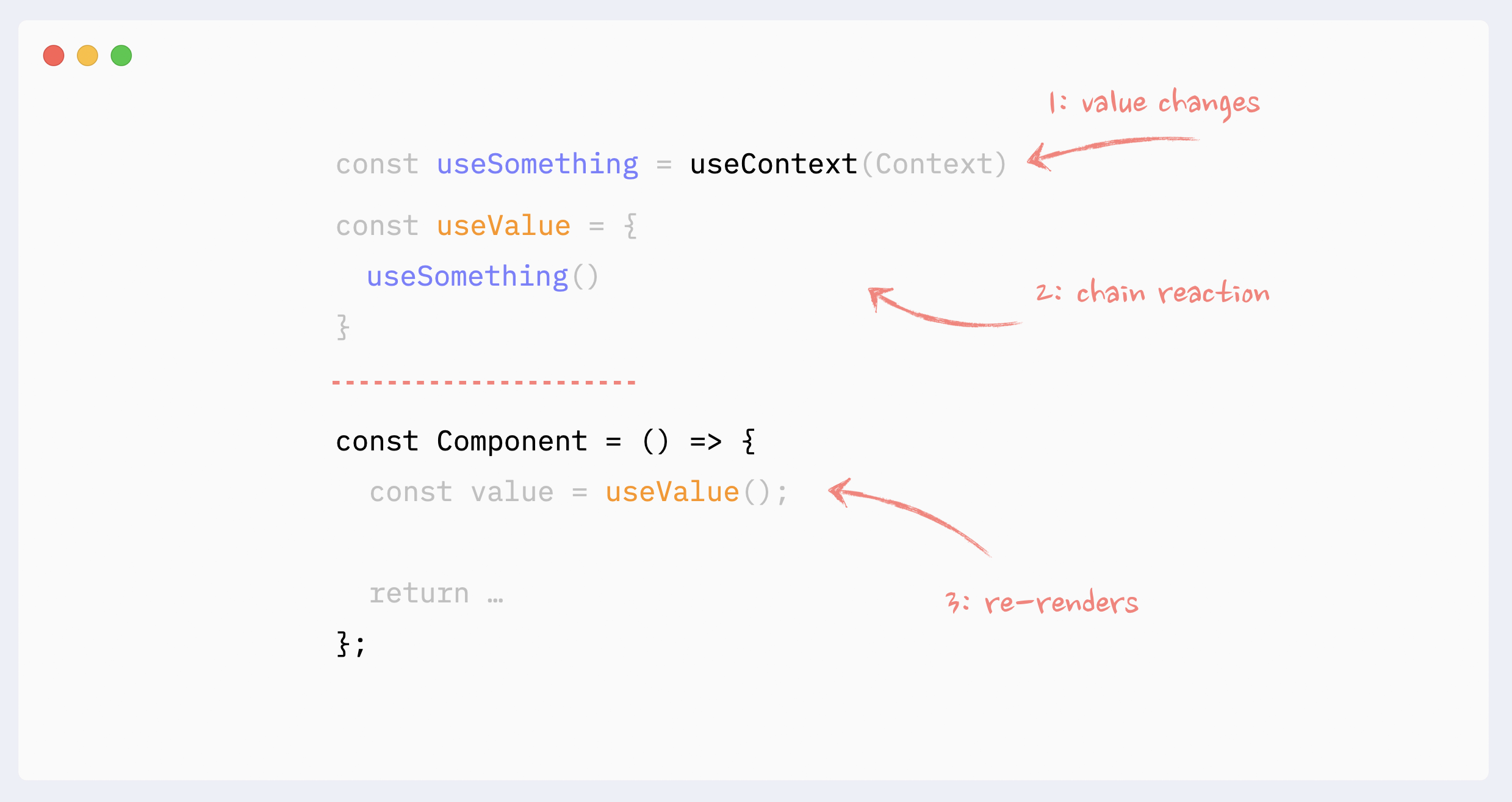

Everything that is happening inside a hook “belongs” to the component that uses it. The same rules regarding Context and State changes apply here:

State change inside the hook will trigger an unpreventable re-render of the “host” component

If the hook uses Context and Context’s value changes, it will trigger an unpreventable re-render of the “host” component

Hooks can be chained. Every single hook inside the chain still “belongs” to the “host” component, and the same rules apply to any of them.

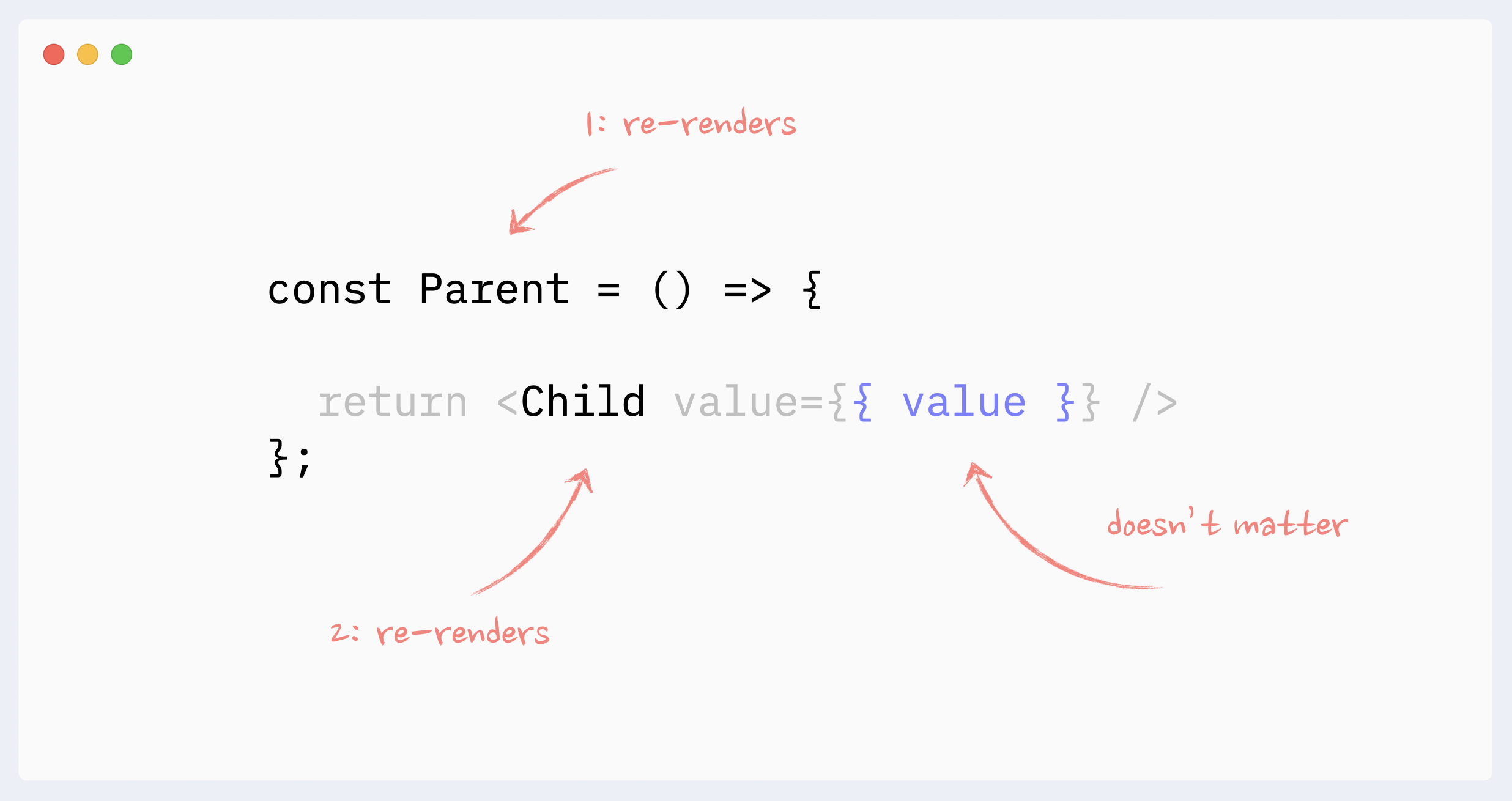

- Re-renders reason: props changes (the big myth)

It doesn’t matter whether the component’s props change or not when talking about re-renders of non-memoized components.

In order for props to change, they need to be updated by the parent component. This means the parent would have to re-render, which will trigger re-render of the child component regardless of its props.

Only when memoization techniques are used (React.memo, useMemo), then props change becomes important.

Preventing re-renders with composition

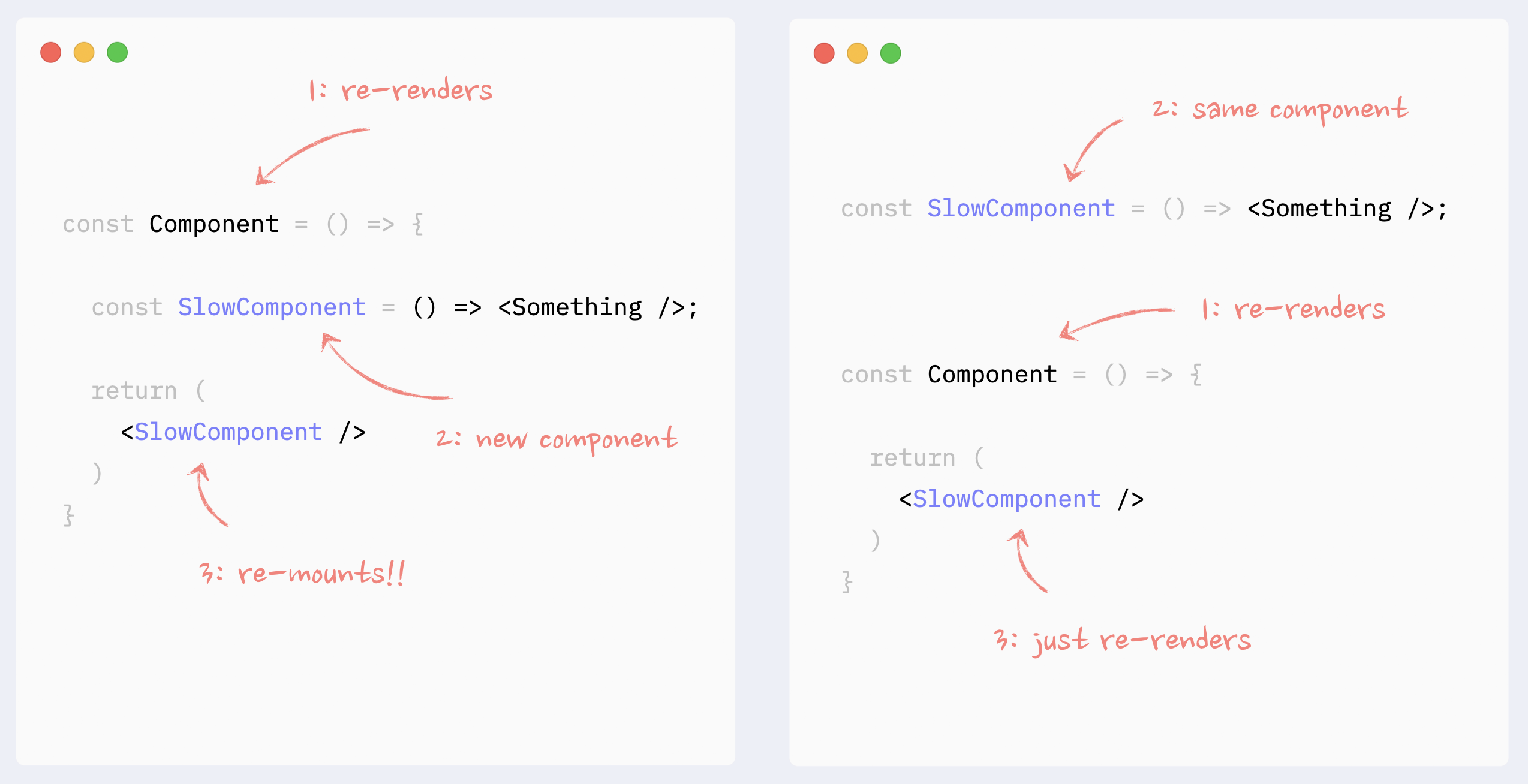

- ⛔️ Antipattern: Creating components in a render function

Creating components inside a render function of other components is an anti-pattern that can be the biggest performance killer. On every re-render, React will re-mount this component (destroy it and re-create it from scratch), which is going to be much slower than a normal render. On top of that, this will lead to such bugs as (possible flashes of content during re-renders, state being reset in the component with every re-render, useEffect with no dependencies triggered on every re-render, if a component was focused, focus will be lost.)

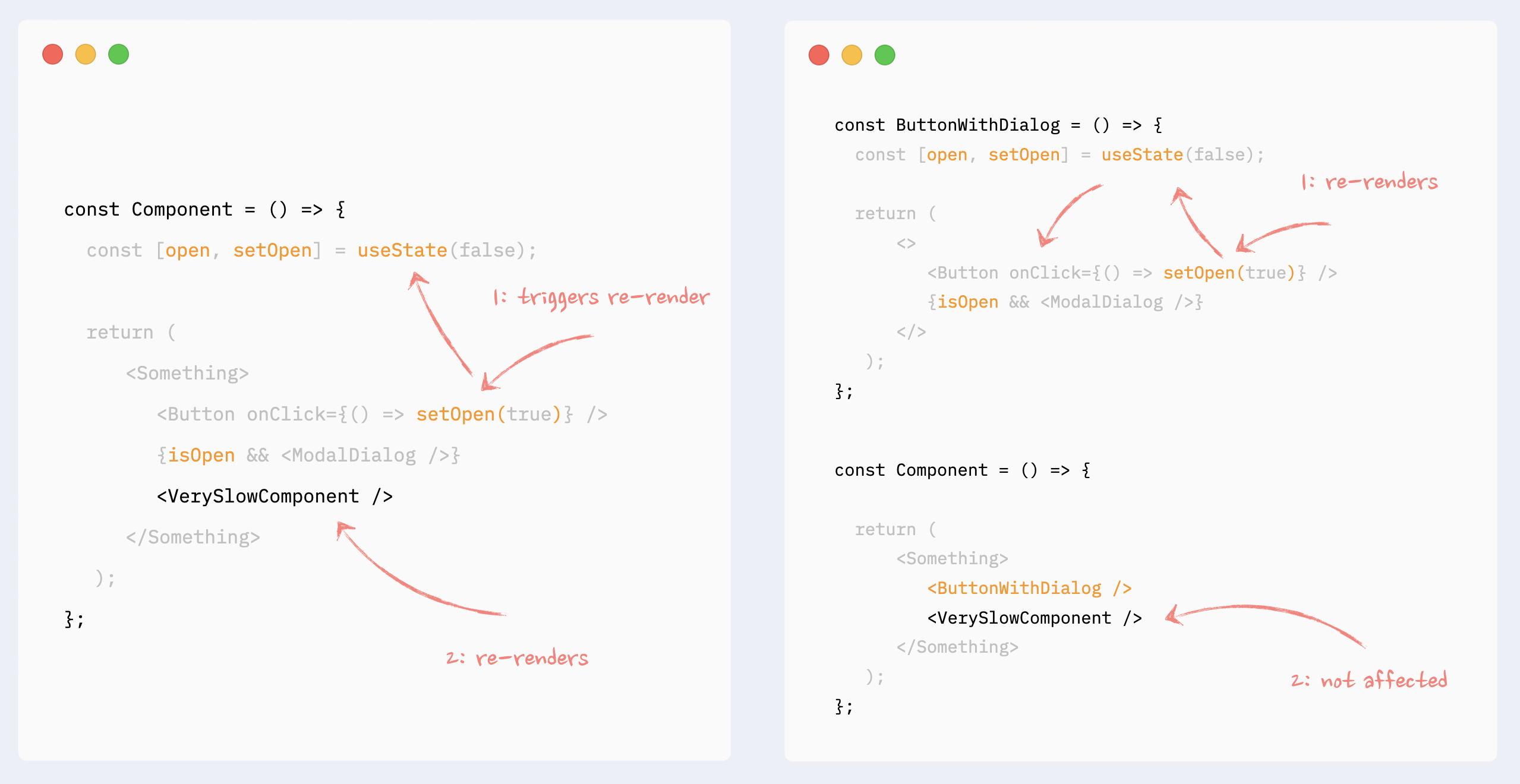

- ✅ Preventing re-renders with composition: moving state down

This pattern can be beneficial when a heavy component manages state, and this state is only used on a small, isolated portion of the render tree. A typical example would be opening/closing a dialog with a button click in a complicated component that renders a significant portion of a page.

In this case, the state that controls modal dialog appearance, dialog itself, and the button that triggers the update can be encapsulated in a smaller component. As a result, the bigger component won’t re-render on those state changes.

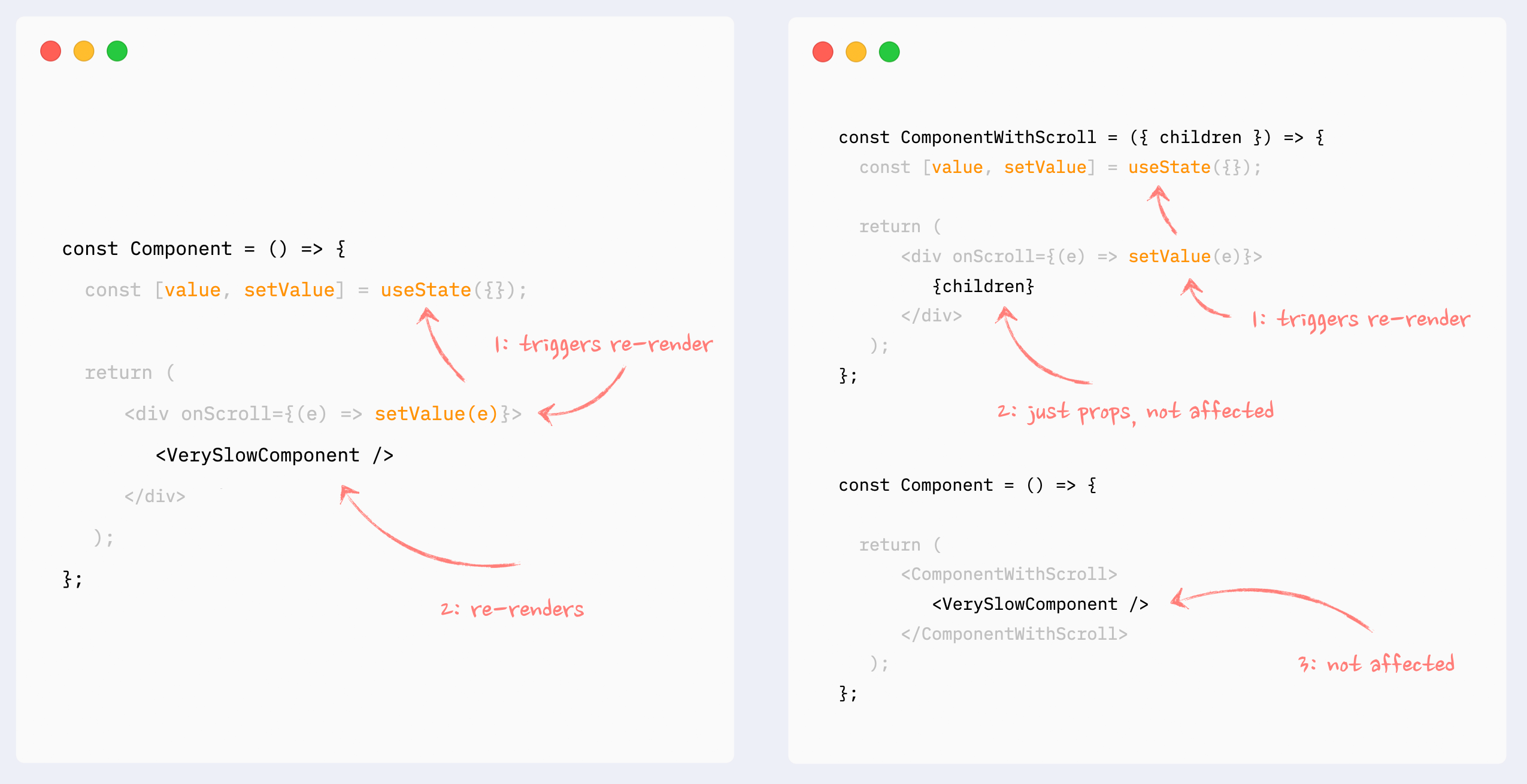

- ✅ Preventing re-renders with composition: children as props

This can also be called “wrap state around children”. This pattern is similar to “moving state down”: it encapsulates state changes in a smaller component. The difference here is that the state is used on an element that wraps a slow portion of the render tree, so it can’t be extracted that easily. A typical example would be onScroll or onMouseMove callbacks attached to the root element of a component.

In this situation, state management and components that use that state can be extracted into a smaller component, and the slow component can be passed to it as children. From the smaller component perspective children are just props, so they will not be affected by the state change and therefore won’t re-render.

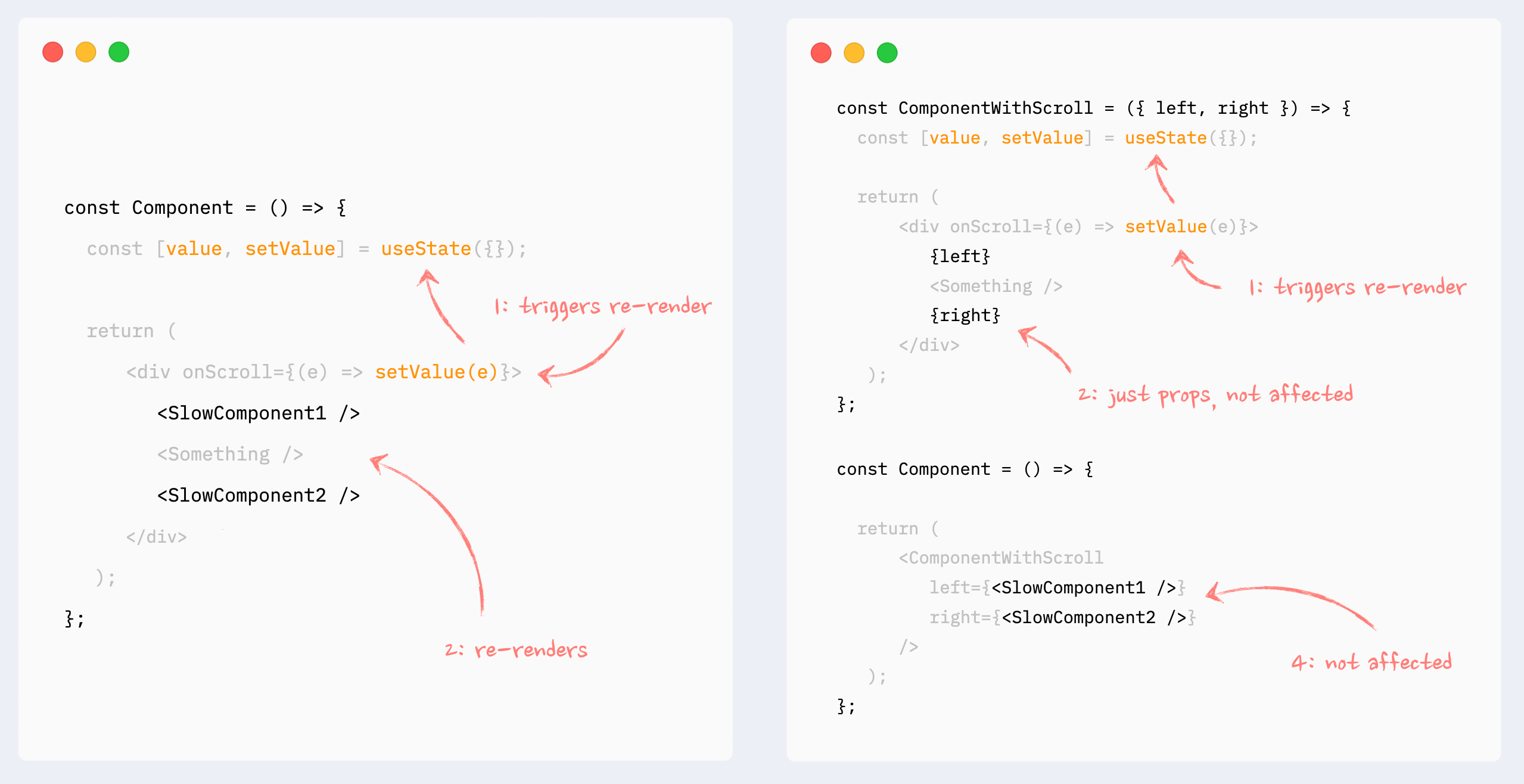

- ✅ Preventing re-renders with composition: components as props

Pretty much the same as the previous pattern, with the same behavior: it encapsulates the state inside a smaller component, and heavy components are passed to it as props. Props are not affected by the state change, so heavy components won’t re-render.

It can be useful when a few heavy components are independent from the state, but can’t be extracted as children as a group.

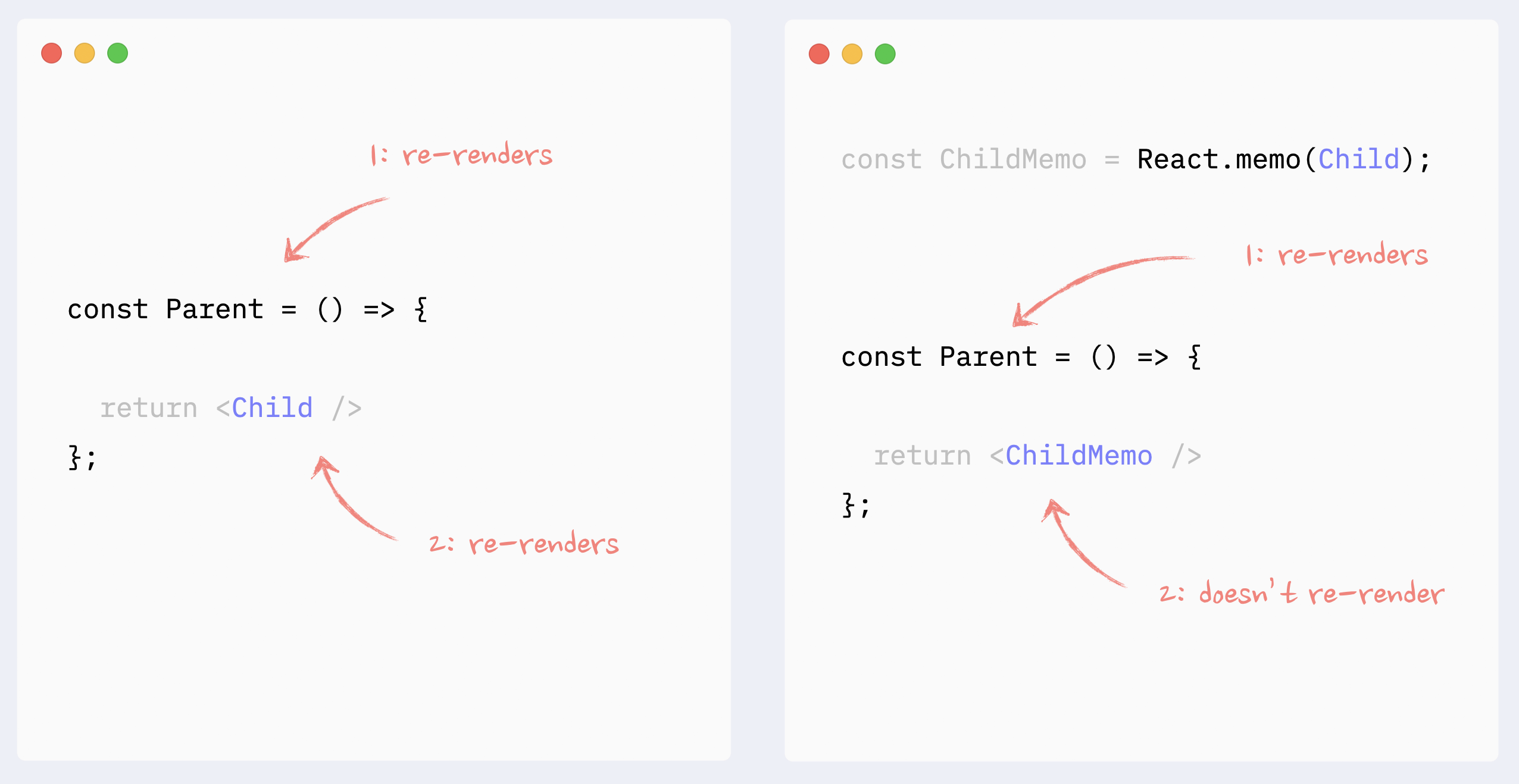

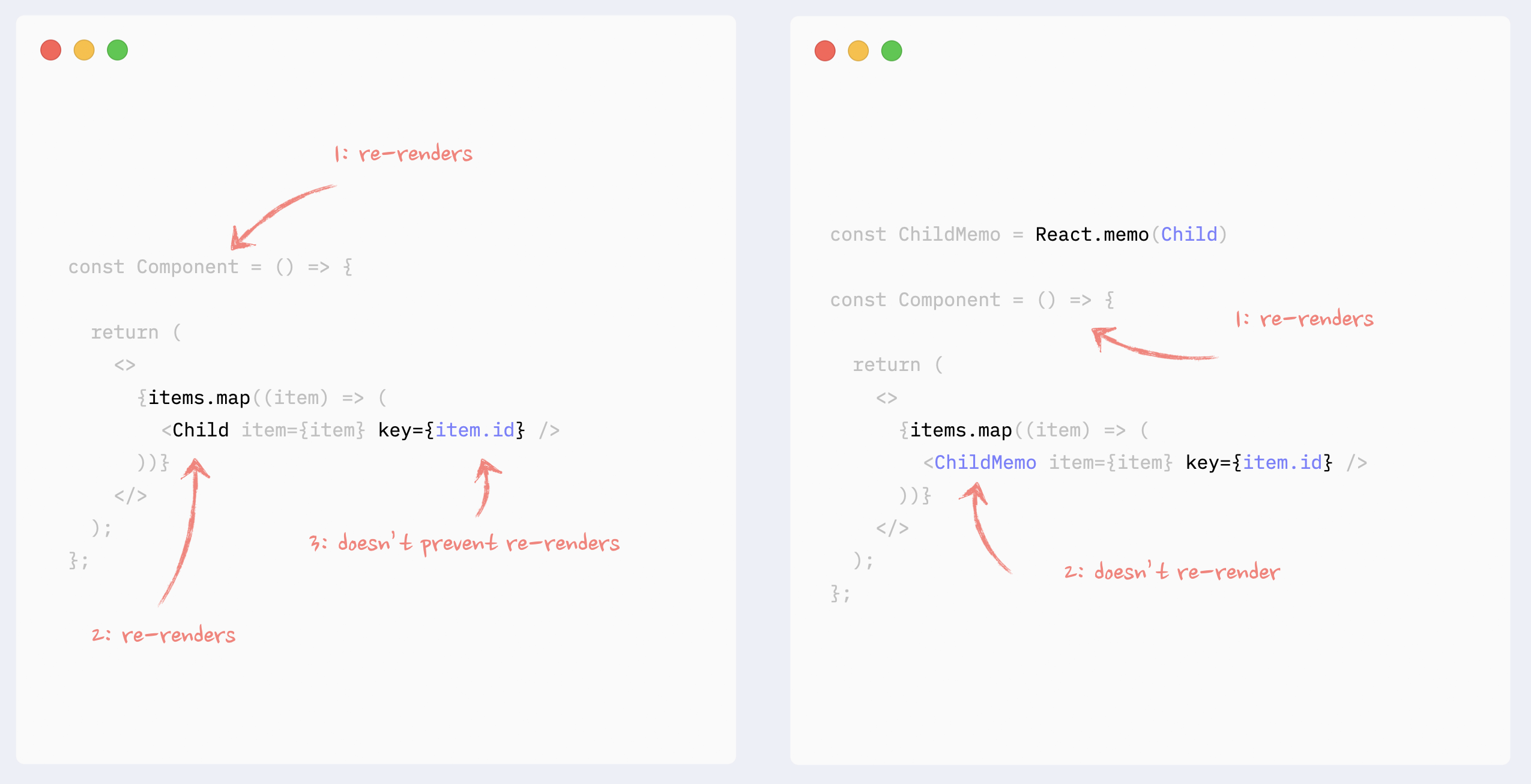

Preventing re-renders with React.memo

Wrapping a component in React.memo will stop the downstream chain of re-renders that is triggered somewhere up the render tree, unless this component’s props have changed.

This can be useful when rendering a heavy component that is not dependent on the source of re-renders (i.e., state, changed data).

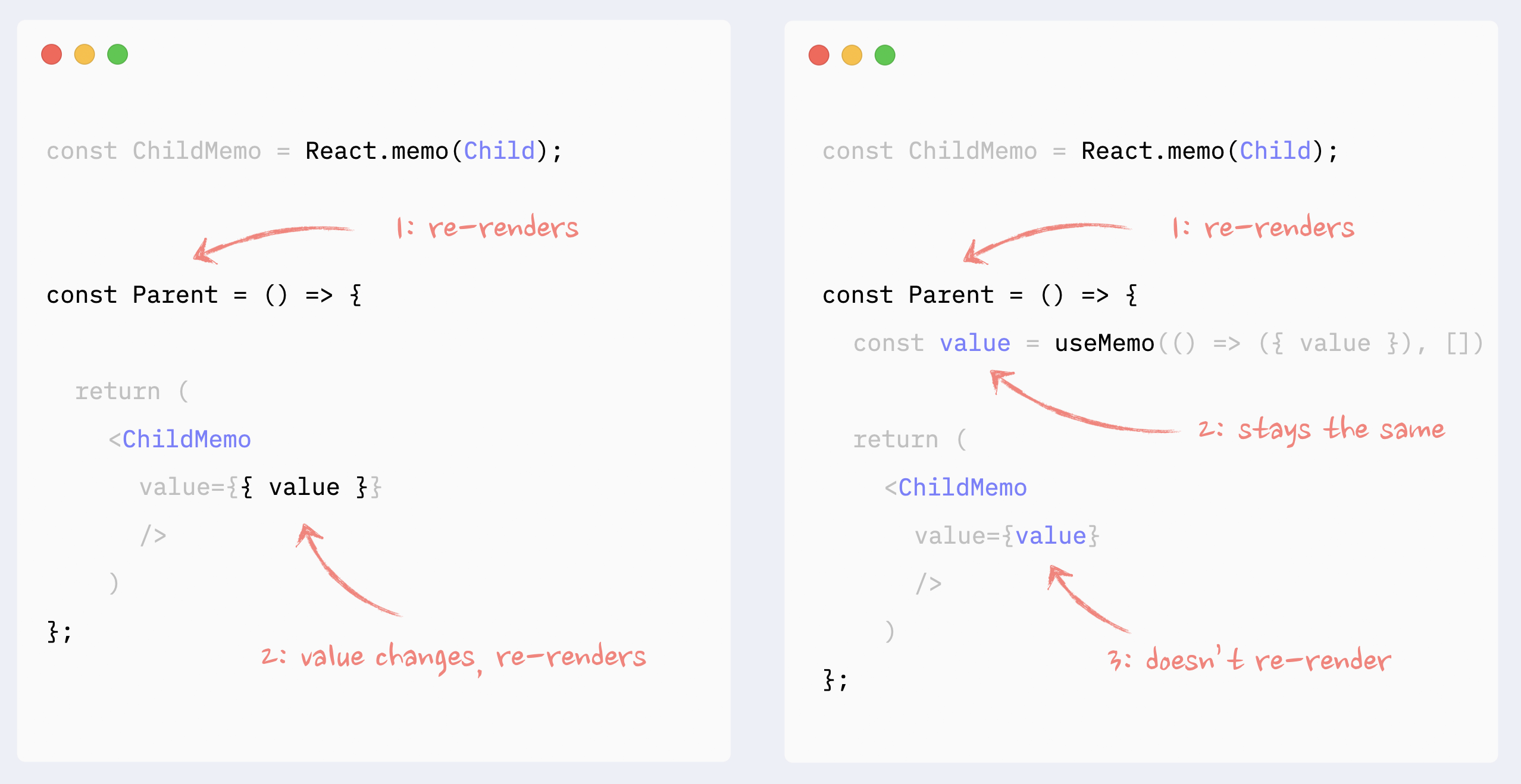

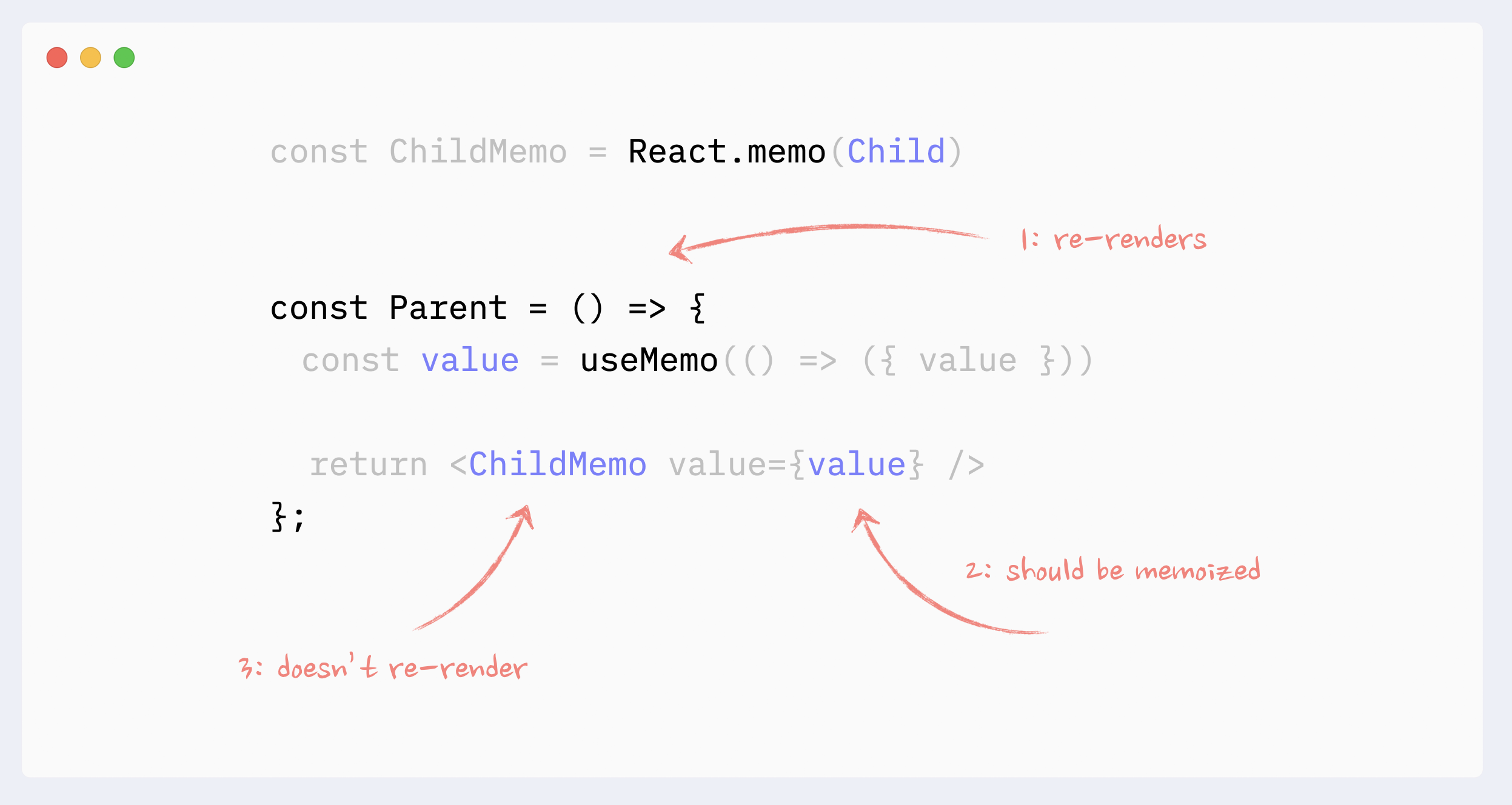

- ✅ React.memo: components with props

All props that are not primitive values have to be memoized for React.memo to work

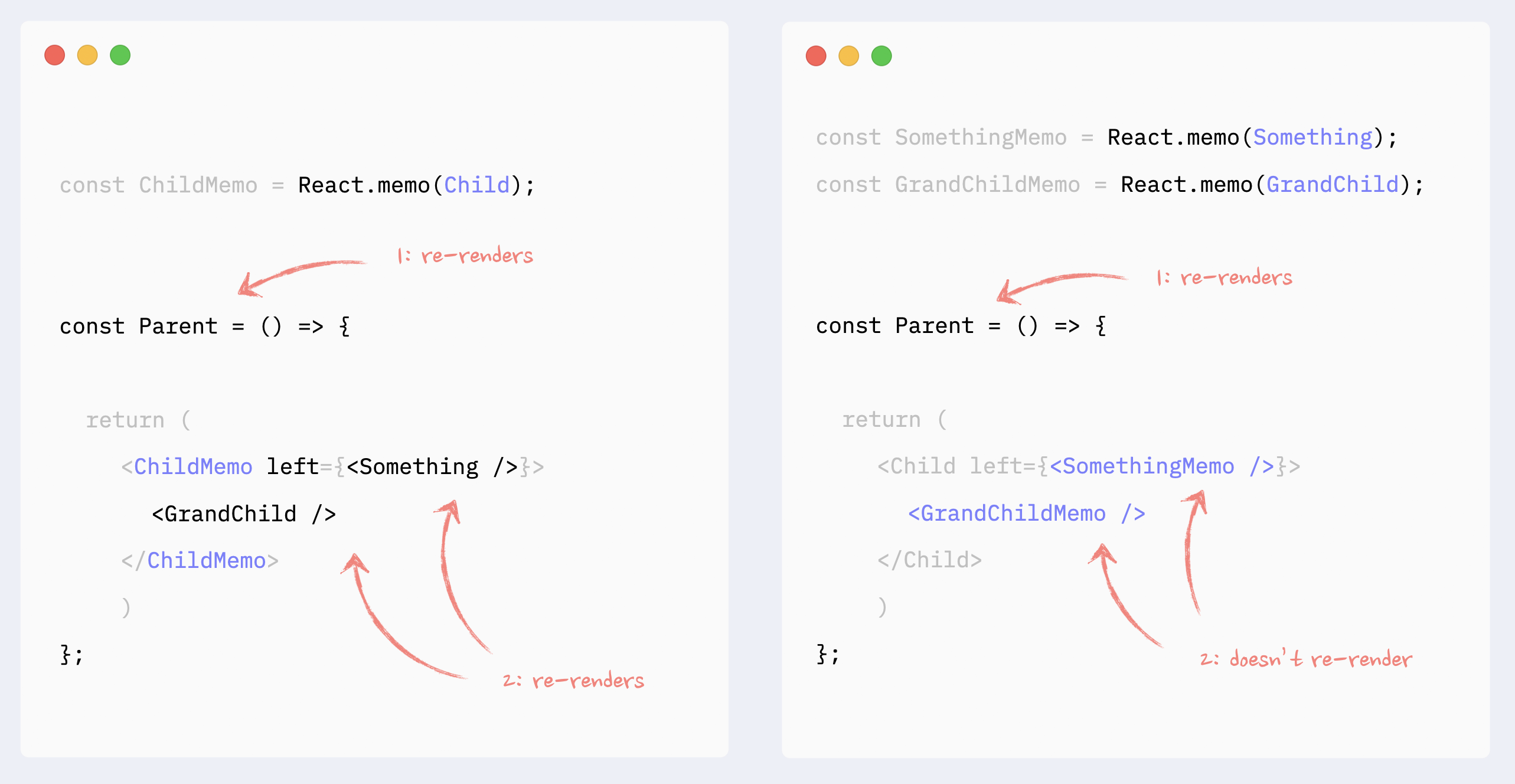

- ✅ React.memo: components as props or children

React.memo has to be applied to the elements passed as children/props. Memoizing the parent component will not work: children and props will be objects, so they will change with every re-render.

Improving re-render performance with useMemo/useCallback

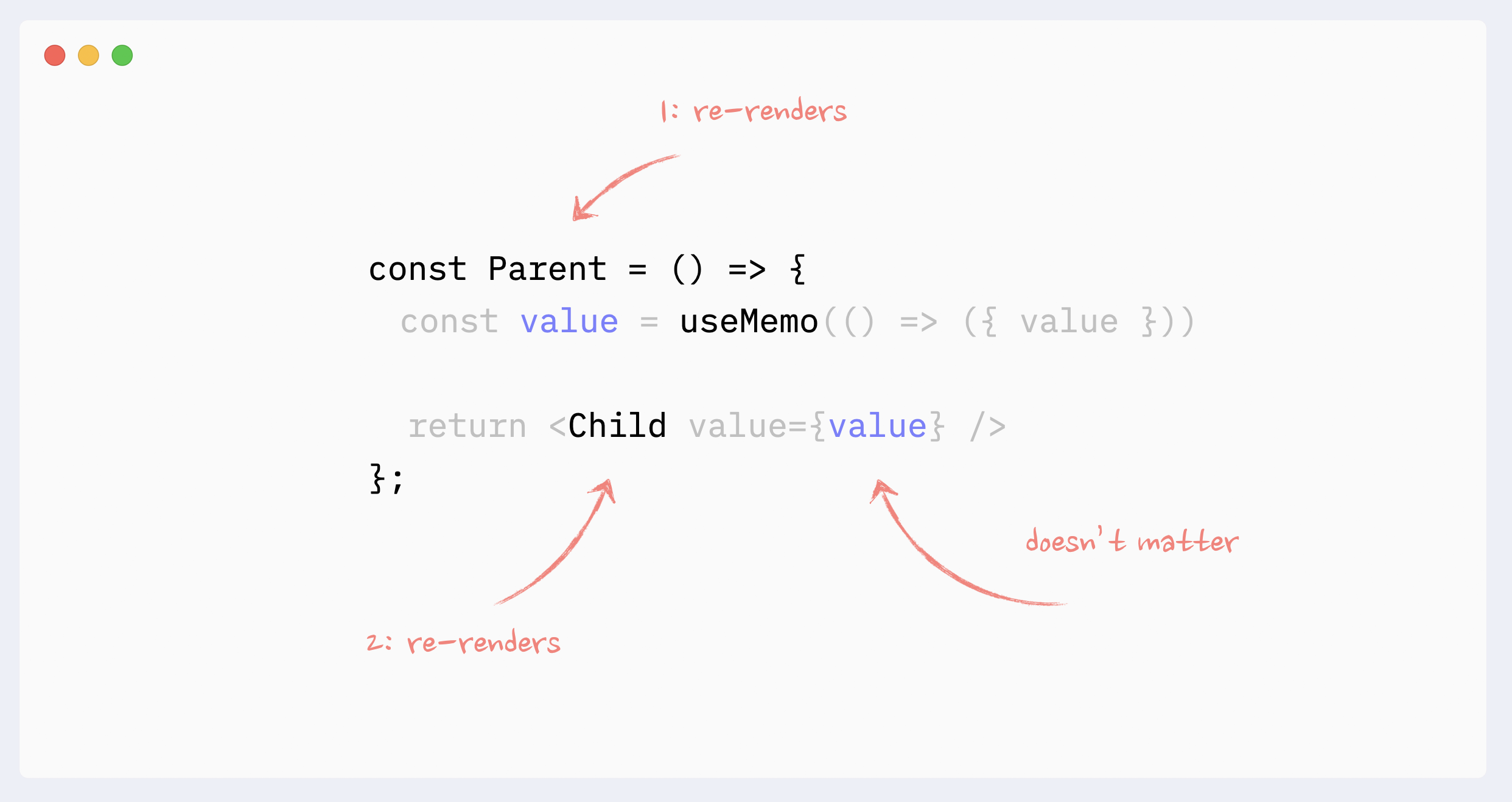

- 🚫 Antipattern: unnecessary useMemo/useCallback on props

Memorizing props by themselves will not prevent re-renders of a child component. If a parent component re-renders, it will trigger re-renders of a child component regardless of its props.

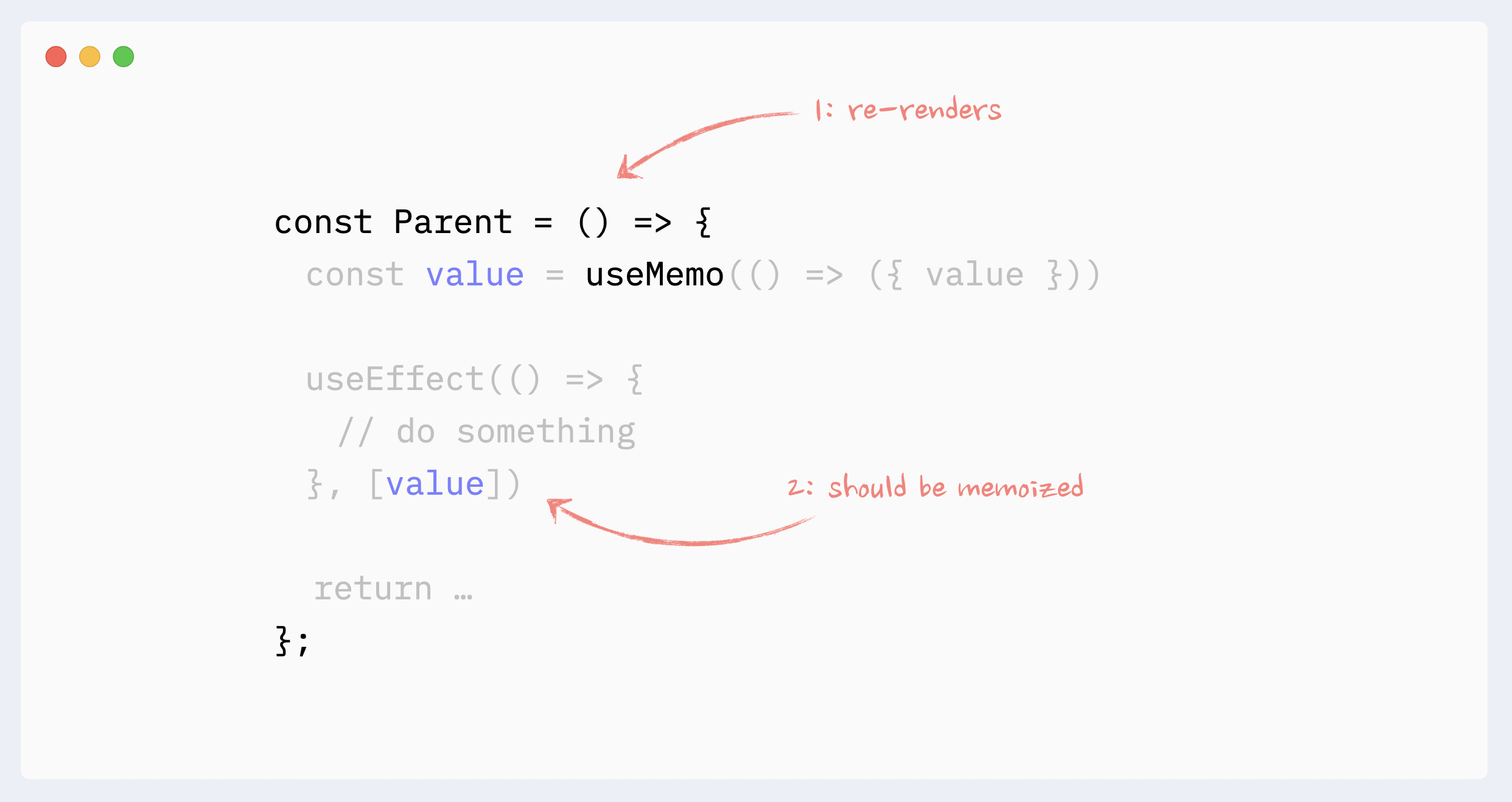

- ✅ Necessary useMemo/useCallback

If a child component is wrapped in React.memo, all props that are not primitive values have to be memorized

If a component uses a non-primitive value as a dependency in hooks like useEffect, useMemo, useCallback, it should be memorized.

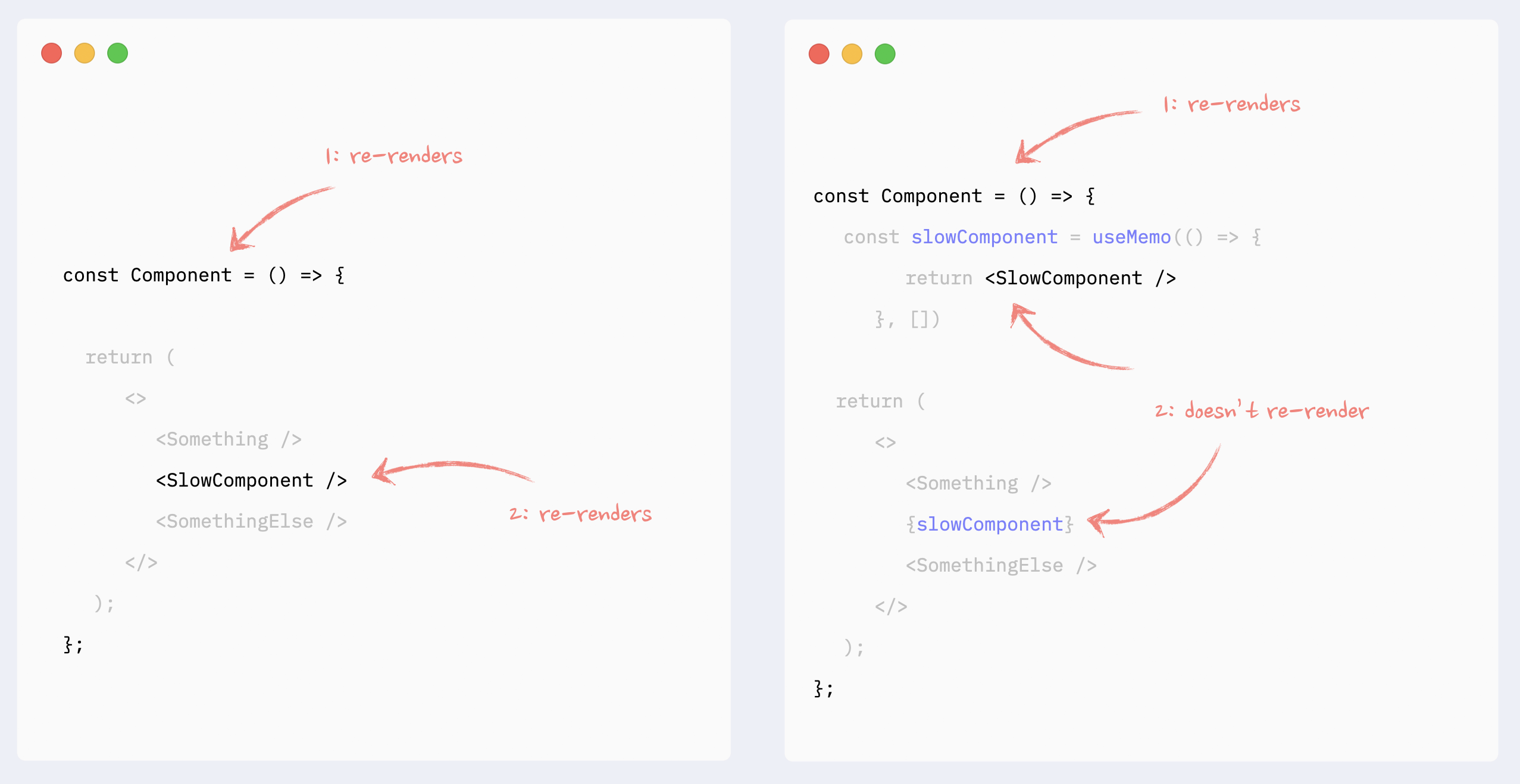

- ✅ useMemo for expensive calculations

One of the use cases for useMemo is to avoid expensive calculations on every re-render.

useMemo has its cost (consumes a bit of memory and makes initial render slightly slower), so it should not be used for every calculation. In React, mounting and updating components will be the most expensive calculation in most cases (unless you’re actually calculating prime numbers, which you shouldn’t do on the frontend anyway).

As a result, the typical use case for useMemo would be to memoize React elements. Usually, parts of an existing render tree or results of a generated render tree, like a map function that returns new elements.

The cost of “pure” JavaScript operations like sorting or filtering an array is usually negligible compared to component updates.

Improving the re-render performance of lists

In addition to the regular render rules and patterns, the key attribute can affect the performance of lists in React.

Important: just providing key attribute will not improve the lists' performance. To prevent re-renders of list elements, you need to wrap them in React.memo and follow all of its best practices.

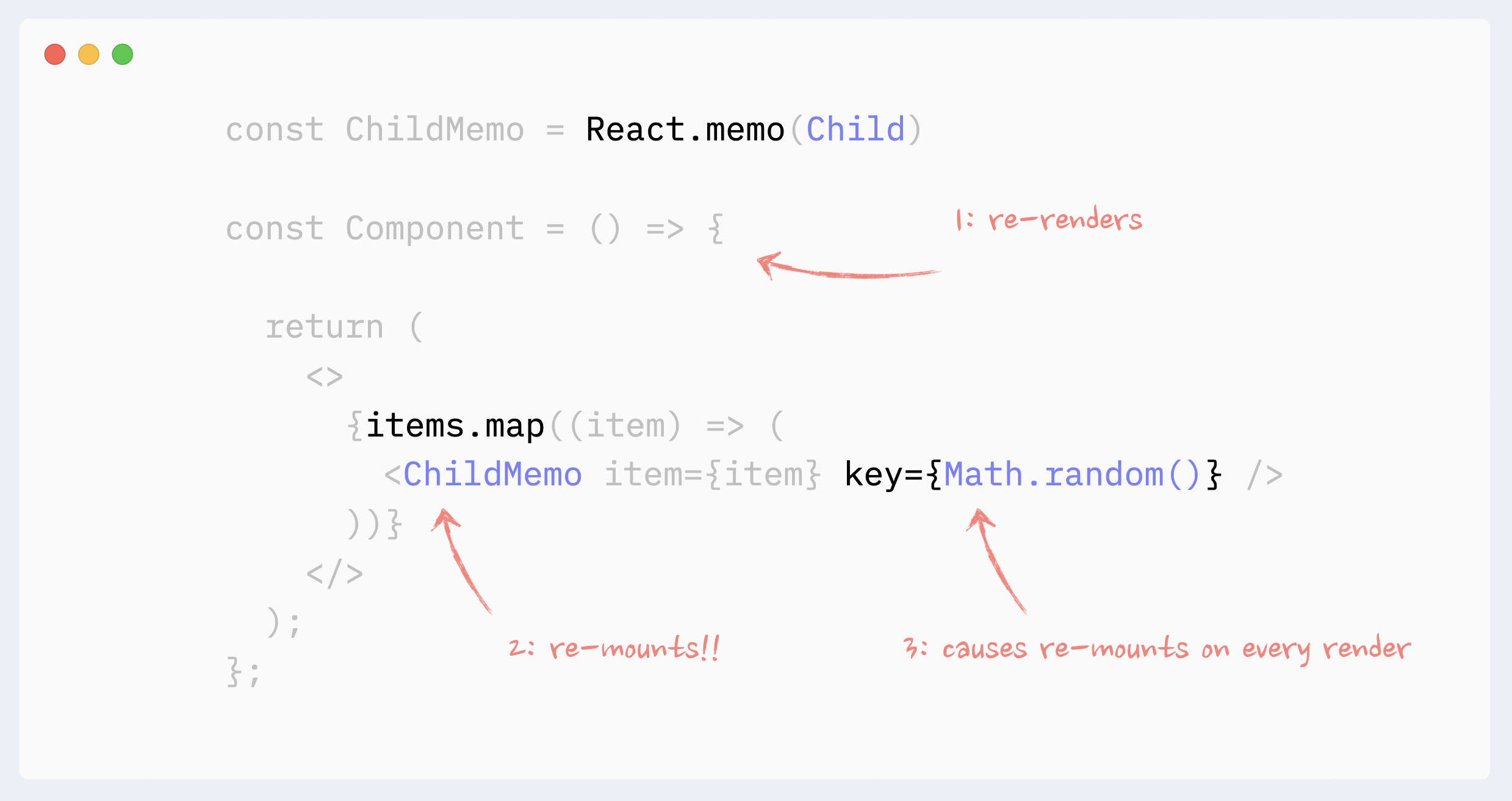

Value in key should be a string that is consistent between re-renders for every element in the list. Typically, item’s id or array’s index is used for that.

It is okay to use array’s index as key, if the list is static, i.e., elements are not added/removed/inserted/re-ordered.

Using an array’s index on dynamic lists can lead to:

bugs if items have state or any uncontrolled elements (like form inputs)

degraded performance if items are wrapped in React.memo

Additional Resources:

Read about keys in detail: React key attribute: best practices for performant lists.

Read about reconciliation: React reconciliation: how it works and why we should care.

Watch about reconciliation: Mastering React reconciliation.

- ⛔️ Antipattern: random value as key in lists

Randomly regenerated values should never be used as values in key attributes in a list. They will lead to React re-mounting items on every re-render, which will lead to (very poor performance of the list, bugs if items have states, or any uncontrolled element such as form inputs)

Preventing re-renders caused by Context

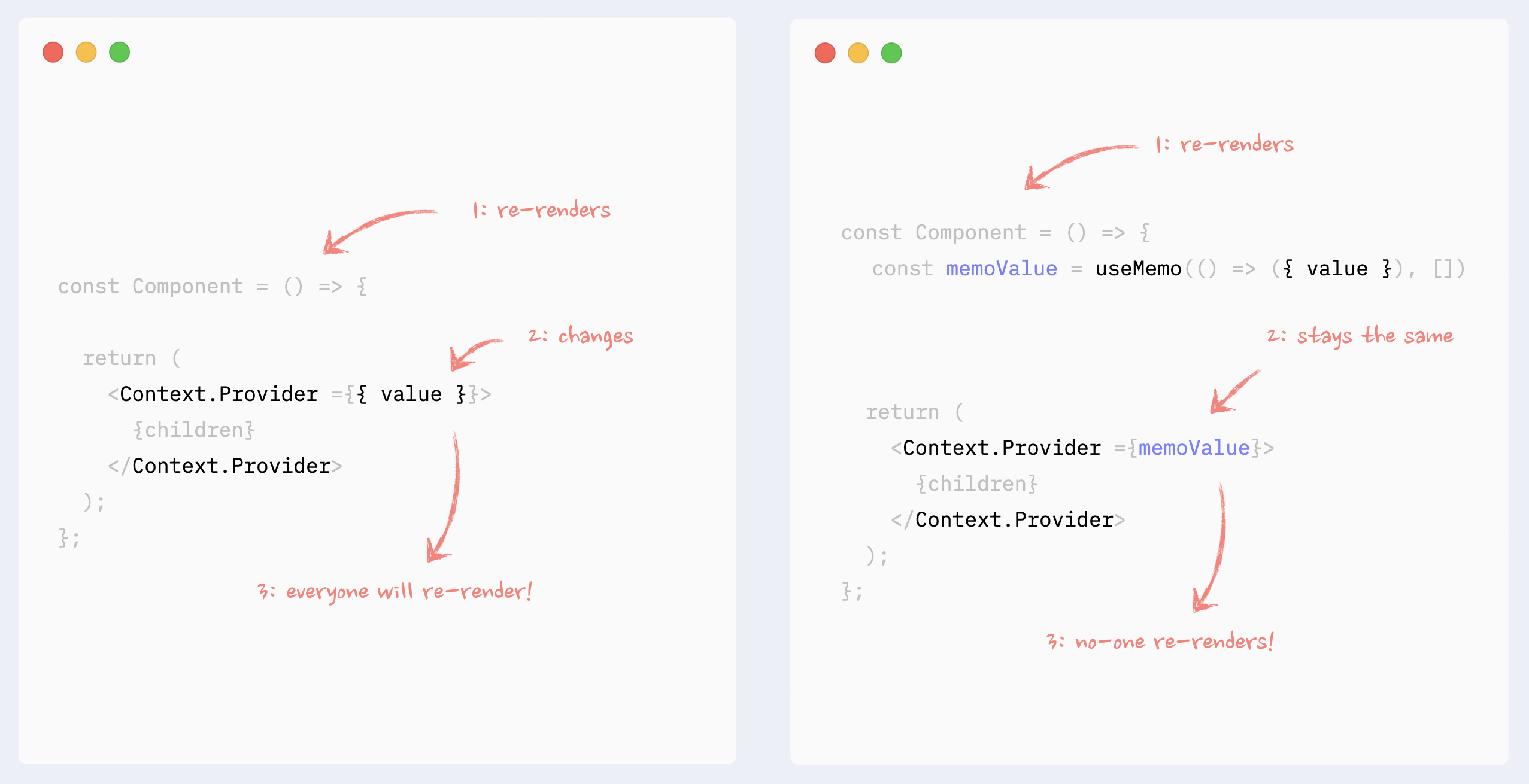

- ✅ Preventing Context re-renders: memorizing Context value

If Context Provider is not placed at the very root of the app, and there is the possibility that it can re-render itself because of changes in its ancestors, its values should be memorized.

See the example in codesandbox.

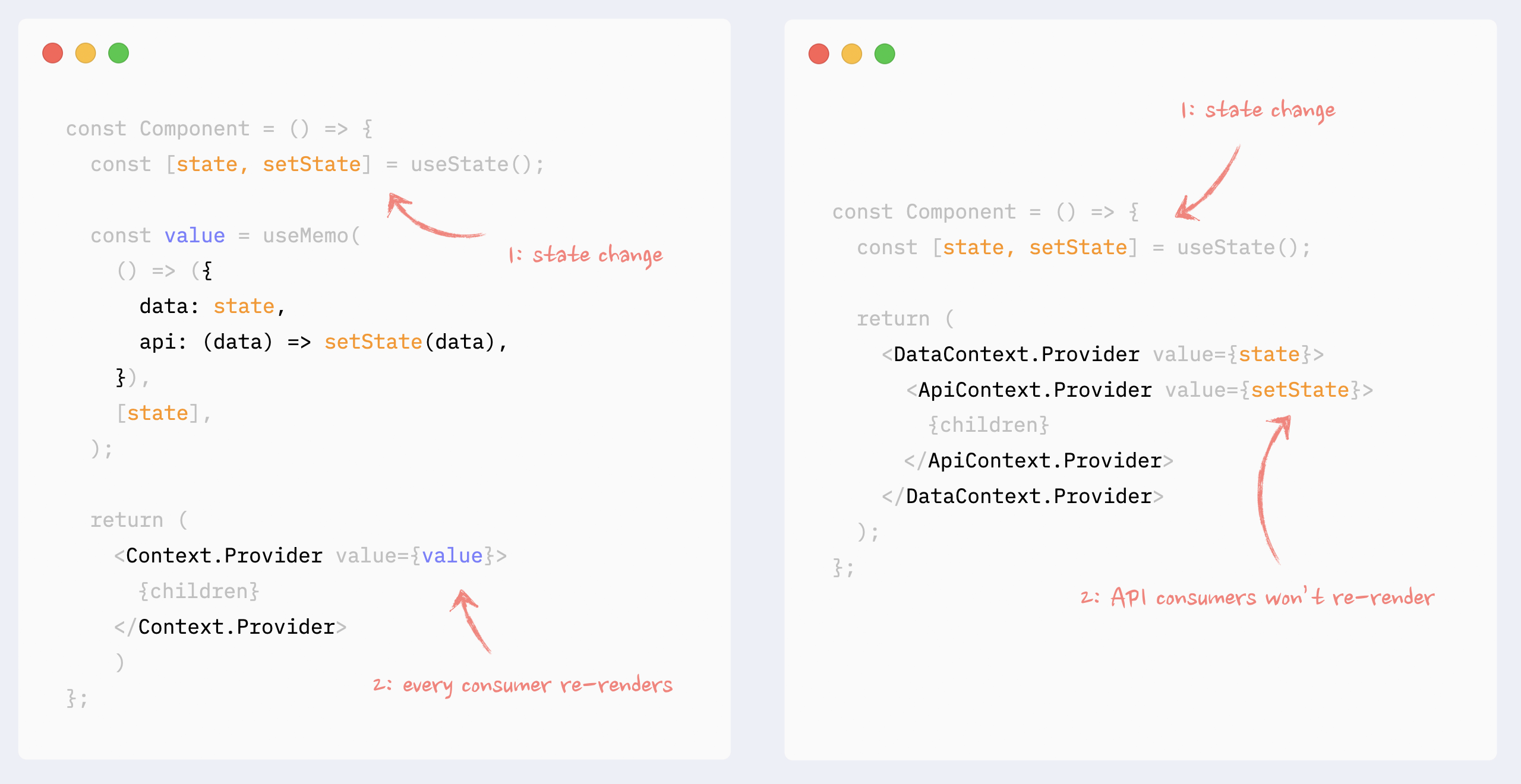

- ✅ Preventing Context re-renders: splitting data and API

If in Context, there is a combination of data and API (getters and setters), they can be split into different Providers under the same component. That way, components that use the API only won’t re-render when the data changes.

Read more about this pattern here: How to write performant React apps with Context.

See the example in codesandbox.

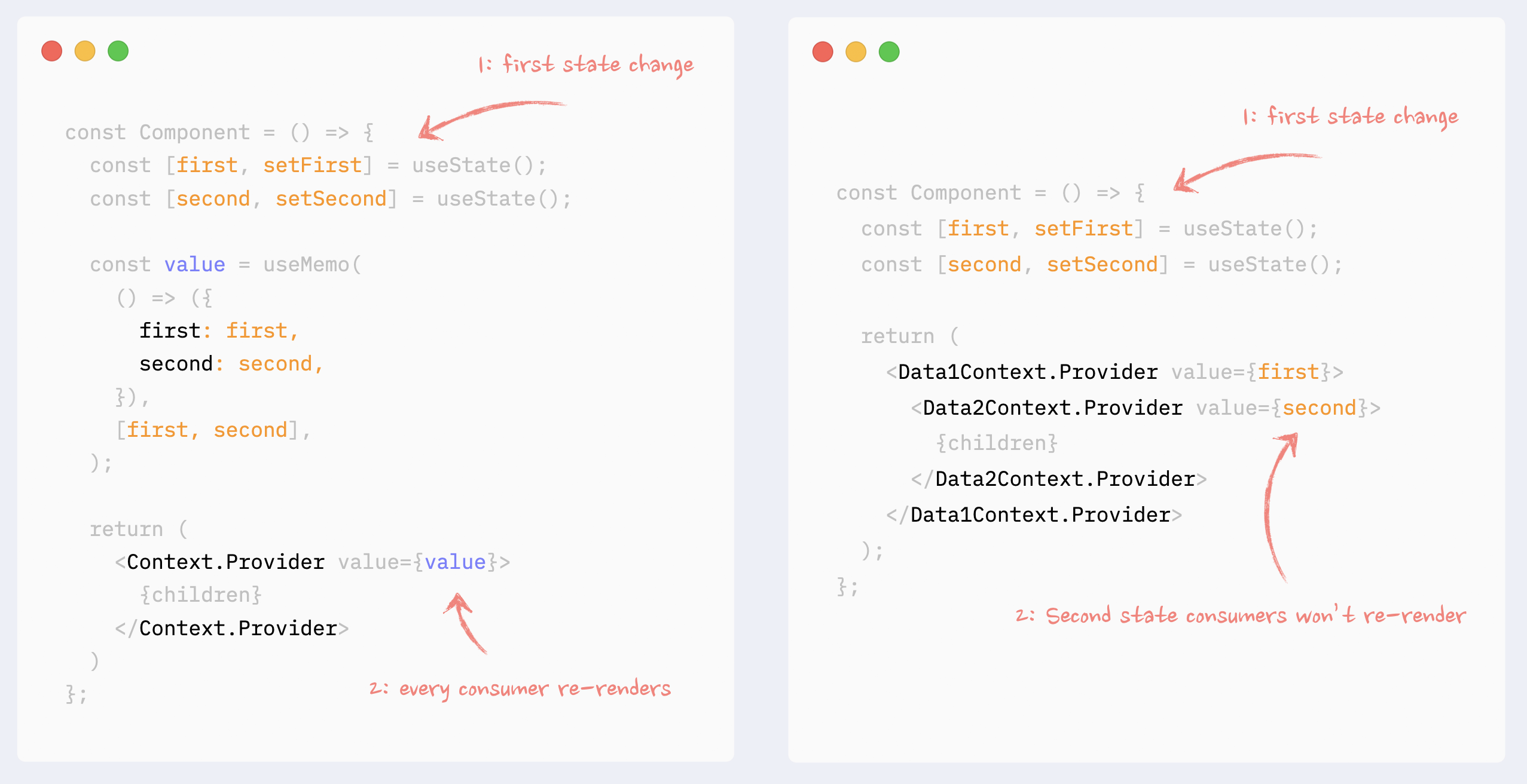

- ✅ Preventing Context re-renders: splitting data into chunks

If Context manages a few dependent data chunks, they can be split into smaller providers under the same provider. That way, only consumers of the changed chunk will re-render.

See the example in codesandbox

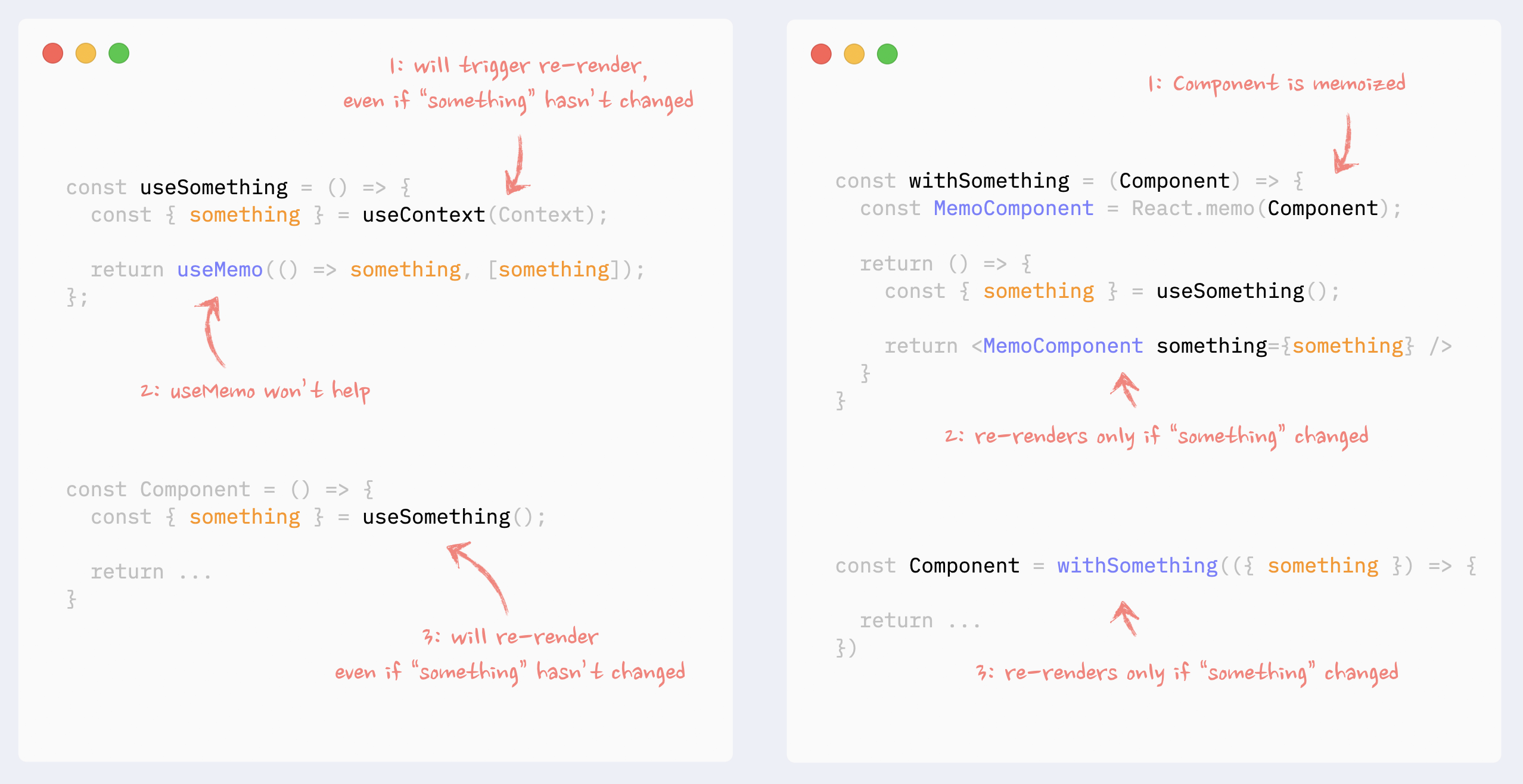

- ✅ Preventing Context re-renders: Context Selectors

There is no way to prevent a component that uses a portion of the Context value from re-rendering, even if the used piece of data hasn’t changed, even with useMemo hook.

Context selectors, however, could be faked with the use of higher-order components and React.memo.

Read more about this pattern here: Higher-Order Components in React Hooks era.

See the example in codesandbox

How Senior Front-end Developers Build Platforms, Not Pages

Most of us start out building components.

We follow the designs, wire up the props, fetch the data, and ship features. But as the app grows, something subtle starts to shift. Files become harder to navigate. Logic feels duplicated. Bugs creep in that don’t seem to belong anywhere. Team overlap. The code doesn’t scale the way we imagined it would.

That’s when you are invited, not always formally, to think like an architect.

I think that is a skill that can be developed at any stage, whether you are a junior, senior, or principal engineer. It doesn’t require a new title; it requires a new lens.

How To Think Like a Front-end Architect (Not Just a Developer)

When we talk about React, we often think about components. Whether it is a simple button, or an entire table, or a complete dashboard screen with charts. That’s how most of us begin. We open a design, see what is on the screen, and try to mirror it with code.

But when we have been building React apps for years, ie. dozens of features, thousands of components, hundreds of edge cases. We realized that React isn’t just really about components. It’s about architecture.

And the way senior developers think about architecture is really different from how juniors think. It’s not that they know some secret design patterns hidden in a book. It’s that…they see the system differently.

- The architect starts before the first component

A junior developer starts with the UI:

Here is the screen. Let’s build the components for it.

A senior developer doesn’t start there. They start with boundaries.

Where does this feature live?

What does it depend on?

Who owns the data, and how far should that data travel?

They see that components are not the first step, but the last. A component is just a surface of something deeper. Underneath lies a flow: data, state, business rules, side effects, and only then, the UI.

This mental shift changes everything. It prevents architecture from collapsing when features grow. This is one of the most important things, in my opinion.

- They keep concerns separate

There is one thing Seniors learn the hard way. That is, when concerns mix, projects do not (kind of).

A junior will happily put an APi call inside the component, mix form validations with UI rendering, sprinkle a bit to fix whatever breaks. It works for a while.

A Senior keeps things apart:

UI layer: just pure presentational components. No logic, no side effects.

State layer: where data is stored, updated, and synchronized.

Domain logic layer: where business rules live, independent of UI.

Why so strict? Because separation buys freedom. If the API changes, only the domain logic changes. If the Ui redesigns, only the components change. Each layer breathes without choking the others.

This is the reason large apps stay alive for years instead of collapsing under their own weight.

- They don’t chase perfection, they make change cheap

A junior wants a perfect architecture. They want to guess the future.

A senior knows the future will surprise us. APIs will break, the design will pivot, and product managers will ask for something entirely new. No amount of foresight can predict all of it.

So instead of aiming for perfection, they design for change.

Clear boundaries so things can be swapped

Simple contracts between layers so code can be replaced

No over-engineering…only abstraction when it is truly earned.

So, I would say, the real skill is not about making the best architecture today. It is in making tomorrow changes painless.

- Data flows like rivers

At its heart, React is about data flowing down and actions following up. But in real apps, these flows multiply. Data comes from APIs, cache, Redux, Contexts, Sockets, and more.

A junior places state whenever it feels convenient.

A Senior asks: Where does this state truly belong?

Does it belong to a single component? Keep it local.

Does it span across a feature? Put it in Context or a slice of state.

Does it belong to the entire app? Centralize it with Redux, Zustand, or another store.

A great analogy would be — they treat data flow like rivers? If it runs downhill in clear streams, the system is healthy. If it leaks, pools, or floods everywhere, the system drowns.

- They organize code by domains, not by blocks

This is one of the most invisible but profound differences.

A junior organizes code by components: buttons, cards, forms

A senior organizes code by domains: users, payments, settings

Why? Because UI is temporary. The same “card“ might exist in ten places, each with a different meaning. But the domain, say “user“, “transaction“, “notification“, those things are permanent.

So instead of a folder called components/, seniors have features/ or domains/. Each domain owns its UI, its state, and its logic. The codebase feels like a map of the product, not a collection of widgets.

That’s why new engineers can work on a Senior’s project and immediately know where things belong.

- They embrace constraints, not options

A common trap for juniors is over-flexibility.

Let’s make this component super reusable. Let’s accept ten props so it can work in all cases.

Seniors go the opposite way: What can we forbid?

A button component should accept just what it needs…nothing more

An API layer should return typed, predictable data…no guessing.

Patterns across the team should be strict — no endless variations.

Constraints reduce mental load. They make the system boring in the best way possible. Every code in the same rhythm. The architecture becomes predictable, and every predictability is power.

- They know architecture is for people, not machines

This is the final, and maybe the most important lesson.

Architecture is not about code at all. It is about people.

Can a new developer understand where to put a file without asking?

Can two engineers work in parallel without stepping on each other’s toes?

Can I feature maintainer, read this code and know what it means without context?

That is the real test of architecture. It’s not always about performance, elegance, or clever abstractions. But whether it helps humans work together without pain.

- Know when to break the rules

Architectural thinking gives us a compass, not a cage.

There will always be moments where we need to shortcut, experiment, hack a fix. That’s okay.

What matters is that we do it consciously — eyes open, knowing why we’re breaking a rule, and where to revisit it later.

It’s not about perfection. It’s about awareness.

The Front-end Architecture Blueprint

When we start, we are focused on screens, components, and making things work. But as features pile up, teams grow, and the codebase ages, everything that once felt fluid becomes fragile. Bugs appear in unrelated parts. A small change breaks 10 other things. And onboarding a new developer feels like dropping them into a jungle without a map.

What we need is a blueprint — a consistent, battle-tested architectural structure that scales with grace.

We won’t be vague or overly academic. Everything here comes from real-world pain, patterns observed in large React codebases, and ideals that made the difference between chaos and clarity.

Let's begin with the bird’s eye view. Most scalable front-end systems can be thought of as layers, each with a purpose.

[ UI Layer ]

[ Behavior Layer ]

[ State Management Layer ]

[ Services Layer ]

[ Utilities / Core Logic ]

Each layer has its own responsibilities and knows only as much as it needs. This separation creates clarity, reduces bugs, and increases developer speed.

- UI Layers: Pure and Presentational

Your UI layer should be predictable and reusable. It knows nothing about where data comes from. It doesn’t fetch. It doesn’t mutate. It just receives props and renders markup:

function UserList({ users, onSearch }) {

return (

<>

<input placeholder="Search..." onChange={(e) => onSearch(e.target.value)} />

<ul>

{users.map(user => (

<li key={user.id}>{user.name}</li>

))}

</ul>

</>

);

}

No useEffect. No useState. Just pure props in, UI out.

Because it doesn’t hold state or side-effects, it’s:

Easy to test

Easy to reason about

Easy to reuse across screens

UI components become visual building blocks, not logic holders.

- Behavior Layers: Where Logic Lives

Now, how does the UI get data? Who tracks the search input? Who do we debounce, or transform, or conditionally load?

That is the Behavior Layer

This layer lives in a custom hook. It’s responsible for:

Local UI state

Effects (but UI-related ones)

Coordination between UI pieces

User interaction logic

function useUserListBehavior() {

const [searchTerm, setSearchTerm] = useState('');

const { data: users = [] } = useQuery(['users', searchTerm], () =>

userService.fetchUsers(searchTerm)

);

return {

users,

onSearch: setSearchTerm

};

}

Notice:

This hook manages state

It can handle debouncing if needed

It does not care about rendering

By isolating behavior, we unlock logic reuse.

We can plug the same behavior into different UIs — maybe a sidebar user picker, or an admin dashboard, or a modal — without duplicating a line of logic.

- State Management Layer: Centralizing Shared State

As your app grows, some states become shared across components — auth info, feature flags, and user settings.

For these, you can use:

React Context (for small apps or low-frequency updates)

Redux / Zustand / Jotai (for predictable and structured global state)

Tip:

Don’t use global state prematurely. Keep things local unless they truly need to be shared.

Also, try to normalize data and treat state updates like reducers — they tell a story.

- Service Layers: Talking To The Outside World

This layer is your interface with APIs, storage, and anything that lives outside your app.

Structure it like:

/services

authService.js

userService.js

notificationService.js

Each service:

Encapsulates API calls

Handles errors, retries, and formatting

Makes it easier to mock in tests

Why it matters:

Your UI shouldn’t care if data comes from REST, GraphQL, or localStorage. The service abstracts that.

- Utilities/ Core Logic Layers

This is where shared helpers, validation functions, formatters, and pure logic reside.

Keep these functions:

Pure

Independent

Tested

Place them in:

/utils

formatDate.js

isEmailValid.js

mergeDeep.js

10 Ways to better organize and design your React application

When building a React application, the way you organize and design your code has a tremendous impact. It can either help you and your team find things easier, make updates quicker, and better handle the app as it grows, or make everything much worse.

It’s the same principle as with buildings. If you have laid the foundations and organized the building well, it can last longer, and its residents will be happy. Otherwise, the building might fall.

Sharky foundation leads to shake results.

- Group components by Domain Responsibility

The organization of files and folders in a React application is crucial for maintaining clarity and manageability. The easier it is to navigate throughout the project, the less time developers spend navigating and wondering where and how to change stuff.

It’s important to structure files not just by technical roles but by their domain responsibilities.

⛔ Avoid grouping components by technical responsibilities.

/src

│ ...

│

└───components

│ │ Header.js

│ │ Footer.js

│ │ ...

└───containers

│ │ InvoicesContainer.js

│ │ PaymentProfilesContainer.js

│ │ ...

└───presenters

│ │ InvoicesPresenter.js

│ │ PaymentProfilesPresenter.js

│ │ ...

│ ...

At first glance, this feels clean — everything in its “bucket“. But as the app grows, so does the complexity. Updating something like the cart feature means bouncing between components/, store/, services/, and utils/. This separation slows you down and creates mental overhead.

✅ Prefer grouping components by domain responsibilities by pages(routes) or modules(domains).

/src

│ ...

│

└───pages --> Actual Pages representing different parts of the app

│ └───billing

│ │ └───invoices

│ │ │ │ index.js

│ │ │ │ ...

│ │ └───payment-profiles

│ │ │ │ index.js

│ │ │ │ ...

│ │ │ ...

│ │ │ index.ts

│ └───login

│ │ index.js

│ │ ...

│ ...

Now, everything related to the cart lives in one folder. You don’t need to mentally piece together the UI, API, and logic — it’s all scoped and colocated.

As you team scales, different squads might handle different domains: authentication, payments, dashboard, etc. With a file-type structure, teams often overlap and touch shared folders, which leads to conflicts and tight coupling. Each team owns everything inside their domain — components, API calls, state management, and styles. This clear boundary makes parallel development much easier. Teams can refactor their own features without worrying about breaking others.

Also, where features are self-contained, refactoring becomes safer. You can confidently update the logic inside features/cart/ knowing it won’t affect unrelated features like auth or dashboard.

You can even write integration tests specific to a feature folder or migrate an entire module to a different project if needed.

- Put components into folders

For complex components, it’s better to organize them into separate folders where you can list their subcomponents.

⛔ Avoid having a single file for each component.

/src

│

└───components

│ │ Accordion.ts

| | Alert.ts

│ │ ...

│

└───...

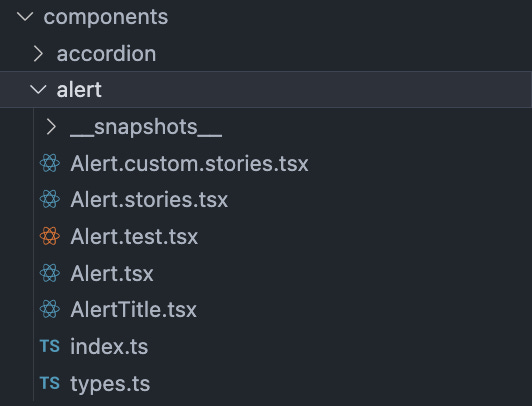

✅ Prefer having a single folder for each component.

/src

│

└───components

│ │ ...

│ └───accordion

│ │ │ index.ts

│ │ │ ...

│ └───alert

│ │ index.ts

│ │ types.ts

│ │ Alert.tsx

│ │ AlertTitle.tsx

│ │ Alert.stories.tsx

│ │ Alert.test.tsx

│ │ ...

│

└───...

- Favor Absolute Paths

Using the right type of paths in your project can simplify navigation and maintenance, especially as your project grows. Refactoring will be much easier.

⛔ Avoid using relative paths, which can become hard to manage and error-prone in large projects.

import { formatDate } from '../../../utils';

✅ Prefer using absolute paths, which improve readability and make refactoring easier.

import { formatDate } from '@common/utils';

- Use a common module

Common modules play a vital role in avoiding redundancy and promoting reusability across your application.

You can store utility methods, constants, calculations, etc., in this common module.

This centralization helps with better management and reuse.

⛔ Avoid spreading common utilities and components across various locations in your project.

✅ Prefer having a dedicated common module for all generic components and utilities used across different pages or modules.

/src

│ ...

│

└───common

│ └───components

│ │ └───dialogs

│ │ │ │ index.js

│ │ └───forms

│ │ │ │ index.js

│ │ │ ...

│ └───hooks

│ │ │ useDialog.js

│ │ │ useForm.js

│ │ │ ...

│ └───utils

│ │ │ ...

└───pages

│ └───billing

│ │ └───invoices

│ │ │ │ index.js

│ │ │ │ ...

│ ...

- Abstracts external libraries and modules

The integration of external libraries or modules requires careful consideration to ensure future flexibility and easier maintenance.

Using 3rd party libraries or components directly in your project can lead to issues if the external APIs change, or you want to replace the components or library with something else. Then, you have to go through all the places it is being used instead of updating them only in a single place.

Wrapping the 3rd party or module in a custom component allows you to maintain a consistent API within your application and makes it easier to replace the module in the future if needed.

⛔ Avoid direct use of 3rd party components or libraries in your project.

// XYZ_Component.ts (file 1)

import { Button } from 'react-bootstrap';

// ABC_Component.ts (file 2)

import { Button } from 'react-bootstrap';

✅ Prefer wrapping external modules or components in a custom component.

// XYZ_Component.ts (file 1)

import { Button } from '@components/ui';

// ABC_Component.ts (file 2)

import { Button } from '@components/ui';

- Manage dependencies between modules/pages

Managing dependencies widely by centralizing commonly used resources in a shared common module can significantly enhance the code’s manageability and reusability.

If something is used more than once across two or more pages and modules, consider moving it to the common module.

Storing shared components or utilities in a common module eliminates the need to duplicate code across different parts of the application, making the codebase leaner and easier to maintain.

It also makes clear dependencies per module and page.

- Keep things as close as where they are used (LoB)

The easier and faster for a developer to find a piece of code, the better.

The principle of Locality of Behaviour (LoB) suggests organizing the codebase so that components, functions, and resources are located near where they are used within an application. This strategy promotes a modular architecture, where each part of the system is self-contained.

This improves readability and maintainability. When developers work on a feature, they have all the related code in proximity, which makes it easier to understand and modify. It also reduces the cognitive load of tracing through distant files and modules.

/src

│ ...

│

└───common

│ └───components

│ │ │ ...

│ └───hooks

│ │ │ ...

│ └───utils

│ │ │ ...

└───pages

│ └───billing

│ │ └───invoices

│ │ │ │ index.js

│ │ │ │ ...

│ │ └───payment-profiles

│ │ │ │ index.js

│ │ │ │ ...

│ │ └───hooks

│ │ │ │ index.js

│ │ │ │ useInvoices.js

│ │ │ │ usePaymentProfiles.js

│ │ │ │ ...

│ │ └───utils

│ │ │ │ index.js

│ │ │ │ formatAmount.js

│ │ │ │ ...

│ ...

- Be careful with utility functions

Utility functions typically handle reusable snippets of code that aren’t tied to the business rules and the application logic. They would rather provide general assistance across the system.

Utility functions should remain pure and purpose-specific, focusing on general tasks like formatting dates, converting data types, etc. Mixing them with business logic, like how data is processed or business-specific decisions, can make these utilities overly complex and less reusable.

Also, business logic and rules change more often than utility functions, so by separating them, you will improve the overall maintainability of the code.

⛔ Avoid adding business logic to utils.

✅ Prefer extracting business logic into separate functions.

- Be careful with business logic

Integrating business logic directly into UI components can lead to problems like harder-to-test code and poor separation of concerns. This can also lead to bulky components that are difficult to manage and update.

In React, custom hooks are a great tool for abstracting business logic from components. By using hooks, you can encapsulate business logic and keep your UI clean and focused on rendering.

This separation not only makes your component more modular and easier to manage but also enhances reusability and maintainability.

⛔ Avoid mixing business logic with UI.

✅ Prefer separating business logic from UI. Use custom hooks.

- Pin Dependencies

When managing a JavaScript project, your package.json plays a crucial role. It defines your project’s dependencies - the external packages your project relies on to function. Pinning these dependencies refers to specifying exact versions of these packages rather than allowing version ranges.

Pinning dependencies ensures that everyone working on the project, as well as the production environment, uses the exact same version of each package, eliminating discrepancies that might occur due to minor updates and patches.

⛔ Avoid using version ranges in your package.json.

{

"dependencies": {

"express": "^4.17.1",

"react": ">=16.8.0"

}

}

✅ Prefer using exact versions in your package.json.

{

"dependencies": {

"express": "4.17.1",

"react": "16.8.0"

}

}

https://nagibaba.medium.com/ternary-design-system-for-scalable-react-applications-1fad84e369ab

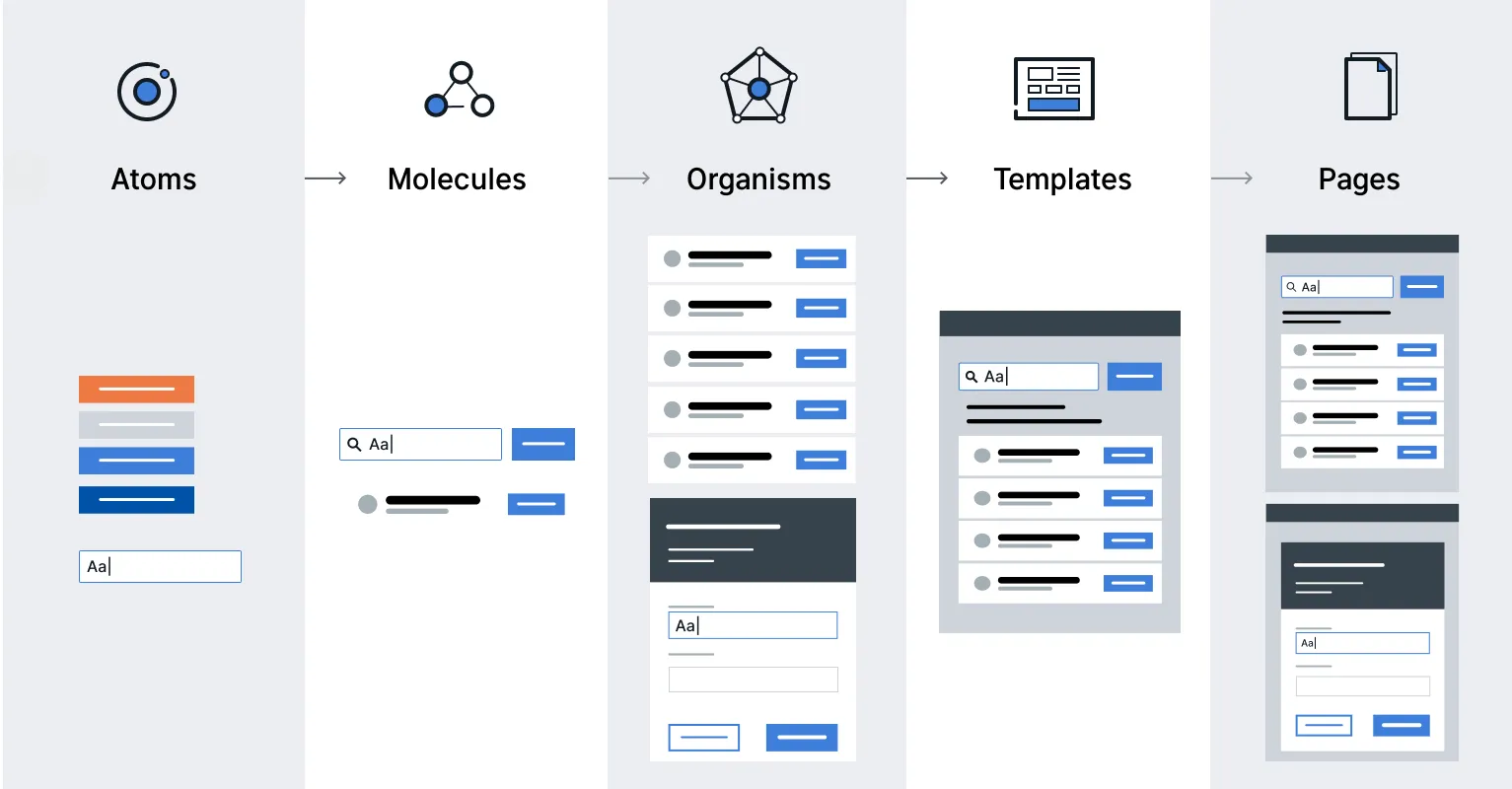

Use the Atomic Design Pattern to structure your React application

As we build scalable applications in React, we often encounter challenges in managing the growing complexity of component structures. The Atomic Design Pattern has emerged as a powerful methodology for organizing and structuring applications. This pattern, inspired by chemistry, breaks down the interface into multiple fundamental building blocks, promoting a more modular and scalable approach to application design. It serves as a powerful methodology to enhance the readability, maintainability, and flexibility of our application code.

The Atomic Design Pattern was introduced by Brad Frost and Dave Olsen and is based on the idea that a design system should be broken down into its smallest parts, which are used to build up increasingly complex and reusable components. The goal is not to create a strict hierarchy but rather to provide a mental model to better understand and create user interfaces.

The Atomic Design methodology breaks down design into 5 distinct levels:

Atoms: These are the basic building blocks of your application, like an input field, a button, or a form label. In React, these would be represented as individual components. They serve as foundational elements that are not exactly useful on their own but are fundamental for building more complex components.

Molecules: Molecules are groups of atoms that are combined together to form a functional unit. For example, a form might be a molecule that includes atoms like labels, an input field, and a submit button.

Organisms: Organisms are relatively complex UI components composed of groups of molecules and/or atoms. These are larger sections of an interface, like a header, footer, or navigation bar, and therefore can have their own states and functionality.

Templates: Templates are page-level objects that place components into a layout and articulate the design’s underlying content structure. They usually consist of groups of organisms, representing a complete layout.

Pages: Pages are specific instances of templates that show what a UI looks like with real representative content in place. These pages serve as ecosystems that display different template renders.

To implement Atomic Design in a React application, we can consider the following key points:

Component Categorization: Organize the components into atoms, molecules, organisms, templates, and pages. This categorization should be reflected in our project’s file structure.

State Management: We also need to decide how the state should be managed across different levels of components. Atoms and molecules might not hold a state, while organisms and templates might need to.

Documentation: It’s important to have thorough documentation of its components and usage. This can be facilitated by tools like Storybook, as this will allow us to create a living style guide.

Let’s see a very simple example of how a React application built on the atomic design principle would look structurally like.

import React from 'react';

const noop = () => {};

// Atoms

const Button = ({ onClick, children, type }) => <button type={type} onClick={onClick}>{children}</button>;

const Label = ({ htmlFor, children }) => <label htmlFor={htmlFor}>{children}</label>;

const Input = ({ id, type, onChange, value = "" }) => <input id={id} type={type} onChange={onChange} value={value} />;

const Search = ({ onChange }) => <input type="search" onChange={onChange} />;

const NavMenu = ({ items }) => <ul>{items.map((item) => <li>{item}</li>)}</ul>;

// Molecules

const Form = ({ onSubmit }) => (

<form onSubmit={onSubmit}>

<Label htmlFor="email">Email:</Label>

<Input id="email" type="email" onChange={noop} />

<Button type="submit" onClick={noop}>Submit</Button>

</form>

);

// Organisms

const Header = () => (

<header>

<Search onChange={noop} />

<NavMenu items={[]} />

</header>

);

const Content = ({ children }) => (

<main>

{children}

<Form onSubmit={noop} />

</main>

);

// Templates

const MainTemplate = ({ children }) => (

<>

<Header />

<Content>{children}</Content>

</>

);

// Pages

const HomePage = () => (

<MainTemplate>

<h2>My Form</h2>

<p>This is a basic example demonstrating Atomic Design in React.</p>

</MainTemplate>

);

Why Atomic Design?

The Atomic Design Pattern aligns perfectly with React’s component-based architecture. It allows us to:

Promote reusability: By breaking down the interface into the smallest parts, it becomes easier to reuse components and leverage modular composition across different parts of an application or even across different projects.

Ensure Consistency: Atomic Design helps maintain UI consistency, which is crucial for user experience and brand identity.

Facilitates Maintenance: When components are well-organized, it becomes much simpler to update or maintain them over time.

Improve Collaboration: A shared design language based on Atomic Design Principles can enhance communications, usage, and contributions since it is easier to understand the codebase.

Promotes Code Quality: As we create a sub-ecosystem for each component feature, each component or service has its isolated environment, including styles, actions, and tests. This isolation makes testing more effective and ensures consistent code quality.

While Atomic Design offers many benefits, we would want to ensure that we implement this principle to our advantage and not over-engineer. It can be easy to over-abstract components, which can lead to unnecessary complexity. Therefore, we should also keep an eye on performance implications when breaking down components into smaller pieces to reap the full benefits of this technique.

React Patterns

HOC Pattern

Within our application, we often want to use the same logic in multiple components. This logic can include applying a certain styling to components, requiring authorization, or adding a global state.

One way of being able to reuse the same logic in multiple components is by using the higher-order component pattern. This pattern allows us to reuse component logic throughout our application.

A Higher Order Component (HOC) is a component that receives another component. The HOC contains certain logic that we want to apply to the component that we pass as a parameter. After applying that logic, the HOC returns the element with the additional logic.

Say that we always wanted to add a certain styling to multiple components in our application. Instead of creating a style object each time locally, we can simply create an HOC that adds the style objects to the component that we pass to it

function withStyles(Component) {

return props => {

const style = { padding: '0.2rem', margin: '1rem' }

return <Component style={style} {...props} />

}

}

const Button = () = <button>Click me!</button>

const Text = () => <p>Hello World!</p>

const StyledButton = withStyles(Button)

const StyledText = withStyles(Text)

We just created a StyledButton and StyledText component, which are the modified versions of the Button and Text components. They now both contain the style that got added in the withStyles HOC!

We can also compose multiple Higher Order Components. Let’s say that we also want to add functionality that shows a Hovering! text box when the user hovers over the DogImages list.

import React from "react";

import withLoader from "./withLoader";

import withHover from "./withHover";

function DogImages(props) {

return (

<div {...props}>

{props.hovering && <div id="hover">Hovering!</div>}

<div id="list">

{props.data.message.map((dog, index) => (

<img src={dog} alt="Dog" key={index} />

))}

</div>

</div>

);

}

export default withHover(

withLoader(DogImages, "https://dog.ceo/api/breed/labrador/images/random/6")

);

A well-known library used for composing HOCs is recompose. Since HOCs can largely be replaced by React Hooks, the recompose library is no longer maintained, thus won’t be covered in this article.

Generally speaking, React Hooks don’t replace the HOC pattern.

“In most cases, Hooks will be sufficient and can help reduce nesting in your tree.” - React Docs

As the React docs tell us, using Hooks can reduce the depth of the component tree. Using the HOC pattern, it’s easy to end up with a deeply nested component tree.

<withAuth>

<withLayout>

<withLogging>

<Component />

</withLogging>

</withLayout>

</withAuth>

By adding a Hook to the component directly, we no longer have to wrap components.

Best use-cases for an HOC:

The same, uncustomized behavior needs to be used by many components throughout the application.

The component can work standalone, without the added custom logic.

Best use-cases for Hooks:

The behavior has to be customized for each component that uses it.

The behavior is not spread throughout the application; only one or a few components use the behavior.

The behavior adds many properties to the component

Render Props Pattern

In the section on Higher Order Components, we saw that being able to reuse component logic can be very convenient if multiple components need access to the same data or contain the same logic.

Another way of making components very reusable is by using the render prop pattern. A render prop is a prop on a component whose value is a function that returns a JSX element. The component itself does not render anything besides the render prop. Instead, the component simply calls the render prop, instead of implementing its own rendering logic.

Imagine that we have a Title component. In this case, the Title component shouldn’t do anything besides rendering the value that we pass. We can use a render prop for this! Let’s pass the value that we want the Title component to render to the render prop.

<Title render={() => <h1>I am a render prop!</h1>} />

Within the Title component, we can render this data by returning the invoked render prop!

const Title = (props) => props.render();

To the Component element, we have to pass a prop called render, which is a function that returns a React element.

import React from "react";

import { render } from "react-dom";

import "./styles.css";

const Title = (props) => props.render();

render(

<div className="App">

<Title

render={() => (

<h1>

<span role="img" aria-label="emoji">

✨

</span>

I am a render prop!{" "}

<span role="img" aria-label="emoji">

✨

</span>

</h1>

)}

/>

</div>,

document.getElementById("root")

);

Pros

Sharing logic and data among several components is easy with the render props pattern. Components can be made very reusable by using a render or children prop. Although the Higher Order Component pattern mainly solves the same issues, namely reusability and sharing data, the render props pattern solves some of the issues we could encounter by using the HOC pattern.

The issue of naming collisions that we can run into by using the HOC pattern no longer applies by using the render props pattern, since we don’t automatically merge props. We explicitly pass the props down to the child components, with the value provided by the parent component.

Since we explicitly pass props, we solve the HOC’s implicit props issue. The props that should get passed down to the element are all visible in the render prop’s arguments list. This way, we know exactly where certain props come from.

We can separate our app’s logic from rendering components through render props. The stateful component that receives a render prop can pass the data onto stateless components, which merely render the data.

Cons

The issues that we tried to solve with render props have largely been replaced by React Hooks. As Hooks changed the way we can add reusability and data sharing to components, they can replace the render props pattern in many cases.

Since we can’t add lifecycle methods to a render prop, we can only use it on components that don’t need to alter the data they receive.

Note (React 18+): The render props pattern is now largely supplanted by Hooks in React’s best practices. Render props often resulted in deeply nested JSX “callback hell”—for example, nesting multiple

<Mutation>components to get multiple pieces of data. Modern libraries like Apollo Client now provide Hooks (e.g.,useMutation,useQuery) that allow you to fetch or compute needed data inside the component, eliminating the need for wrapper components. Hooks don’t create new component boundaries, so state can be shared more directly and the React Compiler can statically analyze the code more easily. While render props are still possible, if you find yourself writing a component whose sole purpose is to callprops.render()or use children-as-a-function, ask if a custom Hook could achieve the same result more directly.

Container/Presentational Pattern

In React, one way to enforce separation of concerns is by using the Container/Presentational pattern. With this pattern, we can separate the view from the application logic.

Let’s say we want to create an application that fetches 6 dog images and renders these images on the screen.

Ideally, we want to enforce separation of concerns by separating this process into two parts:

Presentational Components: Components that care about how data is shown to the user. In this example, that’s the rendering of the list of dog images.

Container Components: Components that care about what data is shown to the user. In this example, that’s fetching the dog images.

A presentational component receives its data through props. Its primary function is to simply display the data it receives the way we want it to, including styles, without modifying that data.

The primary function of container components is to pass data to presentational components, which they contain. Container components themselves usually don’t render any other components besides the presentational components that care about their data. Since they don’t render anything themselves, they usually do not contain any styling either.

Combining these two components makes it possible to separate the handling of application logic from the view.

In many cases, the Container/Presentational pattern can be replaced with React Hooks. The introduction of Hooks made it easy for developers to add statefulness without needing a container component to provide that state. Hooks make it easy to separate logic and view in a component, just like the Container/Presentational pattern. It saves us the extra layer that was necessary in order to wrap the presentational component within the container component.

Note (React 18+): Modern React strongly favors Hooks over container components for separating logic from views. Custom Hooks can replace class-based containers entirely—for example, a useDogImages The hook can fetch data using useState and useEffect, then any component can simply call const dogs = useDogImages() to get the data. This achieves the same separation of concerns (data fetching vs UI) with less boilerplate and no wrapper component. This Hook-based approach is also friendly to React’s upcoming optimizations—the React Compiler can better optimize functional components and Hooks than class lifecycles.

Hooks Pattern

React 16.8 introduced a new feature called Hooks. Hooks make it possible to use React state and lifecycle methods without having to use an ES2015 class component.

Although Hooks are not necessarily a design pattern, Hooks play a very important role in your application design. Many traditional design patterns can be replaced by Hooks.

Custom Hooks

Besides the built-in hooks that React provides (useState, useEffect, useReducer, useRef, useContext, useMemo, useImperativeHandle, useLayoutEffect, useDebugValue, useCallback), we can easily create our own custom hooks.

You may have noticed that all hooks start with use. It’s important to start your hooks with use in order for React to check if it violates the rules of hooks

Let’s say we want to keep track of certain keys the user may press when writing the input. Our custom hook should be able to receive the key we want to target as its argument.

function useKeyPress(targetKey) {}

We want to add a keydown and keyup event listener to the key that the user passed as an argument. If the user pressed that key, meaning the keydown event gets triggered, the state within the hook should toggle to true. Otherwise, when the user stops pressing that button, the keyup event gets triggered and the state toggles to false.

function useKeyPress(targetKey) {

const [keyPressed, setKeyPressed] = React.useState(false);

function handleDown({ key }) {

if (key === targetKey) {

setKeyPressed(true);

}

}

function handleUp({ key }) {

if (key === targetKey) {

setKeyPressed(false);

}

}

React.useEffect(() => {

window.addEventListener("keydown", handleDown);

window.addEventListener("keyup", handleUp);

return () => {

window.removeEventListener("keydown", handleDown);

window.removeEventListener("keyup", handleUp);

};

}, []);

return keyPressed;

}

Instead of keeping the key press logic local to the Input component, we can now reuse the useKeyPress hook throughout multiple components, without having to rewrite the same logic over and over.

Another great advantage of Hooks is that the community can build and share hooks. We just wrote the useKeyPress hook ourselves, but that actually wasn’t necessary at all! The hooks was already built by someone else and ready to use in our application if we just installed it!

Here are some websites that list all the hooks built by the community, and ready to use in your application.

Compound Pattern

In our application, we often have components that belong to each other. They’re dependent on each other through the shared state, and share logic together. You often see this with components like select, dropdown components or menu items. The compound component pattern allows you to create components that all work together to perform a task.

Let’s look at an example: we have a list of squirrel images! Besides just showing squirrel images, we want to add a button that makes it possible for the user to edit or delete the image. We can implement a FlyOut A component that shows a list when the user toggles the component.

Within a FlyOut component, we essentially have three things:

The

FlyOutwrapper, which contains the toggle button and the listThe

Togglebutton, which toggles theListThe

List, which contains the list of menu items

Using the Compound component pattern with React’s Context API is perfect for this example

First, let’s create the FlyOut component. This component keeps the state, and returns a FlyOutProvider with the value of the toggle to all the children it receives.

const FlyOutContext = createContext();

function FlyOut(props) {

const [open, toggle] = useState(false);

return (

<FlyOutContext.Provider value={{ open, toggle }}>

{props.children}

</FlyOutContext.Provider>

);

}

We now have a stateful FlyOut component that can pass the value of open and toggle to its children!

Let’s create the Toggle component. This component simply renders the component on which the user can click in order to toggle the menu.

function Toggle() {

const { open, toggle } = useContext(FlyOutContext);

return (

<div onClick={() => toggle(!open)}>

<Icon />

</div>

);

}

In order to actually give Toggle access to the FlyOutContext provider, we need to render it as a child component of FlyOut! We could simply render this as a child component. However, we can also make the Toggle component, a property of the FlyOut component!

const FlyOutContext = createContext();

function FlyOut(props) {

const [open, toggle] = useState(false);

return (

<FlyOutContext.Provider value={{ open, toggle }}>

{props.children}

</FlyOutContext.Provider>

);

}

function Toggle() {

const { open, toggle } = useContext(FlyOutContext);

return (

<div onClick={() => toggle(!open)}>

<Icon />

</div>

);

}

FlyOut.Toggle = Toggle;

This means that if we ever want to use the FlyOut component in any file, we only have to import FlyOut!

import React from "react";

import { FlyOut } from "./FlyOut";

export default function FlyoutMenu() {

return (

<FlyOut>

<FlyOut.Toggle />

</FlyOut>

);

}

Just a toggle is not enough. We also need to have a List with list items, which open and close based on the value of open.

function List({ children }) {

const { open } = React.useContext(FlyOutContext);

return open && <ul>{children}</ul>;

}

function Item({ children }) {

return <li>{children}</li>;

}

The List component renders its children based on whether the value of open is true or false. Let’s make List and Item a property of the FlyOut component, just like we did with the Toggle component.

const FlyOutContext = createContext();

function FlyOut(props) {

const [open, toggle] = useState(false);

return (

<FlyOutContext.Provider value={{ open, toggle }}>

{props.children}

</FlyOutContext.Provider>

);

}

function Toggle() {

const { open, toggle } = useContext(FlyOutContext);

return (

<div onClick={() => toggle(!open)}>

<Icon />

</div>

);

}

function List({ children }) {

const { open } = useContext(FlyOutContext);

return open && <ul>{children}</ul>;

}

function Item({ children }) {

return <li>{children}</li>;

}

FlyOut.Toggle = Toggle;

FlyOut.List = List;

FlyOut.Item = Item;

We can now use them as properties on the FlyOut component! In this case, we want to show two options to the user: Edit and Delete. Let’s create a FlyOut.List that renders two FlyOut.Item components, one for the Edit option, and one for the Delete option.

import React from "react";

import { FlyOut } from "./FlyOut";

export default function FlyoutMenu() {

return (

<FlyOut>

<FlyOut.Toggle />

<FlyOut.List>

<FlyOut.Item>Edit</FlyOut.Item>

<FlyOut.Item>Delete</FlyOut.Item>

</FlyOut.List>

</FlyOut>

);

}

Perfect! We just created an entire FlyOut component without adding any state in the FlyOutMenu itself!

The compound pattern is great when you’re building a component library. You’ll often see this pattern when using UI libraries like Semantic UI.

Pros