🔥 AI Handbook

I am a developer creating open-source projects and writing about web development, side projects, and productivity.

AI Mindset

Tech hiring in the AI era: Why everyone’s at zero

How AI amplifies developers instead of replacing them, and why this is the React moment all over again.

Headlines scream about tech layoffs at Netflix, Amazon, and Microsoft, but what’s happening beneath the surface tells a different story. In this episode, Taylor Desseyn, VP of Global Community at Torc, joins the We Love Open Source podcast to share why tech hiring isn’t actually dead, how AI changes team composition without replacing developers, and why everyone’s on the same playing field when it comes to learning these new tools.

https://www.youtube.com/watch?v=KvflH9NMaiU

Taylor reframes the tech hiring narrative by looking beyond big tech. While FAANG companies (or MANGO, as he’s heard it called now) make layoff headlines, the startup ecosystem is thriving. AI-specific tech startup funding is through the roof. Torc and Randstad are both growing, driven largely by the AI boom. The reality beneath the headlines shows tech hiring evolving, not dying.

AI won’t replace developers, but it will change headcount dynamics. Taylor frames it simply: Think of an engineer as 1.0. With AI tools like Claude or Cursor, that engineer becomes 1.25, 1.5, or even 1.75. A team that previously needed 50 engineers might now need 25 or 20 really good engineers who know how to use AI effectively. It’s not about replacement, it’s about amplification.

For developers worried about AI taking their jobs, Taylor draws a parallel to earlier shifts. He’s been recruiting since 2011, through .NET MVC replacing VB.NET and React’s emergence. AI is the same kind of inflection point. The key insight: Everyone’s at zero right now. Senior, staff, and principal engineers are just getting started with Claude alongside junior developers. It’s a level playing field, but only if you engage.

Key takeaways

Tech hiring is evolving, not dying: Startup ecosystem and AI-specific funding are thriving while big tech layoffs make headlines. Look beneath the surface to see where growth is actually happening.

AI amplifies engineers rather than replacing them: Teams will hire fewer developers but those developers become 1.5x or 1.75x more productive with AI tools. The focus shifts to hiring engineers who know how to use Claude and Cursor effectively.

Everyone’s at zero with AI right now: Senior and junior developers alike are just getting started. Find a mentor, document your learning publicly, and be known for how you’re using AI.

Taylor’s message is clear: This is the React moment for AI. You can ignore it or you can learn it, but everyone who’s learning is starting from the same place. The opportunity is there for developers who take it seriously.

https://allthingsopen.org/articles/tech-hiring-ai-era-everyone-at-zero?ref=dailydev

https://leadershipinchange.com/p/stop-learning-ai-tools

https://substack.com/home/post/p-188178090?source=queue

Most Important AI Concepts

LLMs

https://substack.com/home/post/p-188649002

What is Generative AI?

While today’s generative models are built upon a decade of progress, 2022 was the year when they triggered an “Aha!” moment for most. Generative AI is a subfield of machine learning. It involves training artificial intelligence models on large volumes of real-world data to generate new content (text, images, code, etc.) that resembles human creation.

This may have been a mouthful. Let’s clarify these terms first before we jump into LLMs. Here’s a plain-English map of those first words you’ll see.

AI is the big umbrella: getting computers to do things that look intelligent.

Machine learning (ML) lives inside AI: systems learn from data instead of hard-coded rules.

Deep learning (DL) is a way for computers to learn patterns by practicing on a huge number of examples.

NLP (natural language processing) is the part of AI that works with human language. As simple as that.

Generative AI is the branch that creates new content (text, images, audio, code). Whatever it is, it just means it generates things rather than predicts things, as done with more classical AI systems.

LLMs (Large Language Models) are deep learning models within the generative AI family that specialize in text generation.

That’s all you need for now: AI → ML → DL → (NLP) → LLMs. With the labels straight, we can understand what an LLM actually does.

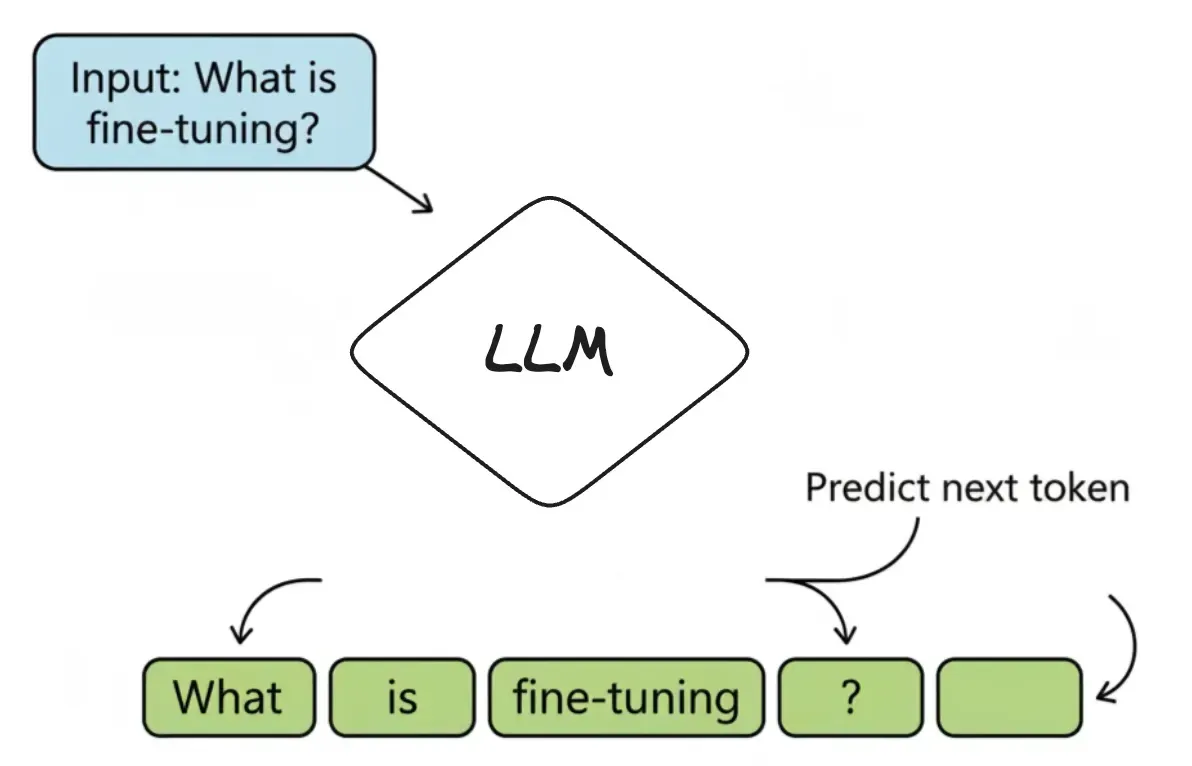

What is an LLM?

An LLM is a powerful autocomplete system. It’s a machine built to answer one simple question over and over again: “Given this sequence of text, what is the most probable next token?” That “piece of text” is called a token—it can be a word, a part of a word (like runn and ing), or punctuation.

For example, if a user asks ChatGPT, “What is fine-tuning?”, it doesn’t “know” the answer. It just predicts the next token, one at a time:

The most probable first token is “Fine-tuning”.

Given that, the next most probable token is “is”.

Next is “the”, and so on...

Until it generates a full sentence: “Fine-tuning is the process of training a pre-trained model further on a smaller, specific dataset.”

It’s called a Large Language Model for three simple reasons:

Large: It has billions of internal variables (called parameters) and was trained on a massive amount of text.

Language: It’s specialized for understanding and generating human language.

Model: It’s a mathematical representation of the patterns it learned.

So, at its heart, an LLM is just a very advanced guessing machine: predicting the next token again and again until a full answer appears. So, how does it get good at making those guesses in the first place?

To get there, the model undergoes a long study session of its own—a process called pre-training. For example, if a student is asked to read every book in a giant library (a huge slice of the internet, in the case of LLMs). They’re not trying to memorize pages word-for-word. Instead, they learn patterns—how words, sentences, and ideas fit together—well enough to predict the next piece of text in any sentence. This is how a base model like GPT-5 is built during pre-training.

https://newsletter.systemdesign.one/p/llm-concepts?utm_source=publication-search

AI Agents

AI Workflows

AI workflows can be either non-agentic or agentic.

Non-Agentic Workflow: An LLM is given an instruction and produces a response. For example, in a question answering workflow, the input is a question, the LLM generates an answer, and the workflow returns that answer.

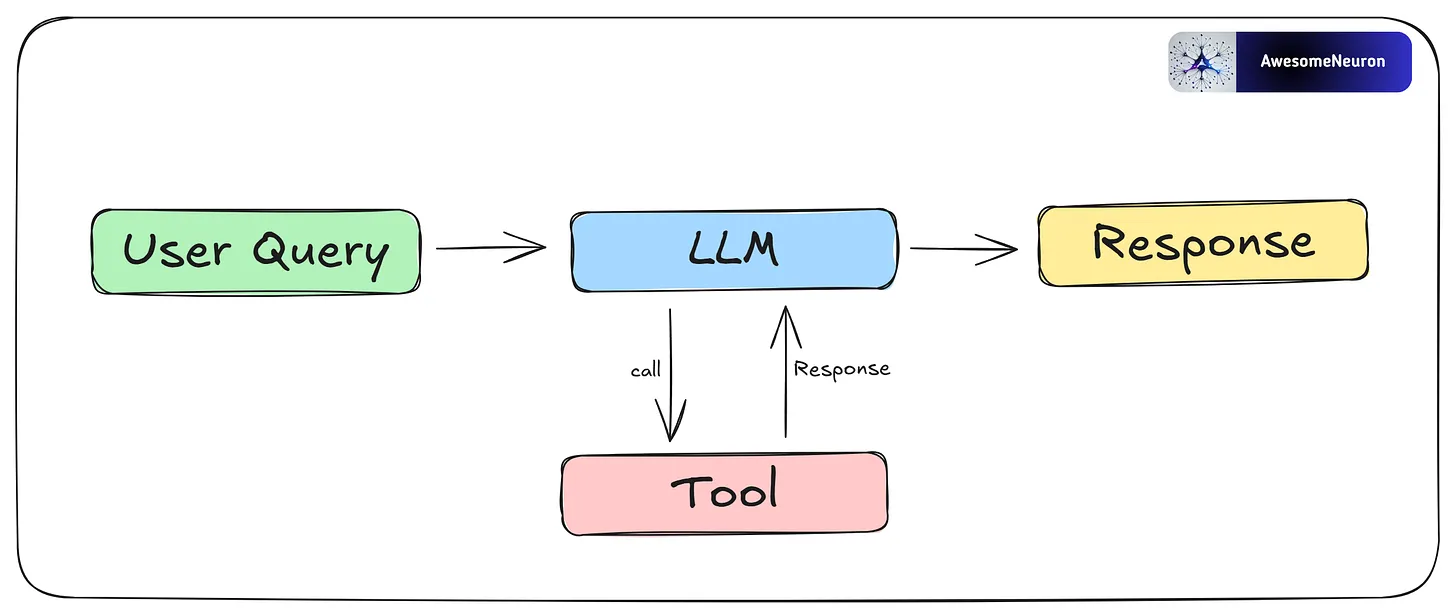

Even if you give the LLM an extra tool, the workflow is still non-agentic if it follows a fixed path and the LLM has no control over decisions or actions.

Agentic Workflow: An agentic workflow is a set of connected steps carried out by an agent or multiple agents to complete a task or goal. These workflows use key features of AI agents, such as reasoning, planning, tool use, and memory.

Now let’s take a closer look at AI agents.

AI Agents

AI agents are systems that use LLMs for reasoning and decision-making, along with tools to interact with the real world. This allows them to handle complex tasks with minimal human input. Each agent is given a specific role and a certain level of autonomy to achieve its goal. They also have memory, which helps them learn from past actions and improve over time.

https://newsletter.systemdesign.one/p/ai-agents-explained

https://substack.com/home/post/p-181466313

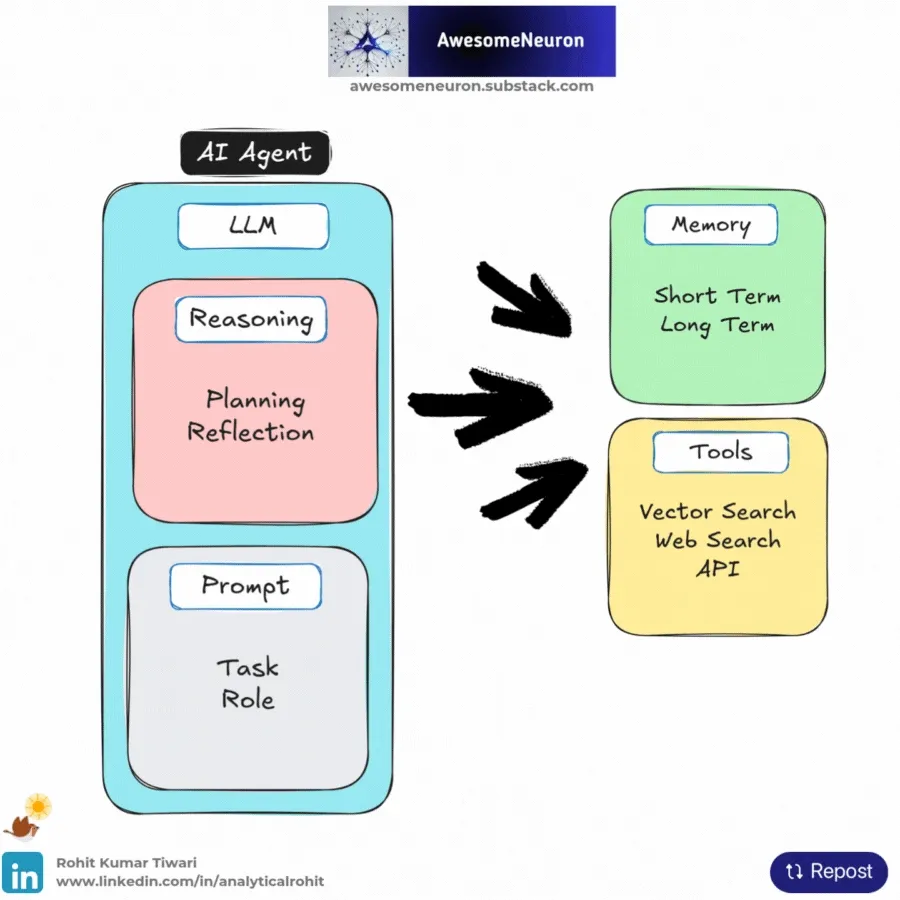

Components of an AI Agent

Reasoning: AI agents work well because they can reason step by step. This ability comes from the LLM (with a defined role and task) and supports two main functions: Planning and reflecting.

Memory: Stores context and relevant information. Short-Term memory tracks the current interaction, while Long-Term memory holds past knowledge and experiences.

Tools (vector search, web search, APIs, etc.): Extends the agent’s abilities beyond text generation, giving it access to external data, real-time information, or APIs.

Agentic Patterns

These patterns enable agents to dynamically adapt, plan, and collaborate, ensuring that the system can handle complex, real-world tasks with precision and scalability.

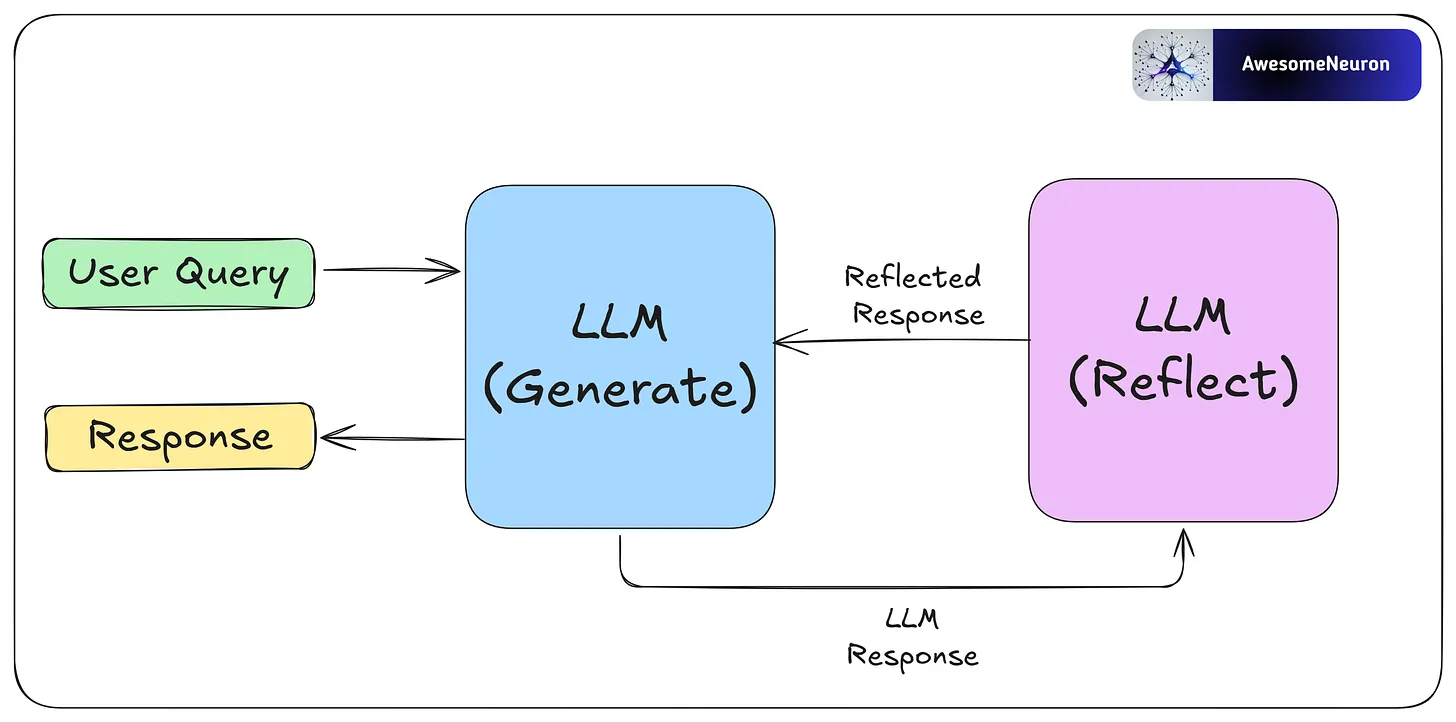

- Reflection

The reflection pattern is a self-feedback mechanism, enabling agents to iteratively evaluate and refine their outputs.

Reflection is especially useful for tasks like coding, where the agent may not succeed right away. For example, it can write code, test it, analyze any errors, and use that feedback to fix and improve the code until it works.

By critiquing its own work and learning from each step, the agent can improve without human help. These reflections can be saved in memory so the agent solves future problems faster and adapts to user needs over time.

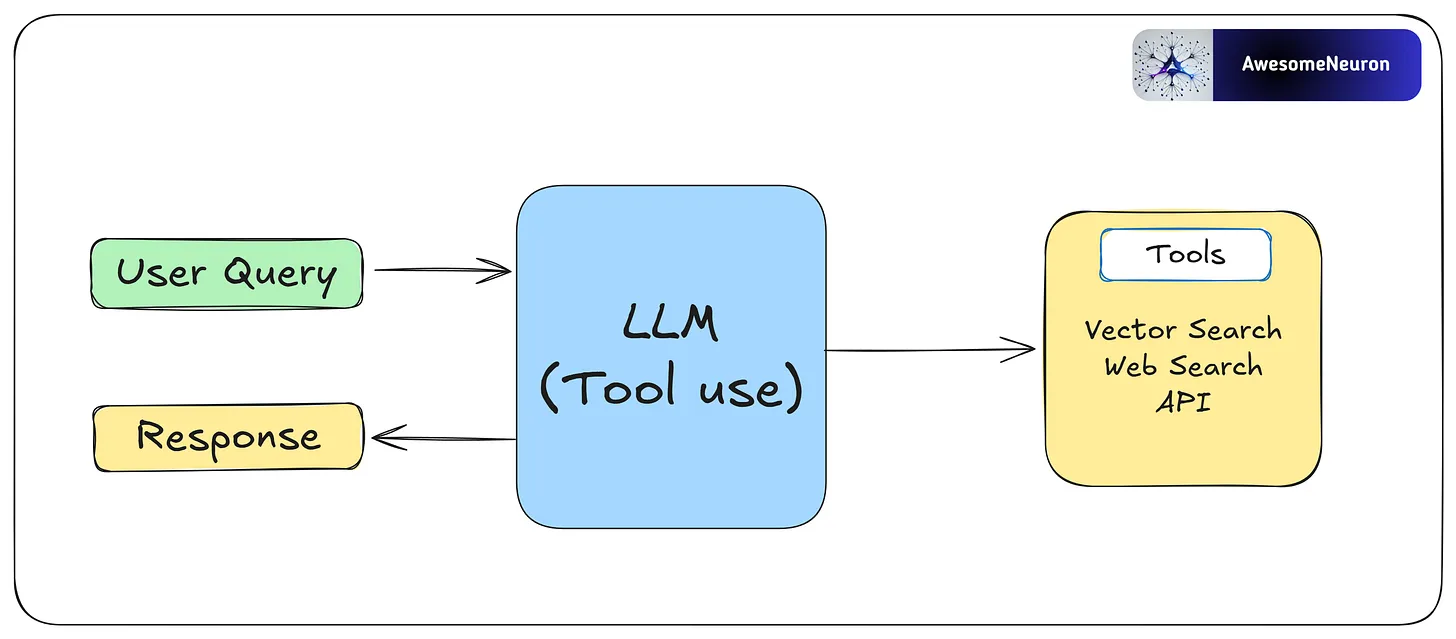

- Tool Use

Agents interact with external tools like vector search, APIs, or web search to expand their capabilities.

This pattern allows agents to gather information, perform computations, and manipulate data beyond their pre-trained knowledge. By dynamically integrating tools into workflows, agents can adapt to complex tasks and provide more accurate and contextually relevant outputs.

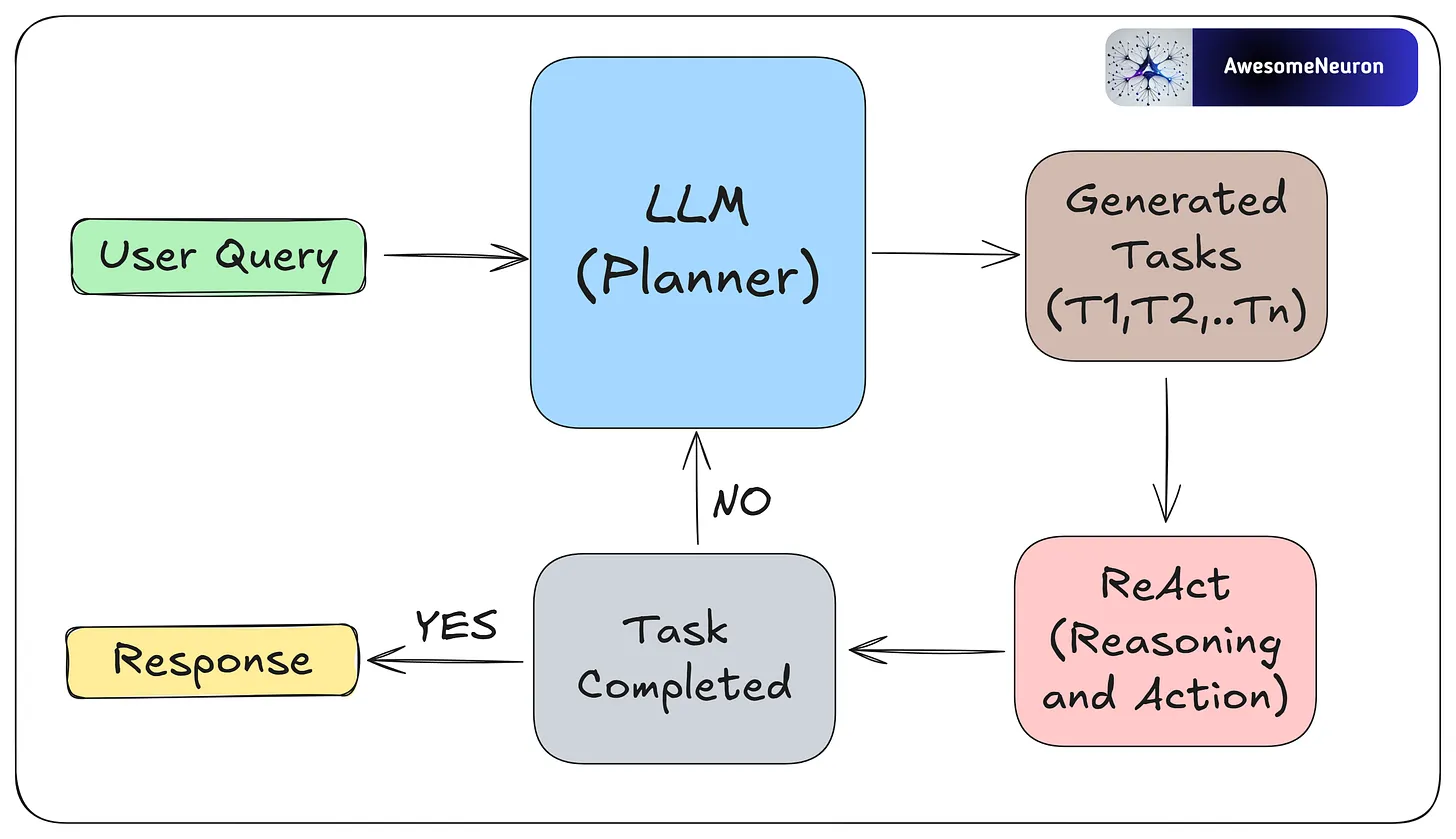

- ReAct

Reflection’s good. Tools are good. But when you let your agent think and act in loops, it gets even better.

That’s what the ReAct pattern is all about: Reasoning + Acting.

Instead of answering everything in one go, the model reasons step-by-step and adjusts its actions as it learns more.

Example:

Goal: “Find the user’s recent invoices.”

Step 1: “Query payments database.”

Step 2: “Hmm, results are outdated. Better ask the user to confirm.”

Step 3: Adjust query, repeat.

It’s not just responding — it’s navigating.

To make ReAct work, you’ll need three things:

Tools (for taking action)

Memory (for keeping context)

A reasoning loop (to track progress)

ReAct makes your agents flexible. Instead of sticking to a rigid script, they think through each step, adapt in real-time, and course-correct as new information comes in.

If you want to build anything beyond a quick one-off answer, this is the pattern you need.

- Planning

Planning is a key agentic pattern that enables agents to autonomously decompose complex tasks into smaller, manageable subtasks.

Planning works best when the path to the goal is unclear and flexibility is important. For example, to fix a software bug, an agent might first read the bug report, find the relevant code, list possible causes, and then choose a debugging method. If the first fix fails, it can adjust based on the new error.

This is useful for solving complex problems that require flexibility and multiple steps.

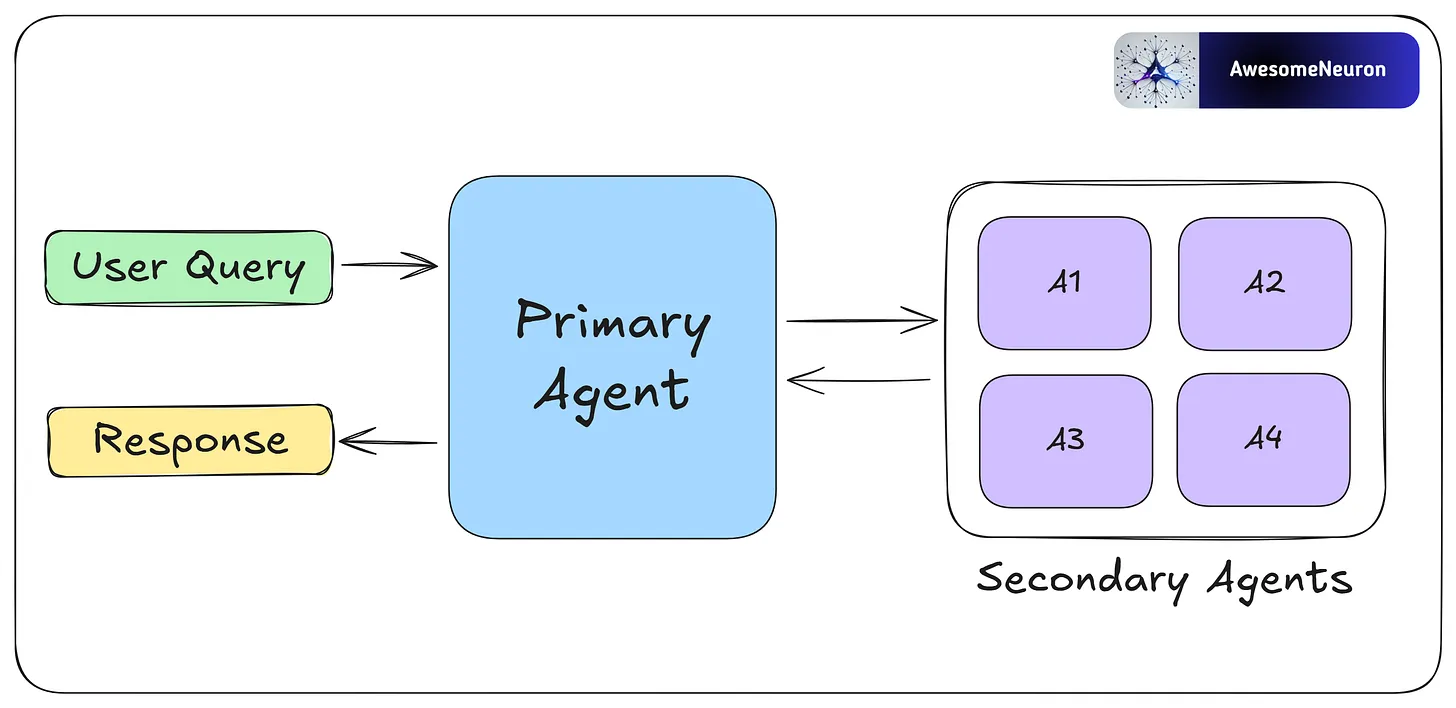

- Multi-Agent

Multi-agent collaboration allows agents to specialize in different tasks and work in parallel. Agents share progress and results to keep the overall workflow efficient and consistent.

By breaking complex tasks into smaller parts and assigning them to different agents, this approach makes workflows more scalable and flexible. Each agent can use its own memory, tools, reflection, or planning, which supports dynamic and collaborative problem-solving.

Benefits of Agentic Workflows

Flexible and adaptable: Agentic workflows adjust to changing tasks and challenges. Unlike rule-based systems, they evolve based on task complexity and can be customized using different patterns.

Efficiency Gains: Agentic workflows can automate repetitive tasks with high accuracy.

Self-correcting and learning: Using feedback and memory, agents refine their actions over time. This leads to better performance with each use.

Better at complex tasks: By breaking complex problems into smaller steps, agents handle complex tasks more effectively.

Challenges of Agentic Workflows

Overkill for simple tasks: AI agents can create extra overhead when used for simple tasks, leading to unnecessary complexity and higher costs.

Less predictable: More autonomy means more unpredictability. Without proper guardrails, agent outputs can become unreliable or hard to control.

Ethical concerns: Not all decisions should be left to AI. Sensitive tasks need human oversight to avoid mistakes or harm.

Agentic workflows are powerful, but they come with extra complexity and computation. Use them only when needed.

Context Engineering vs Prompt Engineering

Prompt Engineering

The “Static Script”.

If you’ve been working with LLMs, you already know Prompt Engineering. It’s the craft of writing effective instructions for a model, and for the past two years, it’s been the primary way developers have tried to improve AI output.

Think of an LLM as a talented improv actor:

Prompt Engineering is the script you hand them before the curtain rises. It defines their character, the scene's tone, and the rules of engagement. A good script can make a significant difference in performance.

The standard developer toolkit for prompt engineering includes:

Role Assignment – Setting the persona with instructions like “You are an expert travel agent.” This primes the model to use vocabulary and behaviors associated with that role.

Few-Shot Examples – Providing input/output pairs demonstrating the exact format you want. For example, showing the model three examples of properly formatted JSON responses before asking it to generate one.

Chain of Thought (CoT) – Instructions like “Let’s think step by step” that encourage the model to show its reasoning. This dramatically reduces errors in logic and math problems by forcing the model to “work through” the problem rather than jumping to an answer.

Constraint Setting – Hard limits on output, like “Limit your response to 50 words,” or “Respond only with valid JSON.”

These techniques are valuable and worth mastering.

But they share a fundamental limitation that becomes apparent when you move from demos to production.

The “Paris, Kentucky” Failure

The limitation of Prompt Engineering is that it is static.

Let’s return to our travel agent. The user says: “Book me a hotel in Paris for the DevOps conference.”

An agent relying only on prompt engineering sees the words “Paris” and “DevOps.” It has no access to external data, so it does what LLMs do when they lack information: it makes a probabilistic guess. There’s a Paris in France, sure, but there’s also a Paris in Kentucky, Texas, Tennessee, and several other states. Without additional context, the model has no way of knowing which one you mean.

The result?

Your user gets booked into the Best Western in Paris, Kentucky.

No amount of clever prompt phrasing can fix this. You could write “Always book hotels in major international cities”—but what about the user who actually wants Paris, Texas? The problem isn’t the prompt. The problem is that the model lacks three critical pieces of information:

User lives in London (suggesting international travel is more likely)

DevOps conference this year is actually in Paris, France

The user’s company policy requires booking Marriott properties under €200/night

This is where the paradigm shifts.

We need to move from optimizing static scripts to dynamically assembling the right information at runtime. We need Context Engineering.

https://andrewships.substack.com/p/prompt-driven-development

https://newsletter.systemdesign.one/p/context-engineering-vs-prompt-engineering

https://addyo.substack.com/p/the-prompt-engineering-playbook-for

https://blog.bytebytego.com/p/a-guide-to-effective-prompt-engineering

What is Context?

https://newsletter.systemdesign.one/p/what-is-context-engineering

https://blog.bytebytego.com/p/a-guide-to-context-engineering-for

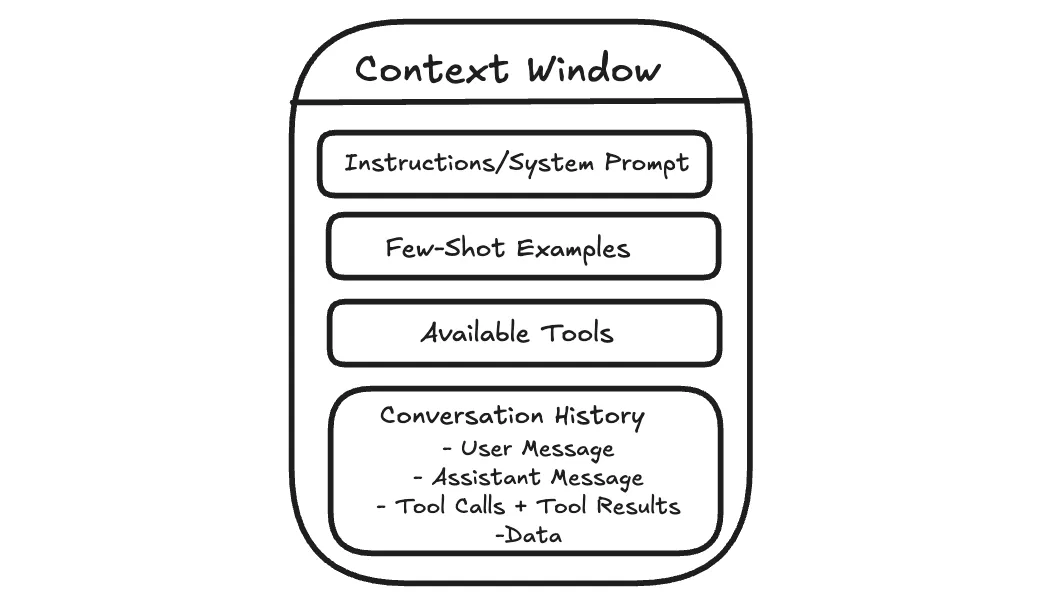

Before getting into techniques or frameworks, it helps to be clear about what “context” actually means.

When you send a message to an AI assistant, it doesn’t just see your latest question. It sees the information included with your message, such as system instructions that guide its behavior, relevant parts of the conversation so far, any examples you provide, and sometimes documents or tool outputs.

All of that together is the context.

This matters because the model lacks long-term memory as humans do. It cannot recall past conversations unless that information is included again. Each response is generated solely from the current context.

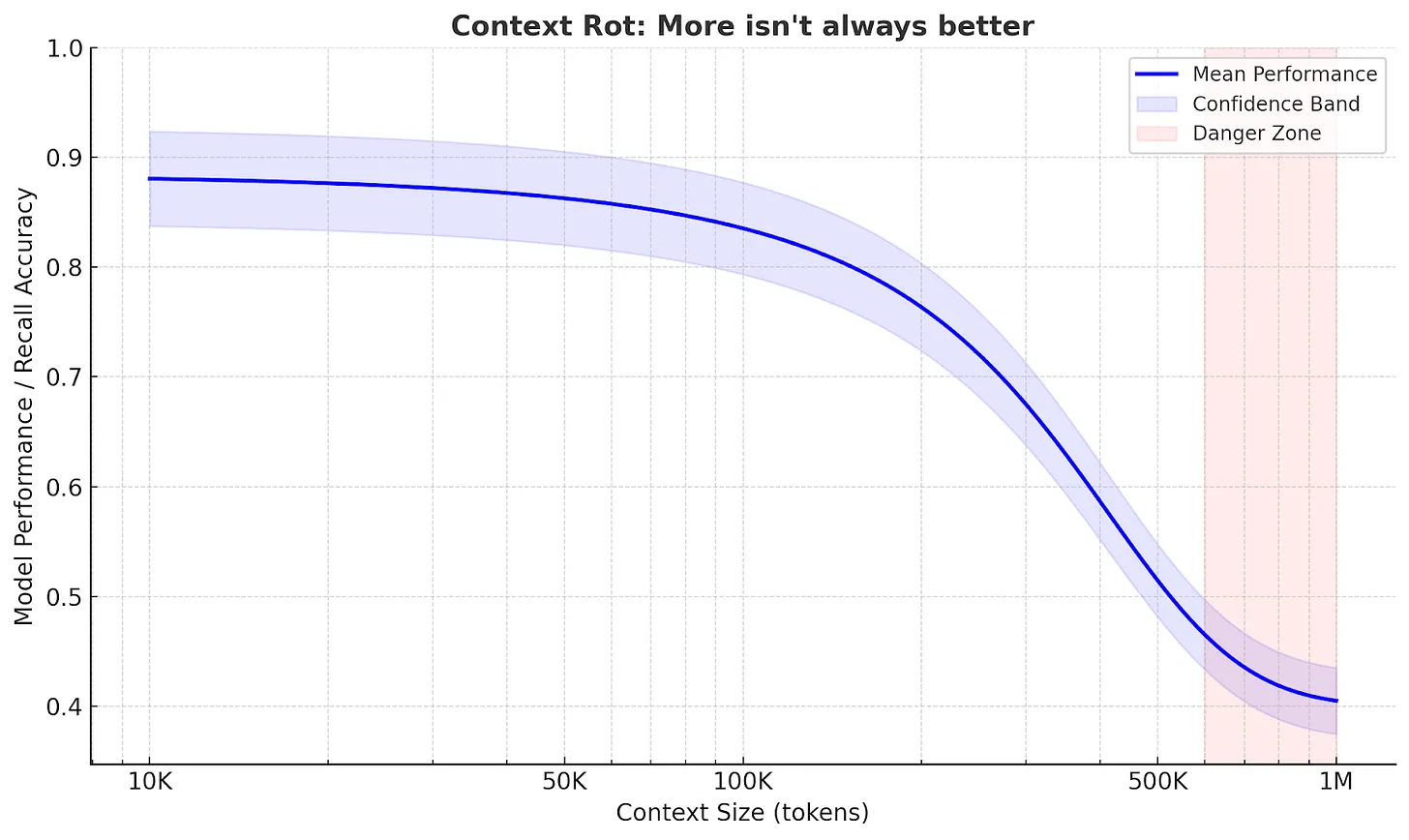

The model can pay attention to only a limited amount of text at once. This limit is often called the context window. Dumping more into that space often makes answers worse, not better.

Context engineering is about managing that working space.

The goal is not to give the model as much information as possible, but to give it the right information at the moment it needs to respond.

Why does this matter for agents?

This becomes more important when the AI is doing more than answering a single question.

A simple chatbot takes your question, replies, and stops. But more advanced AI systems, often called agents, work on tasks that unfold over many steps. They might search for information, read results, summarize what matters, and then decide what to do next.

Each step generates new information that is added to what the model sees next, such as search results, summaries, and intermediate notes. Over time, the context grows, and much of it becomes no longer relevant to the current step. This is called context rot, where useful information gets buried under outdated details.

Agents often work well on focused tasks.

But when a task is broad and requires many steps, the quality can vary. As the context gets heavier, important details from earlier can get lost.

The Anatomy of Context

Understanding what causes context rot is the first step.

The next is knowing exactly what goes into the context window so you can control it.

When an AI generates a response, it is not just reacting to your last message. It is responding to a structured bundle of inputs. Each part plays a different role, but they all compete for the same limited space.

- System Prompt and User Prompt

The system prompt determines the model's overall behavior.

It describes how the assistant should act, the rules it should follow, and the kinds of responses expected.

Most of the time, you do not see the system prompt directly. It’s defined by the product or application you are using. This is why two assistants built on the same underlying model can behave very differently.

For example, ChatGPT tends to answer politely, refuse certain requests, and format responses in predictable ways, even if you never explicitly asked it to do so.

The user prompt is your message.

This includes your current question and, in a chat setting, earlier messages that are still included.

Both are sent to the model together. The system prompt guides behavior, and the user prompt describes what to do right now.

If you are building an AI feature and you control the system prompt, the hard part is balance. If the instructions are too strict, the assistant can become brittle when something unexpected occurs. If they are too vague, responses become inconsistent.

A practical approach is to start minimal, test with real use cases, and add rules only when you see specific failures.

- Examples

Sometimes the clearest way to guide an AI is to show it what you want.

Instead of writing a long list of rules, you can include one or two example inputs and the exact outputs you expect. This is often called few-shot prompting.

You have probably done this in ChatGPT without realizing it. If you say, “Format it like this,” and paste a sample answer, the model will usually follow the pattern.

Examples work because they remove ambiguity. They show tone, structure, and level of detail in a way that instructions often cannot.

The tradeoff is space. Examples take up room in the context window, so they need to earn their spot. A few well-chosen examples are usually better than a long list.

- Message History

In a chat, the model can respond to follow-up questions because earlier messages remain in context.

For example, if you ask ChatGPT, “What is the capital of France?” and then ask, “What is the population?”, it can usually infer you still mean the capital you just discussed.

This works because the conversation so far acts like shared scratch paper. The model does not truly remember the earlier exchange. It is simply reading it again as part of the input.

The problem is that the message history grows over time. As more turns accumulate, older messages take up space even when they are no longer relevant. That can make the model less focused. It may repeat itself, follow outdated assumptions, or miss a detail that matters now.

Managing message history usually means keeping what is still relevant, summarizing what is settled, and letting the rest drop out of the active context.

- Tools

On its own, an LLM can only generate text. Tools let it do more than that.

Tools allow an agent to search the web, read documents, run code, query databases, or interact with external systems. When a tool is used, the result is usually fed back into the context so the model can use it in the next step.

You have seen this in ChatGPT when it searches the web or analyzes a file you uploaded. The output becomes part of what the model sees before it responds.

Tools are powerful, but every tool call adds more text that competes for attention. If a tool returns too much information or in an unclear format, it can overwhelm the model rather than help it.

Good tool design keeps results focused and predictable. Clear names, narrow responsibilities, and concise outputs make it easier for the model to use tools effectively.

- Data

Beyond messages and tools, agents often work with external data.

This can be a document you upload, an article you paste into the chat, or files the system can access. When that information is included, it becomes part of the context.

Large documents do not always behave the way you expect. The model may focus on the wrong section or miss details. This is often a context management problem, not carelessness.

Managing documents usually means breaking them into smaller pieces, pulling in what is relevant to the current step, and leaving the rest out of the active context until needed.

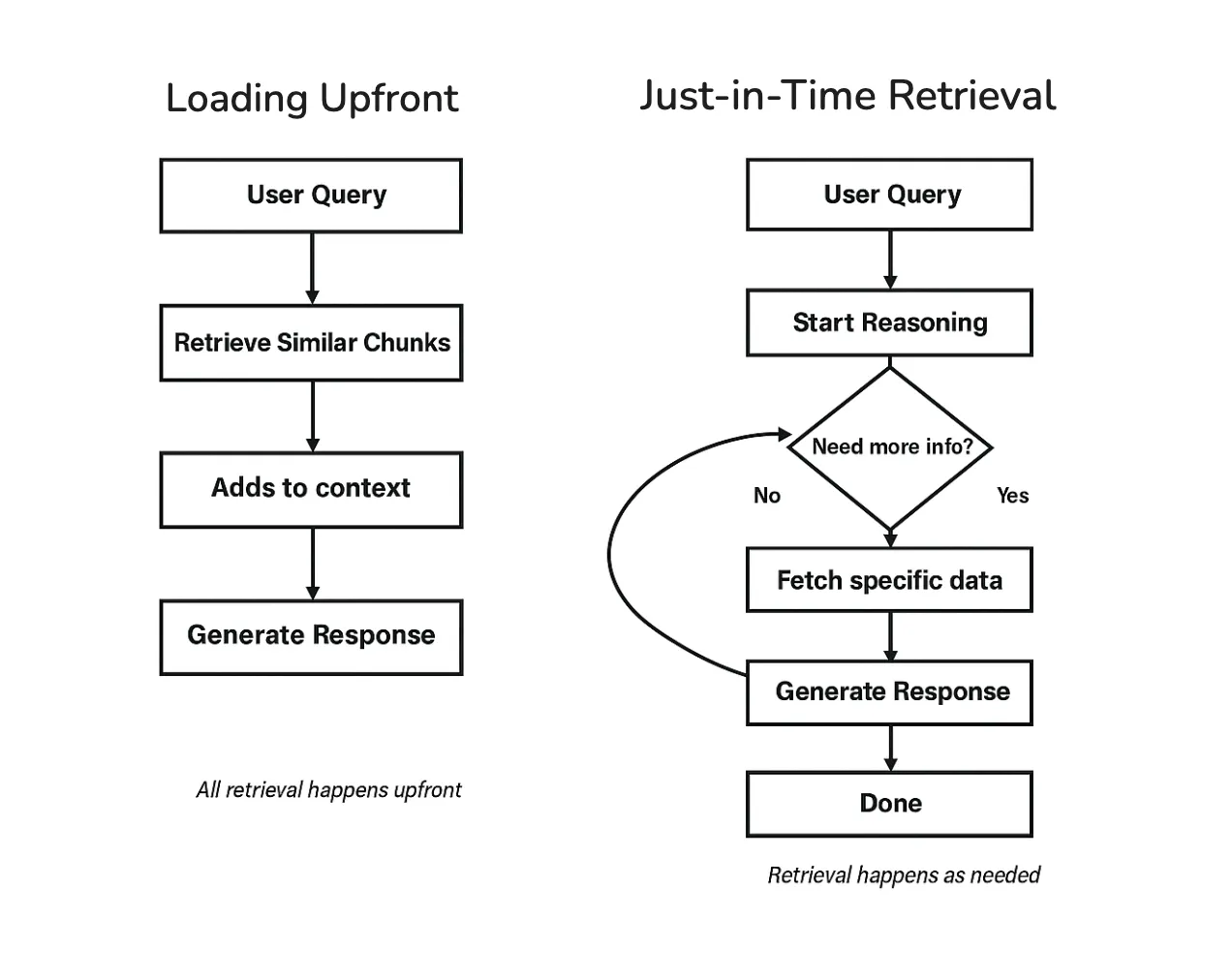

Context Retrieval Strategies

System instructions, examples, tools, and message history are the context in which you can write directly.

But often the most important information is not known in advance. It has to be retrieved during the task.

For example, if you ask ChatGPT a question about a PDF you uploaded, it needs to find the relevant section. If you ask it to search the web, it has to decide what to search for and which results matter.

How an agent retrieves and injects information is a major part of context engineering. There are two main approaches: loading everything upfront, or retrieving as you go.

- Loading Upfront

The simplest approach is to retrieve relevant information before the model starts responding, then include it in the context all at once.

This is what happens when ChatGPT searches the web and then writes an answer using the results it just found. The model is not answering from memory. It is answering based on the information that was retrieved and added to its context.

This pattern is commonly called retrieval augmented generation (RAG).

https://newsletter.systemdesign.one/p/how-rag-works

Loading upfront works well when the question is clear, and the agent can predict what information will be useful. The downside is that the agent makes an early retrieval decision and may stick with it.

If something important is missing or the task changes direction, it can be harder to correct course.

- Just-in-Time Retrieval

Another approach is to retrieve information as the task unfolds.

Instead of loading everything at the start, the agent takes a step, looks at what it has learned so far, and retrieves more information only when needed. You can sometimes see this in ChatGPT when it searches, reads, refines the query, and searches again during longer tasks.

This keeps the context cleaner because only the information actually needed gets pulled in. The tradeoff is that it takes more steps and requires the agent to decide when to retrieve and when to stop.

A useful pattern within just-in-time retrieval is to start broad and then drill down. This specification is called Progressive Disclosure.

Rather than loading full documents immediately, the agent may start with short snippets or summaries, identify what looks relevant, and pull in more detail only then.

This is how humans tackle research, too.

You do not read every article in a database. You scan titles, read abstracts of promising ones, and dive deep only into the sources that matter.

- Hybrid Strategy

Fortunately, you don’t have to pick one or the other.

Many agents combine both approaches. They load a small amount of baseline information upfront, then retrieve more as needed.

You can see this in tools like ChatGPT. Some instructions and conversation history are already present, and additional information, such as search results or document excerpts, is pulled in based on what you ask.

For simpler use cases, loading upfront is often enough. As tasks get more complex and span multiple steps, retrieving as you go becomes more important.

The right choice depends on how predictable your agent’s information needs are.

Techniques for Long-Horizon Tasks

Retrieval helps an agent pull in the right information.

But some tasks create a different problem. They run long enough that the agent produces more text than can fit in the context window.

You may have seen this in ChatGPT during long conversations or research tasks. Early responses are clear, but after many steps, the answers can drift or repeat themselves, especially when you send very long instructions, like asking for help with entire code bases. Over a long task, the agent can encounter far more information than it can keep in working memory at once.

Larger context windows are not a complete solution. They can be slower and more expensive, and they still accumulate irrelevant information over time. A better approach is to actively manage what stays in context and preserve the important parts as the task grows.

Three techniques help with this:

compressing the context when it gets full,

keeping external notes,

splitting work across multiple agents.

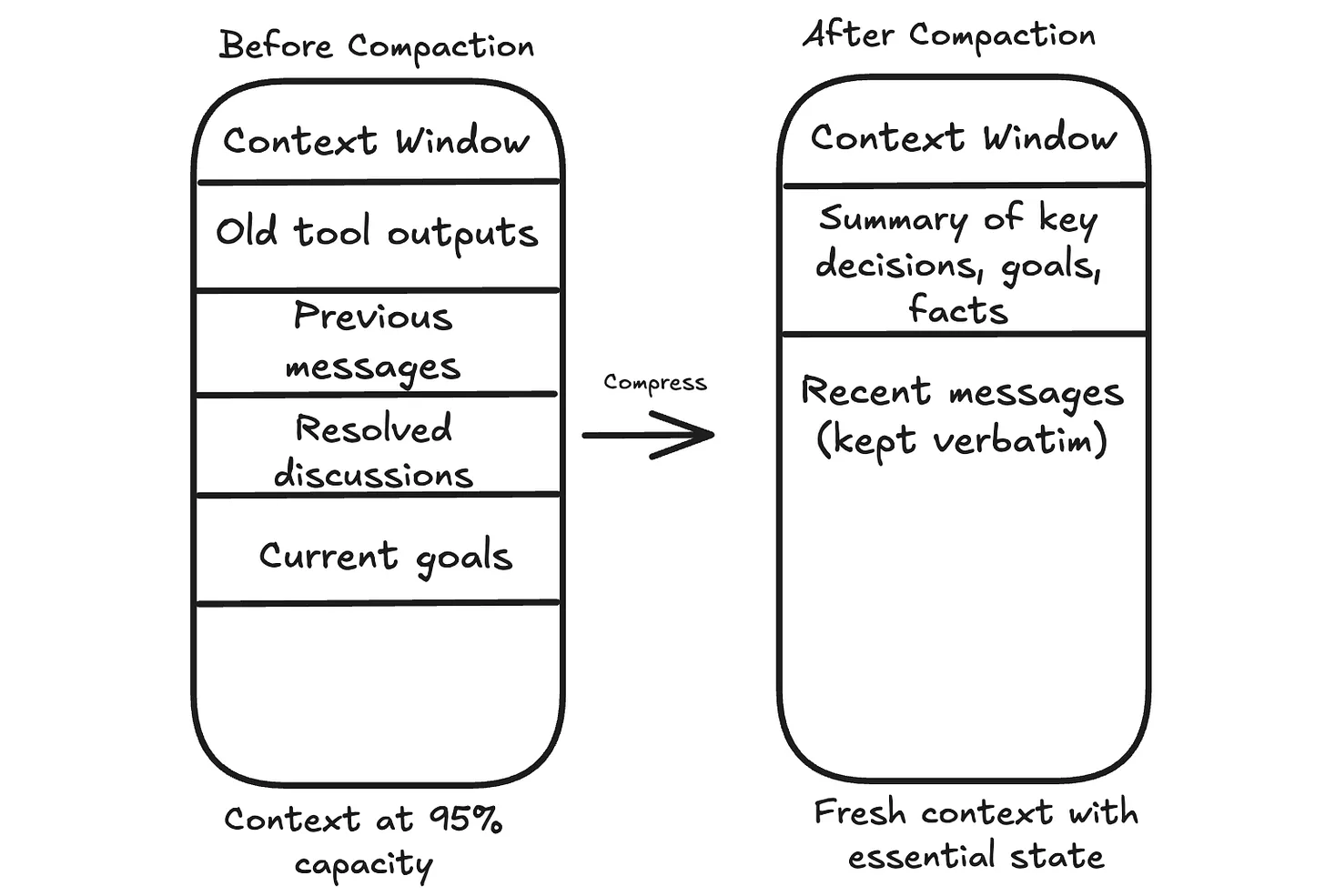

- Compaction

When the context approaches its limit, one option is to compress what’s there.

The agent summarizes the conversation so far, keeping the essential information and discarding the rest. This compressed summary becomes the new starting point, and the conversation continues from there.

You may have noticed something like this in long ChatGPT conversations. After many messages, earlier details can fade. This often happens because older parts of the conversation are shortened or dropped to make room for new input.

The hard part is deciding what to keep.

The goal, key constraints, open questions, and decisions that affect future steps should stay. Raw tool outputs that have already been used can usually go. Repeated back and forth that does not change the plan can go too.

There is always a risk of losing something that matters later. A common safeguard is to store important details outside the context before discarding them, so the agent can retrieve them if needed.

- Structured Note Taking

Compaction occurs when you’re running out of space. Structured note-taking happens continuously.

As the agent works, it keeps a small set of notes outside the context window. These notes capture stable information, such as the goal, constraints, decisions made so far, and a short list of what remains.

You can see a user-level version of this idea in features like ChatGPT’s memory. If you tell it to remember something, that information can persist beyond a single conversation and be brought back when relevant.

This works well for tasks with checkpoints or tasks that span multiple sessions.

A coding agent might keep a checklist of completed steps. A support assistant might store user preferences so that it does not have to ask the same questions again.

- Sub-Agent Architectures

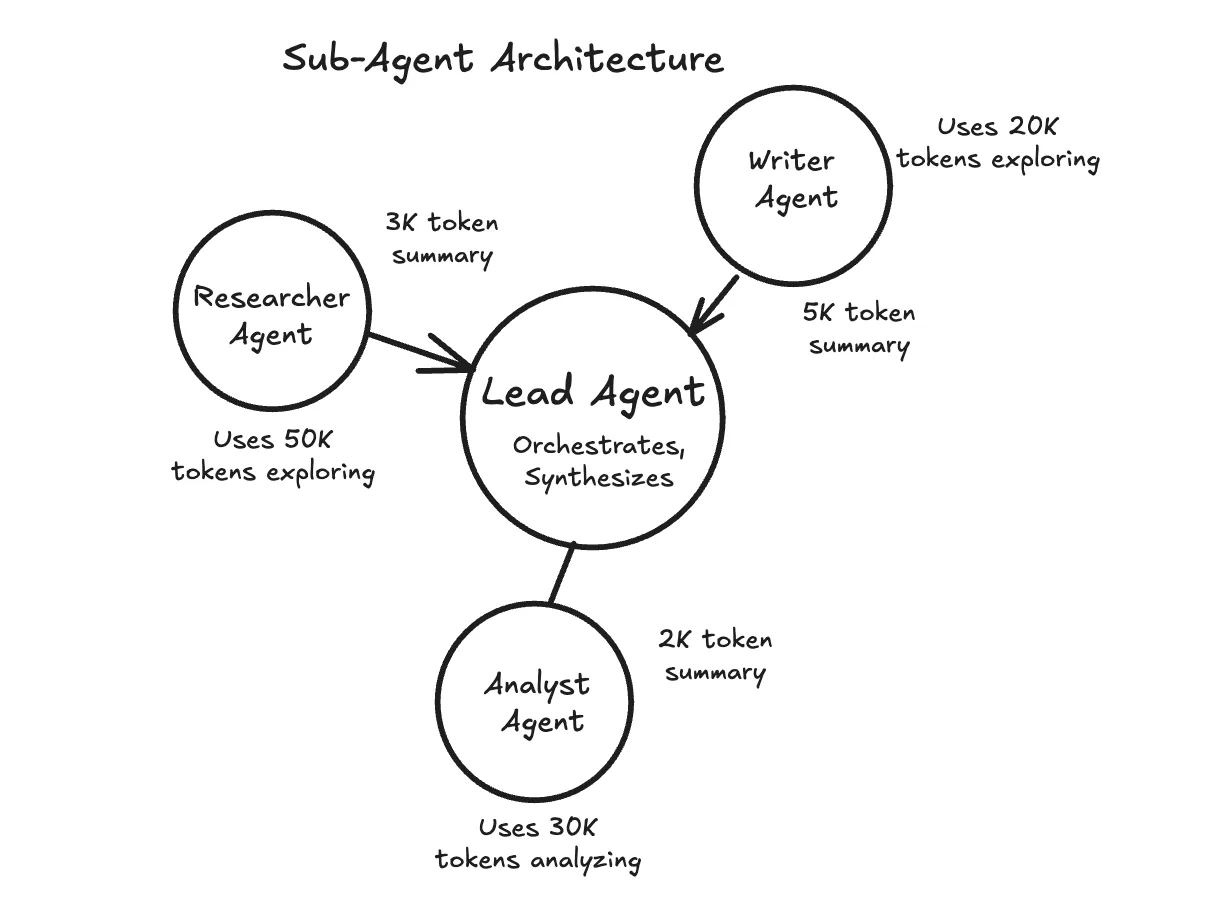

Sometimes the best approach is to break a large task into pieces and assign each piece to a separate agent with its own context window.

In many research-style agent designs, a main agent coordinates the overall task, while sub-agents handle focused subtasks. A sub-agent explores one area in depth, then returns a short summary. The main agent keeps the summary and moves on without carrying all the raw details forward.

You can think of research features in tools like ChatGPT as an example of the kind of workflow where this pattern is useful.

This works well when subtasks can run independently or require deep exploration.

The tradeoff is complexity. Coordinating multiple agents is harder than managing a single one, so it is usually best to start with simpler techniques and add sub-agents when a single agent becomes overwhelmed.

Choosing the Right Technique

There’s no one-size-fits-all solution. The right approach depends on your “agent and your use case”. These rules of thumb can help:

Compaction works best for long, continuous conversations where context gradually accumulates.

Structured notes work best for tasks with natural checkpoints or when information needs to persist across sessions.

Sub-agents work best when subtasks can run in parallel or require deep, independent exploration.

These techniques can be combined. Start with the simplest approach and add complexity as needed.

Putting It All Together

Context engineering is not a single technique.

It’s an approach to designing AI systems. At each step, you decide what goes into the model’s context, what stays out, and what gets compressed.

The components we covered work together.

System prompts and examples shape behavior. Message history maintains continuity. Tools let the agent take actions. Data gives it information to work with. Retrieval strategies determine how and when that information gets loaded. For long-running tasks, compaction, external notes, and sub-agents help manage context that would otherwise overflow.

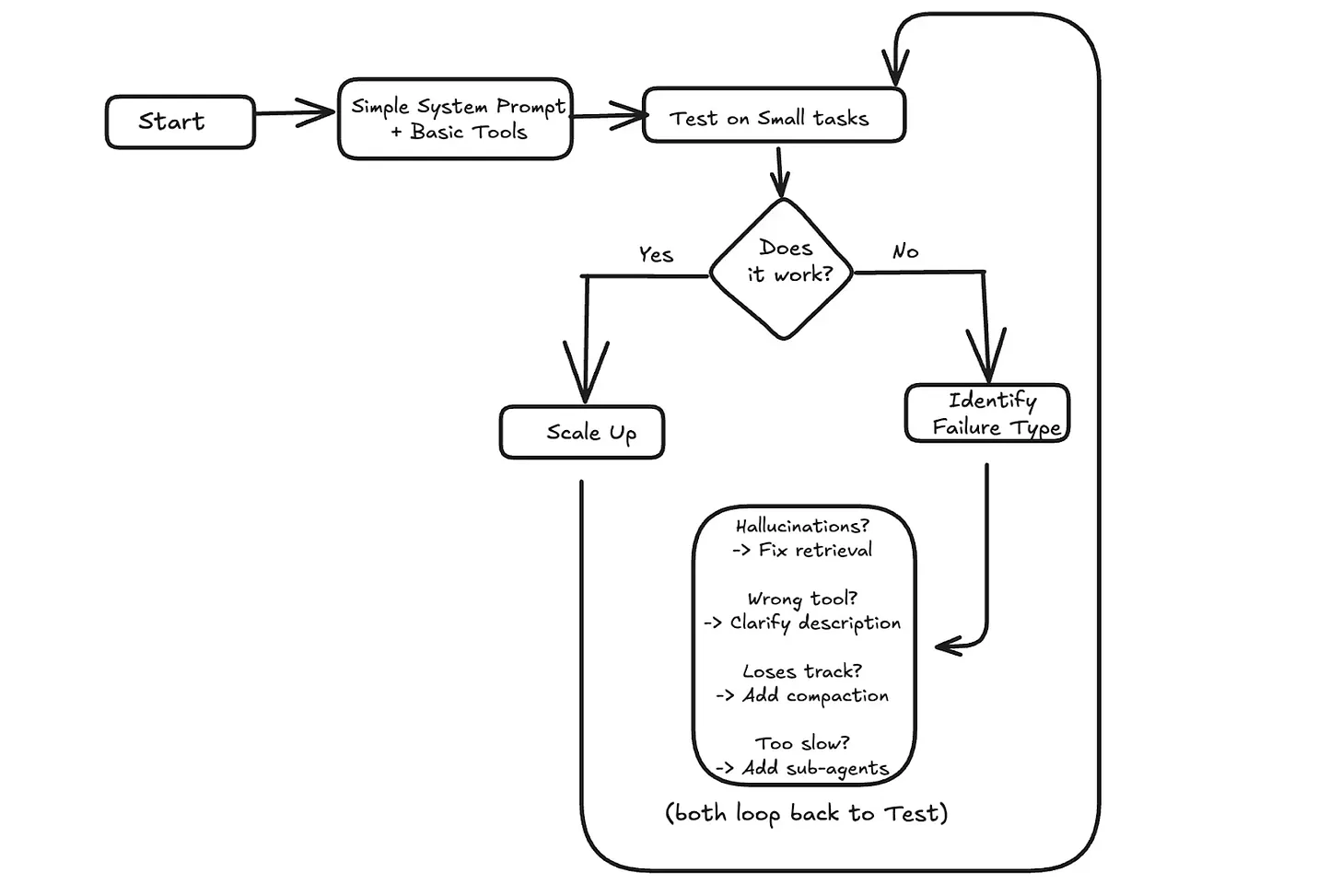

When something goes wrong, context is often the place to look. If the agent hallucinates, it might need better retrieval to ground its answers. If it picks the wrong tool, the tool descriptions might be unclear. If it loses track after many turns, the message history might need summarization.

A practical approach is to start simple.

Test with small tasks first. If it works, scale up. If it fails, identify what went wrong and address the specific issue.

How Does Memory For AI Agents Work?

https://www.decodingai.com/p/how-does-memory-for-ai-agents-work

AI Agent's Memory

One year ago at ZTRON, we faced a challenge that many AI builders encounter: how do we give our agent access to the right information at the right time? Like most teams, we jumped straight into building a complex multimodal Retrieval-Augmented Generation (RAG) system. We built the whole suite: embeddings for text, embeddings for images, OCR, summarization, chunking, and multiple indexes.

Our ingestion pipeline became incredibly heavy. It introduced unnecessary complexity around scaling, monitoring, and maintenance. At query time, instead of a straight line from question to answer, our agent would zigzag through 10 to 20 retrieval steps, trying to gather the right context. The latency was terrible, costs were high, and debugging was a nightmare.

Then we realized something essential. Because we were building a vertical AI agent for a specific use case, our data wasn’t actually that big. Through virtual multi-tenancy and smart data siloing, we could retrieve relevant data with simple SQL queries and fit everything comfortably within modern context windows—around 65,000 tokens maximum, well within Gemini’s 1 million token input capacity.

We dropped the entire RAG layer in favor of Context-Augmented Generation (CAG) with smart context window engineering. Everything became faster, cheaper, and more reliable. This experience taught me that the fundamental challenge in building AI agents isn’t just about retrieval. It is about understanding how to architect memory systems that match your actual use case.

The core problem we are solving is the fundamental limitation of LLMs: their knowledge is vast but frozen in time. They are unable to learn by updating their weights after training, a problem known as “continual learning”. An LLM without memory is like an intern with amnesia. They might be brilliant, but they cannot recall previous conversations or learn from experience.

To overcome this, we use the context window as a form of “working memory.” However, keeping an entire conversation thread plus additional information in the context window is often unrealistic. Rising costs per turn and the “lost in the middle” problem—where models struggle to use information buried in the center of a long prompt limit this approach. While context windows are increasing, relying solely on them introduces noise and overhead.

Memory tools act as the solution. They provide agents with continuity, adaptability, and the ability to “learn” without retraining. When we first started building agents, working with 8k or 16k token limits forced us to engineer complex compression systems. Today, we have more breathing room, but the principles of organizing memory remain essential for performance.

In this article, we will explore:

The four fundamental types of memory for AI agents.

A detailed look at long-term memory: Semantic, Episodic, and Procedural.

The trade-offs between storing memories as strings, entities, or knowledge graphs.

The complete memory cycle, from ingestion to inference.

The 4 Memory Types for AI Agents

To build effective agents, we must distinguish between the different places information lives. We can borrow terms from biology and cognitive science to categorize these layers useful for engineering.

There are four distinct memory types based on their persistence and proximity to the model’s reasoning core.

Internal Knowledge: This is the static, pre-trained knowledge baked into the LLM’s weights. It is the best place to store general world knowledge—models know about whole books without needing them in the context window. However, this memory is frozen at the time of training.

Context Window: This is the slice of information we pass to the LLM during a specific call. It acts as the RAM of the LLM. It is the only “reality” the model sees during inference.

Short-Term Memory: This is the RAM of the entire agentic system. It contains the active context window plus recent interactions, conversation history, and details retrieved from long-term memory. We slice this short-term memory to create the context window for a single inference step. It is volatile and fast, simulating the feeling of “learning” during a session

Long-Term Memory: This is the external, persistent storage system (disk) where an agent saves and retrieves information. This layer provides the personalization and context that internal knowledge lacks and short-term memory cannot retain [4].

The dynamic between these layers creates the agent’s intelligence. First, part of the long-term memory is “retrieved” and brought into short-term memory. This retrieval pipeline queries different memory types in parallel. Next, we slice the short-term memory into an active context window through context engineering. Finally, during inference, the LLM uses its internal weights plus the active context window to generate output.

Another useful way to visualize this is by proximity to the LLM. Internal memory is intrinsic, while long-term memory is the furthest away, requiring specific retrieval mechanisms to become useful.

Categorizing memory this way is critical for engineering. Internal knowledge handles general reasoning. Short-term memory manages the immediate task. Long-term memory handles personalization and continuity. No single layer can perform all three functions effectively. To better understand long-term memory, we can further apply cognitive science definitions to specific data types.

MCP - A Deep Dive

https://portkey.ai/blog/understanding-mcp-authorization/?ref=dailydev

In November 2024, Anthropic launched the Model Context Protocol (MCP) to fix this problem. This wasn’t just another developer tool. It aimed to tackle a key infrastructure issue in AI engineering.

The challenge?

Every AI application needs to connect to data sources. Until now, they had to build custom integrations from scratch. This approach creates a fragmented ecosystem that does not scale.

For example, the world isn’t frozen in time, but LLMs are…

If you want an AI to “monitor server logs,” you can’t feed it a file. You would have to build a data pipeline that polls an API every few seconds. Filter out noise, then push only the relevant anomalies into the AI’s context window. If the API changes its rate limits or response format, your entire agent breaks.

A better way to think of MCP is like a USB-C port.

The Old Way (Before USB):

If you bought a mouse, it had a PS/2 plug. A printer had a massive parallel port. A camera had a proprietary cable. If you wanted to connect these to a computer, the computer needed a specific physical port for each one.

This is the “N×M” problem:

Each device maker had to figure out how to connect to each computer. So, computer makers had to create ports for every device.

The MCP Way (With USB-C):

Now, everything uses a standard port.

Computer (AI Model) needs one USB-C port. It doesn’t need to know if you are plugging in a hard drive or a microphone; it just speaks “USB.”

Device (Data Source) requires a USB-C port. It doesn’t need to know if it’s plugged into a Mac, a PC, or an iPad.

The result: you can plug anything into anything instantly.

The analogy makes sense in theory. But to understand why MCP matters, you need to see how painful the current reality actually is…

The Integration Complexity Problem

Every AI assistant needs context to be useful:

Claude needs access to your codebase. ChatGPT may require your Google Drive files. Custom agents need database connections. But how do these AI systems actually get that context?

Traditionally, each connection requires a custom integration.

If you’re building an AI coding assistant, you need to:

Write code to connect to the GitHub API.

Add authentication and security.

Create a way to change GitHub’s data format into a version that your AI can understand.

Handle rate limiting, errors, and edge cases.

Repeat this entire process for GitLab, Bitbucket, and every other source control system.

This creates the N×M problem:

‘N’ AI assistants multiplied by ‘M’ data sources equals N×M unique integrations that need to be built and maintained.

When Cursor wants to add Notion support, they build it from scratch. When GitHub Copilot wants the same thing, they build it again. The engineering effort gets duplicated across every AI platform.

The traditional approaches have fundamental limitations:

Static, build-time integration:

Integrations are hard-coded into applications. You can’t add a new data source without updating the application itself. This makes rapid experimentation impossible and forces users to wait for official support.

Application-specific security implementations:

Every integration reinvents authentication, authorization, and data protection. This leads to inconsistent security models and increases the attack surface.

No standard way to discover:

AI systems can’t find out what capabilities a data source has. Everything needs clear programming. This makes it hard to create agents that adapt. They can’t use the new tools on their own.

The result is a ‘broken’ ecosystem!

Innovation is stuck because of integration work. Users can access only the connections that application developers create. So that’s a mess.

Now let’s look at how MCP cleans it up…

How MCP Solves It

MCP’s solution is straightforward: define a single protocol that functions across all systems.

Instead of building N×M integrations, you build N clients (one per AI application) and M servers (one per data source). The total integration work drops from N×M to N+M.

When a new AI assistant wants to support all existing data sources, it just needs to implement the MCP client protocol once.

When a new data source wants to be available to all AI assistants, it just needs to implement the MCP server protocol once.

Two critical architectural features power this efficiency:

1. Dynamic Capability Discovery

In traditional integrations, the AI application must know the data source details in advance.

If the API changes, the application breaks…

MCP flips this. It uses a “handshake” model. When an AI connects to an MCP server, it asks, “What can you do?”.

The server returns a list of available resources and tools in real time.

RESULT:

You can add a new tool to your database server, like a “Refund User” function. The AI agent finds it right away the next time it connects. You won’t need to change any code in the AI application.

2. Decoupling Intelligence from Data

MCP separates the thinking system (AI model) from the knowing system (the data source).

For Data Teams: They can build robust, secure MCP servers for their internal APIs without worrying about which AI model will use them.

For AI Teams: They can swap models without having to rebuild their data integrations.

This decoupling means your infrastructure doesn’t become obsolete whenever a new AI model gets released.

You build your data layer once, and it works with whatever intelligence layer you choose to plug into it.

An AI agent using MCP doesn’t need to know in advance what tools are available. It simply connects, negotiates capabilities, and uses them as needed. This enables a level of flexibility and scale that is impossible with traditional static integrations.

The high-level concept is straightforward.

But the real elegance is in how the architecture actually works under the hood…

MCP Architecture Deep Dive

MCP’s architecture has three main layers.

These layers separate concerns and allow for easy scalability:

- The Three-Layer Model

Hosts are user-facing apps.

This includes the Claude Desktop app, IDEs like VSCode, or custom AI agents you create. Hosts are where users connect with the AI. They are also where requests begin. They do more than display the UI; they orchestrate the entire user experience.

The host application interprets the user’s prompt.

It decides whether external data or tools are needed to fulfill the request. If access is necessary, the host creates and manages several internal clients. It keeps one client for each MCP server it connects to.

The protocol layer handles the mechanics of data access.

Clients are protocol-speaking connection managers that run on hosts.

Each client maintains a dedicated 1:1 connection with a single MCP server. They serve as the translation layer.

They convert abstract AI requests from the host into clear MCP messages. These messages, like tools/call or resources/read, can be understood by the server. Clients do more than send messages. They manage the entire session lifecycle. This includes handling connection drops, reconnections, and state.

When a connection starts, the client takes charge of capability negotiation. It asks the server which tools, resources, and prompts it supports.

Servers provide context.

They act as the layer that connects real-world systems, such as PostgreSQL databases, GitHub repositories, or Slack workspaces.

A server connects the MCP protocol to the data source. It translates MCP requests into the system’s native operations. For example, it turns an MCP read request into a SQL SELECT query. They are very flexible. They can run on a user’s machine for private access or remotely as a cloud service.

Servers share their available capabilities when connected. They inform the client about the Resources, Prompts, and Tools they offer.

https://newsletter.systemdesign.one/p/how-mcp-works

AGENTS.MD

An AGENTS.md file is a markdown file you check into Git that customizes how AI coding agents behave in your repository. It sits at the top of the conversation history, right below the system prompt.

Think of it as a configuration layer between the agent's base instructions and your actual codebase. The file can contain two types of guidance:

Personal scope: Your commit style preferences, coding patterns you prefer

Project scope: What the project does, which package manager you use, your architecture decisions

The AGENTS.md file is an open standard supported by many - though not all - tools.

Why Massive AGENTS.MD Files Are a Problem

There's a natural feedback loop that causes AGENTS.md files to grow dangerously large:

The agent does something you don't like

You add a rule to prevent it

Repeat hundreds of times over months

File becomes a "ball of mud"

Different developers add conflicting opinions. Nobody does a full style pass. The result? An unmaintainable mess that actually hurts agent performance.

Another culprit: auto-generated AGENTS.md files. Never use initialization scripts to auto-generate your AGENTS.md. They flood the file with things that are "useful for most scenarios" but would be better progressively disclosed. Generated files prioritize comprehensiveness over restraint.

The Instruction Budget

Kyle from Humanlayer's article mentions the concept of an "instruction budget":

Frontier thinking LLMs can follow ~ 150-200 instructions with reasonable consistency. Smaller models can attend to fewer instructions than larger models, and non-thinking models can attend to fewer instructions than thinking models.

Every token in your AGENTS.md file gets loaded on every single request, regardless of whether it's relevant. This creates a hard budget problem:

| Scenario | Impact |

|---|---|

Small, focused AGENTS.md |

More tokens available for task-specific instructions |

Large, bloated AGENTS.md |

Fewer tokens for the actual work; agent gets confused |

| Irrelevant instructions | Token waste + agent distraction = worse performance |

Taken together, this means that the ideal AGENTS.md file should be as small as possible.

Stale Documentation Poisons Context

Another issue for large AGENTS.md files is staleness.

Documentation goes out of date quickly. For human developers, stale docs are annoying, but the human usually has enough built-in memory to be skeptical about bad docs. For AI agents that read documentation on every request, stale information actively poisons the context.

This is especially dangerous when you document file system structure. File paths change constantly. If your AGENTS.md says "authentication logic lives in src/auth/handlers.ts" and that file gets renamed or moved, the agent will confidently look in the wrong place.

Instead of documenting structure, describe capabilities. Give hints about where things might be and the overall shape of the project. Let the agent generate its own just-in-time documentation during planning.

Domain concepts (like "organization" vs "group" vs "workspace") are more stable than file paths, so they're safer to document. But even these can drift in fast-moving AI-assisted codebases. Keep a light touch.

Cutting Down Large AGENTS.md File

Be ruthless about what goes here. Consider this the absolute minimum:

One-sentence project description (acts like a role-based prompt)

Package manager (if not npm; or use

corepackfor warnings)Build/typecheck commands (if non-standard)

That's honestly it. Everything else should go elsewhere.

The One-Liner Project Description

This single sentence gives the agent context about why they're working in this repository. It anchors every decision they make.

Example: This is a React component library for accessible data visualization.

That's the foundation. The agent now understands its scope.

Package Manager Specification

If you're In a JavaScript project and using anything other than npm, tell the agent explicitly:

This project uses pnpm workspaces.

Without this, the agent might default to npm and generate incorrect commands.

Use Progressive Disclosure

Instead of cramming everything into AGENTS.md, use progressive disclosure: give the agent only what it needs right now, and point it to other resources when needed.

Agents are fast at navigating documentation hierarchies. They understand context well enough to find what they need.

Move Language-Specific Rules to Separate Files

If your AGENTS.md currently says:

Always use const instead of let.

Never use var.

Use interface instead of type when possible.

Use strict null checks.

...

Move that to a separate file instead. In your root AGENTS.md:

For TypeScript conventions, see docs/TYPESCRIPT.md

Notice the light touch, no "always," no all-caps forcing. Just a conversational reference.

The benefits:

TypeScript rules only load when the agent writes TypeScript

Other tasks (CSS debugging, dependency management) don't waste tokens

File stays focused and portable across model changes

Nest Progressive Disclosure

You can go even deeper. Your docs/TYPESCRIPT.md can reference docs/TESTING.md. Create a discoverable resource tree:

docs/

├── TYPESCRIPT.md

│ └── references TESTING.md

├── TESTING.md

│ └── references specific test runners

└── BUILD.md

└── references esbuild configuration

You can even link to external resources, Prisma docs, Next.js docs, etc. The agent will navigate these hierarchies efficiently.

Use Agent Skills

Many tools support "agent skills" - commands or workflows the agent can invoke to learn how to do something specific. These are another form of progressive disclosure: the agent pulls in knowledge only when needed.

AGENTS.MD in Monorepos

You're not limited to a single AGENTS.md at the root. You can place AGENTS.md files in subdirectories, and they merge with the root level.

This is powerful for monorepos:

What Goes Where:

| Level | Content |

|---|---|

| Root | Monorepo purpose, how to navigate packages, shared tools (pnpm workspaces) |

| Package | Package purpose, specific tech stack, package-specific conventions |

Root AGENTS.md:

This is a monorepo containing web services and CLI tools.

Use pnpm workspaces to manage dependencies.

See each package's AGENTS.md for specific guidelines.

Package-level AGENTS.md (in packages/api/AGENTS.md):

This package is a Node.js GraphQL API using Prisma.

Follow docs/API_CONVENTIONS.md for API design patterns.

Don't overload any level. The agent sees all merged AGENTS.md files in its context. Keep each level focused on what's relevant at that scope.

Fix A Broken Agents.md with this prompt

If you're starting to get nervous about the AGENTS.md file in your repo, and you want to refactor it to use progressive disclosure, try copy-pasting this prompt into your coding agent:

I want you to refactor my AGENTS.md file to follow progressive disclosure principles.

Follow these steps:

1. **Find contradictions**: Identify any instructions that conflict with each other. For each contradiction, ask me which version I want to keep.

2. **Identify the essentials**: Extract only what belongs in the root AGENTS.md:

- One-sentence project description

- Package manager (if not npm)

- Non-standard build/typecheck commands

- Anything truly relevant to every single task

3. **Group the rest**: Organize remaining instructions into logical categories (e.g., TypeScript conventions, testing patterns, API design, Git workflow). For each group, create a separate markdown file.

4. **Create the file structure**: Output:

- A minimal root AGENTS.md with markdown links to the separate files

- Each separate file with its relevant instructions

- A suggested docs/ folder structure

5. **Flag for deletion**: Identify any instructions that are:

- Redundant (the agent already knows this)

- Too vague to be actionable

- Overly obvious (like "write clean code")

Don’t Build a Ball of Mud

When you're about to add something to your AGENTS.md, ask yourself where it belongs:

| Location | When to use |

|---|---|

Root AGENTS.md |

Relevant to every single task in the repo |

| Separate file | Relevant to one domain (TypeScript, testing, etc.) |

| Nested documentation tree | Can be organized hierarchically |

The ideal AGENTS.md is small, focused, and points elsewhere. It gives the agent just enough context to start working, with breadcrumbs to more detailed guidance.

Everything else lives in progressive disclosure: separate files, nested AGENTS.md files, or skills.

This keeps your instruction budget efficient, your agent focused, and your setup future-proof as tools and best practices evolve.

Agent Skills

What are the Claude Skills?

Claude Skills, officially launched as "Agent Skills" in October 2024 and significantly expanded in December 2024, are modular capabilities that teach Claude how to perform specific tasks in a repeatable, specialized way.

Think of Skills as custom training modules. Instead of explaining the same process to Claude every single time ("Here's how our company formats PRDs" or "Here's how we analyze user data"), you package those instructions once into a Skill. From then on, Claude automatically recognizes when that Skill is relevant and applies it.

The technical definition: Skills are folders containing instructions, scripts, and resources that Claude loads dynamically when needed to perform specialized tasks. They can include:

Markdown files with instructions and procedures

Executable code for complex operations

Templates and examples

Domain-specific knowledge

The practical reality: Skills turn Claude from a general-purpose AI into a specialist that knows your workflows, your brand guidelines, your data analysis methods, and your organizational processes.

https://resources.anthropic.com/hubfs/The-Complete-Guide-to-Building-Skill-for-Claude.pdf?hsLang=en

A Brief History: How Skills Evolved

Understanding when and why Anthropic introduced Skills helps explain why this matters now:

October 2024: Anthropic quietly launched Agent Skills as a beta feature. Initially, it was developer-focused—available through the API and Claude Code. The idea was simple: let developers package specialized capabilities that Claude could invoke when relevant.

December 18, 2024: The major expansion. Anthropic made Skills available across claude.ai, introduced organization-wide management for Team and Enterprise plans, launched a Skills Directory with partner-built skills from companies like Notion, Figma, Canva, and Atlassian, and most importantly, published Agent Skills as an open standard at agentskills.io.

January 2025: Skills became integrated with other Claude features like Memory, Extended Thinking, and the new Cowork agent. The Skills Directory expanded significantly, and custom skill creation became more accessible to non-technical users.

Current State (January 2026): Skills are now available to Pro, Max, Team, and Enterprise users. You can use pre-built Anthropic Skills (for Excel, PowerPoint, Word, PDFs), install partner Skills from the directory, or create custom Skills tailored to your exact workflows.

The evolution shows Anthropic's strategy: start with developers, prove the concept, then democratize it for everyone. And it's working.

What Claude Skills do?

Teach Claude ONCE, Benefit FOREVER (Zero Re-Explaining): Skills are instruction folders Claude loads automatically when relevant. Stop re-explaining your preferences, processes, and domain expertise in every single conversation. One folder = permanent memory of your workflow. Build in 15-30 minutes using the built-in skill-creator tool. Works for frontend design, research methodology, document creation, sprint planning, customer onboarding, and compliance workflows. Saves hours weekly across teams and individual users.

Progressive Disclosure = Minimal Token Usage (3-Level System): The 3-level system is genius: Level 1 (YAML frontmatter) always stays in Claude's system prompt — just enough for Claude to know WHEN to activate. Level 2 (SKILL.md body) loads only when relevant. Level 3 (linked files) loads only as needed. Result: specialized expertise without bloating every conversation. Same AI power, fraction of the token cost. An estimated 50%+ reduction in tokens per workflow session versus re-prompting every chat. Keep SKILL.md under 5,000 words, move detailed docs to references/ folder

One Skill = All Surfaces (100% Portability): Build a skill ONCE, and it works identically on Claude.ai, Claude Code, and API — no modifications needed. This is unprecedented in AI tooling. Competitors lock you into one interface. Skills follow you everywhere. Deploy to individuals, teams, or your entire organization from one central folder. Organization-wide deployment launched December 18, 2025, with automatic updates and centralized admin management for enterprise teams.

MCP + Skills = Your AI Employee (Kitchen Analogy): MCP provides the professional kitchen: real-time access to Notion, Asana, Linear, Slack, GitHub, and Figma. Skills provide the recipes: step-by-step instructions optimized for YOUR workflow. Without Skills, MCP users get tool access but no workflow guidance — resulting in support tickets and inconsistent results. With Skills, pre-built workflows activate automatically. Anthropic data shows users blame the MCP connector when the real issue is missing workflow knowledge.

5 Battle-Tested Patterns (Real-World Workflows): Anthropic released 5 proven workflow patterns from early adopters: Pattern 1 (Sequential Workflow) for multi-step processes in exact order. Pattern 2 (Multi-MCP Coordination) for workflows spanning Figma, Drive, Linear, and Slack simultaneously. Pattern 3 (Iterative Refinement) for self-validating output loops. Pattern 4 (Context-Aware Tool Selection) for smart routing to the right tool. Pattern 5 (Domain-Specific Intelligence) for compliance, finance, and legal workflows with embedded rules.

OPEN Standard = Platform-Independent (Like MCP): Anthropic published Agent Skills as an OPEN standard. Like MCP, skills work across AI platforms — not locked to Claude. Early ecosystem adoption already underway. Partners from Asana, Atlassian, Canva, Figma, Sentry, and Zapier have published skills. The GitHub repo anthropics/skills contains production-ready skills you can customize TODAY instead of building from scratch. Community-built skills are multiplying fast — early publishers gain maximum visibility and downloads.

Skill File Structure (15-Minute Setup): A skill is JUST a folder with 4 possible items: SKILL.md (required — Markdown with YAML frontmatter), scripts/ (optional Python or Bash), references/ (optional docs loaded on demand), assets/ (optional templates and icons). Folder name MUST be kebab-case (sprint-planner, NOT Sprint Planner or sprint_planner). SKILL.md must be EXACTLY that spelling — case-sensitive. No README.md inside the skill folder. Start with just SKILL.md — that is the entire minimum viable skill.

API Integration = Production-Scale Deployment (/v1/skills): For production applications, the /v1/skills endpoint lets you manage skills programmatically. Add skills to Messages API requests via the container.skills parameter. Version control through Claude Console. Works with Claude Agent SDK for custom AI agents. Requires Code Execution Tool beta access. Use the API for production deployments, automated pipelines, and agent systems. Individual users should use Claude.ai. API skills unlock enterprise-grade AI workflow automation at unlimited scale.

Trigger Optimization = 90%+ Auto-Activation Rate: The description field is EVERYTHING. Target: skill triggers on 90%+ of relevant queries automatically. Good descriptions include WHAT the skill does AND WHEN to use it with specific trigger phrases. Bad: "Helps with projects." Good: "Manages Linear sprint planning. Use when user mentions 'sprint', 'Linear tasks', or asks to 'create tickets'." Run 10-20 test queries. Ask Claude directly: "When would you use the [skill name] skill?" — Claude quotes your description back and reveals exactly what is missing.

Organization-Wide Skills Deployment (December 2025): Admins can now deploy skills workspace-wide — launched December 18, 2025. Automatic updates push to all users simultaneously. Centralized management from admin panel. One skill update standardizes EVERY team member's workflow instantly. No more inconsistent AI results between teammates who prompt differently. Companies building skills libraries NOW gain compounding efficiency advantages against competitors still re-explaining context every single chat session. This is the AI operations layer enterprises have been waiting for.

Why This Changes Everything

The End of Prompt Engineering (Finally): Prompt engineering required constant re-explanation, relied on individual memory, produced inconsistent results, and could not be shared easily. Skills are shareable, versioned, auto-activating, and organization-deployable. The shift is from art (crafting the perfect prompt) to engineering (building a reliable system). Early adopters building skills libraries NOW will have a 6-12 month workflow advantage over teams still copying prompts from Notion docs and Slack messages.

Solves the "New Chat = New Explanation" Problem: Every new Claude conversation resets context completely. Skills eliminate this permanently. Claude loads your workflow automatically based on what you say — no explicit trigger needed. Power users currently spend 10-20% of their AI time re-establishing context every session. Skills compress that to near-zero. The compounding time savings across 1,000+ sessions per year is enormous — estimated 200+ hours annually per person recovered from pure context-setting overhead.

Democratizes Expert AI Workflows: Previously, getting Claude to follow expert workflows required prompt engineering knowledge, time, and extensive trial-and-error. Skills democratize this: download a community-built skill from GitHub, upload to Claude.ai in 60 seconds, and instantly access workflows built by domain experts. A junior marketer can now operate with the same AI workflow sophistication as a senior AI engineer. The public skills marketplace is still early — first movers can publish skills that thousands of users download and benefit from.

MCP Adoption Accelerator (Critical for Developers): Without skills, MCP integrations face user abandonment: users connect the tool but don't know how to use it effectively. With skills, MCP becomes turnkey. Anthropic's research shows users blame the MCP connector when the real issue is missing workflow guidance. Skills fix this permanently. If you've built an MCP server, adding a companion skill dramatically increases user retention, reduces support tickets, and differentiates your product from the growing field of MCP-only competitors.

Compounding Efficiency = Compounding Advantage: Every skill you build is a one-time investment that pays dividends indefinitely. Build a 30-minute skill today, save 5 minutes per workflow session. At 10 sessions per day = 50 minutes saved daily = 250 minutes per week = 200+ hours per year per person. Teams of 10 = 2,000+ hours annually recovered from a single well-built skill. Organizations building skills libraries systematically gain structural productivity advantages that compound over time against competitors still working session-by-session.

When something deserves to become a Skill

The Skill doesn’t come first. What comes first is a pattern.

Every time I faced messy strategic notes, I was unconsciously applying the same mental structure:

Clarify the context → Extract key signals → Surface risks → Compare trade-offs → Present structured options → Define next steps

That repetition is what changed everything.

Because repetition is the signal that something deserves structure.

Here are the problems I had before using skills (and what they led to):

No defined role → Inconsistent responses

No rules → Shifts in tone

No boundaries → Unnecessary creative drift

No environment → Constant repetition

That shift didn’t happen because I created a Skill. It happened because I stopped improvising.

Structure came first. Automation came second.

The hidden step most people skip can be summarized like this:

The difference is massive. In the first case (left side), you automate an idea. In the second case (right side), you automate a proven framework.

A Skill is not a clever idea. It is a structure that has already proven it works multiple times.

Skills vs. Other Claude Features: What's the Difference?

Claude has several features that sound similar. Here's how they differ:

Skills vs. Projects:

Projects provide static background context that's always loaded when you're in that project

Skills provide dynamic procedures that only load when relevant

Use Projects for persistent knowledge, Skills for repeatable processes

Skills vs. Custom Instructions:

Custom Instructions apply broadly to all your conversations

Skills are task-specific and only activate when needed

Custom Instructions set general preferences; Skills provide specialized procedures

Skills vs. MCP Connectors:

MCP Connectors give Claude access to external tools and data (like Notion, Slack)

Skills teach Claude how to use those tools effectively

They work together: MCP provides access, Skills provide process

Example: An MCP connector gives Claude access to your Notion workspace. A Skill teaches Claude how to format meeting notes according to your team's specific template within Notion.

Potential Drawbacks and Limitations

Let's be honest about where Skills fall short:

1. Requires Code Execution to be Enabled

Skills use Claude's code execution capability. If you've disabled this for security reasons, Skills won't work. This is a consideration for enterprises with strict security policies.

2. Initial Setup Time

Creating good Skills takes time. You need to document processes clearly, provide examples, and iterate based on results. The ROI is there, but it's not instant.

3. Not Ideal for Highly Dynamic Processes

If your workflow changes constantly, maintaining Skills becomes overhead. They're best for processes that are reasonably stable.

4. Potential for Over-Reliance

There's a risk that teams become dependent on Skills without understanding the underlying processes. Junior PMs might use a "Feature Spec" Skill without learning what makes a good spec.

5. Quality Depends on Quality of Instructions

Garbage in, garbage out. Poorly written Skill instructions produce inconsistent results. This requires thoughtful documentation.

6. Limited to Available Plans

Skills are only available on Pro, Max, Team, and Enterprise plans. Free-tier users can't access them.

Finding & Installing Third-Party Skills

- Official Anthropic Repositories

github.com/anthropics/skills → Official skill examples + production document skills (docx, pdf, pptx, xlsx). Apache 2.0 for examples; source-available for doc skills. Functions as a Claude Code Plugin marketplace.

github.com/anthropics/claude-plugins-official → Official plugin marketplace directory. Third-party partners can submit.

github.com/anthropics/knowledge-work-plugins → 11 role-based plugins (Sales, Support, Product Management, Finance, Data, etc.) with skills, commands, and MCP connectors. Open source.

github.com/anthropics/life-sciences → Life sciences MCP servers and skills (PubMed, BioRender, etc.)

- Community Skill Directories & Marketplaces

skills.sh → Primary distribution hub. npx skills add . Leaderboard, multi-platform.

skillsmp.com → Independent directory, aggregates from GitHub. Min 2-star filter.

skillhub.club → 20K+ skills with AI-evaluated quality ratings. Has a playground.

hub.skild.sh → Another community registry

agentskill.sh → 25K+ skills directory, browseable by category/platform

- Key Community Projects

obra/superpowers → Complete software dev workflow: brainstorm → plan → TDD → implement → review. 20+ battle-tested skills. The gold standard for skill composition. Install: /plugin marketplace add obra/superpowers-marketplace then /plugin install superpowers@superpowers-marketplace

obra/superpowers-skills → Community-editable extension to Superpowers

obra/superpowers-lab → Experimental skills (tmux for interactive commands, etc.)

travisvn/awesome-claude-skills → Curated awesome-list of skills, resources, and tools

numman-ali/openskills → Universal skills loader. npx openskills install anthropics/skills. Works across Claude Code, Cursor, Windsurf, Aider, Codex, etc.

vercel-labs/agent-skills → Vercel’s official skills: React, Next.js, React Native patterns + Vercel deploy skill

qufei1993/skills-hub → Desktop app: “Install once, sync everywhere” — manages skills across multiple AI tools

inference.sh skills → 150+ cloud AI app skills (image gen, video, TTS, search) via infsh CLI

Building Your Own Skills — Best Practices

Design Principles

Single-purpose: “SEO optimization for blog posts” is good. “Content marketing helper” is too broad. “Add meta descriptions” is too narrow.

Description is king: Claude routes based on the

descriptionfield. Vague = missed triggers. Overly generic = false triggers. Be specific about when and what.Progressive disclosure: Keep SKILL.md lean. Reference files for deep details. Scripts for deterministic steps.

Imperative instructions: Write as if onboarding a smart junior engineer. Be explicit about steps, inputs, outputs.

Include examples: Show expected inputs and outputs. Show what “good” looks like.

Token budget: Metadata ~50-100 tokens. Full SKILL.md < 5000 tokens. Reference files on-demand.

The Description Field — Most Important 200 Characters You’ll Write

Bad:

yaml

description: Helps with code

Good:

yaml

description: >-

Generate FastAPI backend with React+Vite frontend, Tailwind, shadcn/ui,

and OpenAI integration. Use when asked to scaffold a full-stack AI app.

Claude uses semantic matching, not keyword matching — but vague descriptions still reduce accuracy.

Skill Structure Patterns

Pattern 1: Instructions-only (simplest)

my-skill/

└── SKILL.md # All instructions in one file

Pattern 2: Instructions + References

brand-guidelines/

├── SKILL.md # Overview + when to apply

└── references/

├── colors.md # Detailed color specs

├── typography.md # Font rules

└── voice.md # Tone of voice guide

Pattern 3: Instructions + Scripts (executable)

data-pipeline/

├── SKILL.md

├── scripts/

│ ├── validate.py

│ └── transform.sh

└── templates/

└── output-schema.json

Pattern 4: Full production skill (like the built-in docx skill)

docx/

├── SKILL.md

├── LICENSE.txt

├── scripts/

│ ├── office/

│ │ ├── soffice.py

│ │ ├── unpack.py

│ │ └── validate.py

│ └── accept_changes.py

├── references/

│ └── REFERENCE.md

└── examples/

└── sample-doc.docx

Creating Skills with the Skill-Creator

Claude has a built-in skill-creator skill (available in claude.ai and as a plugin). The workflow:

Tell Claude “I want to create a skill for X”

It interviews you about the workflow

Generates the SKILL.md and folder structure

Creates test prompts

Runs Claude-with-skill on them

You evaluate results

Iterate until satisfied

You can also use the eval/benchmark system for more rigorous testing:

Eval mode: Test individual prompts, compare with/without skill

Improve mode: Iterative optimization with blind A/B comparisons

Benchmark mode: Standardized measurement with variance analysis (3x runs per config)

Claude Code-Specific Features

In Claude Code, skills gain extra powers via frontmatter:

yaml

---

name: deep-research

description: Research a topic thoroughly

context: fork # Runs in a forked subagent

agent: Explore # Uses the Explore agent (read-only tools)

---

Options for context:

Default (omitted): Claude loads it when relevant

fork: Runs as a separate subagent task

Options for agent:

Explore: Read-only codebase explorationPlan: Planning-focusedCustom: Any subagent from

.claude/agents/

Skills can also be invoked as slash commands:

.claude/skills/review/SKILL.mdcreates/review(This replaced the older

.claude/commands/system)

Getting-Up-To-Speed - Practical Plan

Here I outline a practical approach on how to multiply your productivity by integrating agentic skills into your day-to-day workflow with Claude, Codex, or any other AI agent tool of your choice (using Claude as an example).

- Foundation (2-3 hours)

Understand the landscape

Read the Agent Skills specification (15 min)

Read Anthropic’s blog post introducing skills (10 min)

Read the how to create skills guide (15 min)

Read the Claude Code skills docs (15 min)

Read Lee Han Chung’s deep dive on skill architecture (20 min)

Explore existing skills

Browse github.com/anthropics/skills — read 3-4 example SKILL.md files to see patterns

Browse skills.sh and skillsmp.com to see what the community has built

Install Superpowers in Claude Code:

/plugin marketplace add obra/superpowers-marketplace+/plugin install superpowers@superpowers-marketplaceRead Jesse Vincent’s blog post on Superpowers — this is the best practical write-up on skill composition

Browse travisvn/awesome-claude-skills for curated resources

Try it hands-on

Ask Claude (in claude.ai or Claude Code) to “create a skill for [something you do repeatedly]” — use the skill-creator

Test the generated skill on a real task

Iterate once or twice based on results

- Build Your System (3-4 hours)

Set up your skills infrastructure

Create a GitHub repo for your personal skills (

your-username/claude-skills)Add a

marketplace.jsonto make it a Claude Code marketplaceSet up Obsidian vault with the Skills/ and Workflows/ structure described above

Install the Obsidian Git plugin and connect to your skills repo

Create a SKILL.md template in Obsidian Templater

Build your first 3 skills Start with workflows you repeat across projects:

Your project scaffolding workflow — how you set up a new project (tech stack, folder structure, CI, etc.)

Your code review checklist — your standards for reviewing code

Your product spec process — how you go from idea to spec

For each:

Document the workflow in Obsidian Workflows/

Draft the SKILL.md

Push to Git

Register your marketplace in Claude Code

Test on a real task

Iterate

Day 5: Explore the API surface

Read the Skills API docs

Try uploading a custom skill via the API

Understand the

/v1/skillsendpoints for programmatic management

- Scale & Parallelize (3-4 hours)

Build product-specific skills For each of your product ideas, create:

Domain knowledge skill (what this product does, its entities, its terminology)

Architecture skill (your preferred stack, patterns, conventions for this product)

Testing skill (how you want tests structured for this product)

Explore advanced patterns

Read the MCP builder skill — skills + MCP is a powerful combination

Try

context: forkand subagent delegation in Claude CodeExplore anthropics/knowledge-work-plugins for the plugin architecture pattern

Install

openskills(npx openskills install anthropics/skills) for cross-tool skill management

Automate and refine

Set up GitHub Actions to validate your skills on push (use

skills-refPython library)Create a “meta-skill” that helps you create new skills faster (or use the built-in skill-creator as a base)

Document your skills system in your Obsidian vault so future-you (and future-Claude) can maintain it

https://codeagentsalpha.substack.com/p/claude-agent-skills-complete-getting

https://sifuyik.substack.com/p/anthropic-dropped-the-32-page-internal

https://artificialcorner.com/p/claude-code-skills

https://www.youngleaders.tech/p/claude-skills-commands-subagents-plugins

https://aiblewmymind.substack.com/p/claude-skills-36-examples

Spec-Driven Development

Like with many emerging terms in this fast-paced space, the definition of “spec-driven development” (SDD) is still in flux. Here’s what I can gather from how I have seen it used so far: Spec-driven development means writing a “spec” before writing code with AI (“documentation first”). The spec becomes the source of truth for the human and the AI.